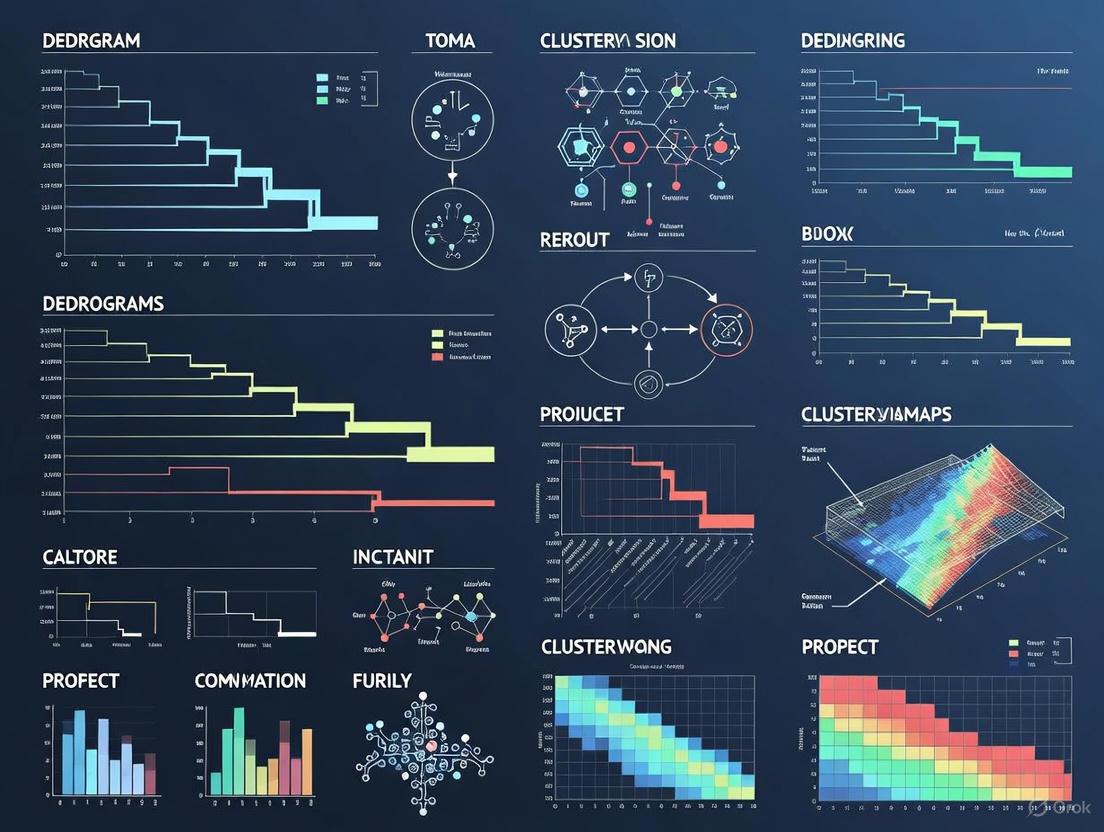

Mastering Dendrograms and Clustering in Heatmaps: A Practical Guide for Biomedical Researchers

Abstract

This comprehensive guide empowers researchers, scientists, and drug development professionals to correctly interpret and implement heatmaps with hierarchical clustering. Covering foundational principles to advanced validation techniques, it explores the crucial choices of distance metrics and linkage methods, provides practical implementation code in R and Python, addresses common pitfalls, and introduces statistical validation and interactive tools. The article demonstrates how these powerful visualization techniques can uncover biological patterns, identify disease subtypes, and accelerate discovery in genomics, clinical research, and drug development.

Understanding the Basics: What Heatmaps and Dendrograms Reveal About Your Data

In data-driven fields such as bioinformatics, drug discovery, and genomics, researchers routinely analyze high-dimensional datasets to uncover hidden patterns. Two powerful visualization techniques have emerged as essential tools for this task: heatmaps (color-coded matrices) and dendrograms (hierarchical trees). When combined, they form a "cluster heatmap" that provides a multi-faceted view of data structure, enabling researchers to simultaneously observe patterns in the data matrix and the hierarchical clustering of both rows and columns [1]. This integrated approach is particularly valuable for analyzing gene expression data, drug response patterns, and other complex biological datasets where both individual values and grouping relationships are critical for interpretation. The visual convergence of color representation and tree-based hierarchy creates an intuitive yet powerful analytical tool that serves as a cornerstone for exploratory data analysis in scientific research.

Core Concept Analysis: Definitions and Theoretical Foundations

Heatmaps: Visual Matrices of Data Intensity

A heatmap is a graphical representation of data where individual values contained in a matrix are represented as colors [2]. This visualization technique transforms numerical matrices into intuitive color-coded images, allowing for rapid pattern recognition that would be difficult to discern from raw numbers alone. The power of heatmaps lies in the human visual system's superior ability to distinguish colors compared to interpreting numerical values. Heatmaps are particularly appropriate when analyzing large datasets because color is easier to interpret and distinguish than raw values [2].

In scientific practice, heatmaps serve multiple visualization purposes. They commonly display gene expression levels across different experimental samples or conditions, reveal correlation patterns between variables, showcase disease incidence across geographical regions, identify hot/cold zones in spatial analyses, and represent topological information [2]. The versatility of heatmaps across these diverse applications stems from their ability to compactly summarize complex multivariate relationships in an intuitively accessible format.

Dendrograms: Hierarchical Tree Diagrams

A dendrogram (or tree diagram) is a network structure that visualizes hierarchy or clustering in data [2]. These tree-like diagrams represent the arrangement of clusters produced by hierarchical clustering, with the vertical (or horizontal) position of each branch point indicating the similarity between connected elements [3]. Dendrograms provide not only information about which data points belong together but also how close or far apart different groups are in terms of similarity, offering insights into the nested relationships and varying levels of granularity in data [3].

The structure of a dendrogram consists of leaves (individual data points) at the bottom, branches that connect points and clusters, and a root at the top that represents the single cluster containing all data points. The height at which two branches merge indicates the distance or dissimilarity between the clusters: a low merge height signifies high similarity, while a high merge height indicates low similarity [3]. This hierarchical representation allows researchers to understand cluster structure at multiple resolution levels, from fine-grained subgroups to broad categories.

Integrated Cluster Heatmaps

When heatmaps and dendrograms are combined, they form a "cluster heatmap" that simultaneously visualizes the data matrix and the clustering structure on both dimensions [1]. In this integrated visualization, the dendrograms positioned along the top and/or side illustrate the similarity and grouping of rows and columns, while the heatmap uses color gradients to display data intensity [4]. This combination enables researchers to correlate patterns in the data values (shown as colors) with the hierarchical grouping structure (shown by the dendrogram), facilitating deeper insights than either component could provide alone.

Table 1: Core Components of a Cluster Heatmap

| Component | Function | Visual Elements |
|---|---|---|
| Heatmap Matrix | Displays data values | Color-coded cells where color intensity represents value magnitude |
| Row Dendrogram | Shows clustering of row entities | Tree diagram along rows displaying hierarchical relationships |
| Column Dendrogram | Shows clustering of column entities | Tree diagram along columns displaying hierarchical relationships |
| Color Legend | Interprets color encoding | Scale relating colors to numerical values |
| Annotation | Adds metadata | Colored bars labeling groups or conditions |

Mathematical Foundations: Distance, Linkage, and Clustering

Distance Metrics for Clustering

At the heart of dendrogram construction lies the concept of dissimilarity or distance between data points. The choice of distance metric significantly influences the resulting dendrogram structure and must be carefully selected based on data characteristics and analytical goals [3].

Table 2: Common Distance Metrics in Hierarchical Clustering

| Metric | Formula | Best Use Cases |
|---|---|---|
| Euclidean | \( d(x,y) = \sqrt{\sum_i (x_i - y_i)^2} \) | Continuous, normally distributed data; sensitive to scale |
| Manhattan | \( d(x,y) = \sum_i \lvert x_i - y_i \rvert \) | Grid-like or high-dimensional sparse data |
| Cosine | \( d(x,y) = 1 - \frac{x \cdot y}{\lVert x \rVert \, \lVert y \rVert} \) | Text or document clustering where magnitude doesn't matter |
| Correlation | \( d(x,y) = 1 - r(x,y) \), where \( r \) is the Pearson correlation | Data where pattern similarity matters more than absolute values |

Euclidean distance represents the straight-line distance in feature space and is ideal for continuous, normally distributed data, though it is sensitive to scale variations [3]. Manhattan distance sums the absolute differences along each dimension, making it useful for grid-like or high-dimensional sparse data such as text features. Cosine similarity (often converted to distance) measures the angle between vectors rather than magnitude differences, making it particularly valuable for text mining or document clustering where the direction of the vector matters more than its length [3].
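As a minimal sketch of how the four metrics in Table 2 behave, the snippet below evaluates them with SciPy on two illustrative vectors. The vectors `x` and `y` are invented for this example; `y` has the same shape as `x` at twice the magnitude, so the magnitude-sensitive metrics report a large distance while the shape-based ones report essentially zero.

```python
import numpy as np
from scipy.spatial.distance import cityblock, correlation, cosine, euclidean

# Illustrative profiles (assumed data): y follows the same pattern as x, scaled by 2.
x = np.array([1.0, 2.0, 3.0, 4.0])
y = 2.0 * x

distances = {
    "euclidean": euclidean(x, y),      # sqrt(sum((x_i - y_i)^2))
    "manhattan": cityblock(x, y),      # sum(|x_i - y_i|)
    "cosine": cosine(x, y),            # 1 - cosine of the angle between x and y
    "correlation": correlation(x, y),  # 1 - Pearson correlation
}

for name, value in distances.items():
    print(f"{name:12s} {value:.4f}")
```

Euclidean and Manhattan distances grow with the difference in magnitude, whereas cosine and correlation distances stay near zero because the two profiles point in the same direction.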

Linkage Criteria

Once distances between individual points are computed, linkage criteria determine how to measure dissimilarity between clusters (sets of points). This choice fundamentally shapes the dendrogram's branching pattern and the resulting cluster properties [3].

Table 3: Linkage Methods in Hierarchical Clustering

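| Method | Formula | Cluster Characteristics |
|---|---|---|
| Single Linkage | \( d(A,B) = \min_{a \in A,\, b \in B} d(a,b) \) | Promotes chaining; can handle non-spherical shapes |
| Complete Linkage | \( d(A,B) = \max_{a \in A,\, b \in B} d(a,b) \) | Produces compact, spherical clusters; sensitive to outliers |
| Average Linkage | \( d(A,B) = \frac{1}{\lvert A \rvert \lvert B \rvert} \sum_{a \in A} \sum_{b \in B} d(a,b) \) | Balanced approach; less prone to extremes |
| Ward's Method | \( d(A,B) = \sqrt{\frac{2 \lvert A \rvert \lvert B \rvert}{\lvert A \rvert + \lvert B \rvert}} \, \lVert \mu_A - \mu_B \rVert \), where \( \mu_A \) and \( \mu_B \) are the cluster centroids | Statistically robust; minimizes variance increase |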

Single linkage, also known as nearest neighbor, measures the minimum distance between points in two clusters and can promote chaining (long, strung-out clusters) but handles non-spherical shapes well [3]. Complete linkage (farthest neighbor) uses the maximum distance between points in two clusters, producing compact, spherical clusters but showing sensitivity to outliers. Average linkage (UPGMA) takes a balanced approach by calculating the average distance between all pairs of points in the two clusters, making it less prone to the extremes of single or complete linkage [3]. Ward's method is statistically robust, minimizing the increase in total within-cluster variance after merging, and often yields particularly interpretable dendrograms for scientific data [3].
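To make the effect of the linkage choice concrete, the following sketch runs SciPy's `linkage` on the same toy points with each method in Table 3 and prints the merge heights. The one-dimensional points are an assumption chosen so that the gaps widen from left to right.

```python
import numpy as np
from scipy.cluster.hierarchy import linkage

# Toy data (assumed): five points on a line with widening gaps.
points = np.array([[0.0], [1.0], [3.0], [7.0], [12.0]])

methods = ("single", "complete", "average", "ward")
final_heights = {}
for method in methods:
    Z = linkage(points, method=method, metric="euclidean")
    # Column 2 of the linkage matrix holds the height of each merge.
    final_heights[method] = Z[-1, 2]
    print(f"{method:9s}", np.round(Z[:, 2], 3))
```

The tree topology can be identical across methods while the merge heights differ substantially, which is why dendrogram heights are only comparable within a single linkage method.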

Experimental Protocols and Implementation

Workflow for Cluster Heatmap Generation

[Diagram: complete workflow for generating a cluster heatmap, from data preparation to final visualization]

Data Preprocessing and Scaling Protocol

Prior to generating a heatmap, proper data preprocessing is essential. For the airway RNA-seq dataset (a common benchmark in bioinformatics), the protocol begins with normalization to make samples comparable. The data are normalized count values (log2 counts per million, or log2 CPM) for differentially expressed genes [2]. For many analyses, further scaling is recommended so that variables with large values do not dominate the clustering. A common method is z-score standardization, calculated as z = (individual value - mean) / standard deviation, which expresses how many standard deviations a value lies from the mean [2].

The scaling protocol involves:

- Data Transformation: Apply logarithmic transformation to reduce skewness in data distributions, particularly for gene expression values [2]

- Normalization: Adjust for technical variations between samples using methods like CPM (counts per million) for sequencing data [2]

- Standardization: Apply z-score transformation either by rows, columns, or both to ensure comparability [2]

- Missing Value Imputation: Address missing data using appropriate methods (k-nearest neighbors, mean imputation) specific to the data type

Failure to properly scale data can lead to misleading clusters, as variables with larger scales will disproportionately influence distance calculations [2].
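The normalization and standardization steps above can be sketched in NumPy as follows. This is an illustration under stated assumptions, not the exact procedure used for the airway dataset: the function names, the pseudocount `prior_count`, and the tiny count matrix are all hypothetical.

```python
import numpy as np

def log2_cpm(counts, prior_count=1.0):
    """Library-size normalization (counts per million) followed by log2.

    counts: genes x samples matrix of raw read counts.
    prior_count: pseudocount added before the log (an assumption of this sketch).
    """
    counts = np.asarray(counts, dtype=float)
    library_sizes = counts.sum(axis=0, keepdims=True)
    cpm = counts / library_sizes * 1e6
    return np.log2(cpm + prior_count)

def zscore_rows(matrix):
    """z = (value - row mean) / row standard deviation, computed per gene."""
    matrix = np.asarray(matrix, dtype=float)
    mean = matrix.mean(axis=1, keepdims=True)
    sd = matrix.std(axis=1, ddof=1, keepdims=True)
    sd[sd == 0] = 1.0  # constant rows stay at zero instead of dividing by zero
    return (matrix - mean) / sd

# Hypothetical raw counts: 3 genes x 3 samples.
counts = np.array([[10, 20, 30], [100, 50, 25], [5, 5, 5]])
z = zscore_rows(log2_cpm(counts))
print(np.round(z, 3))
```

After row-wise z-scoring, every gene has mean 0 and standard deviation 1 across samples, so no single high-abundance gene dominates the distance calculation.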

Dendrogram Construction Methodology

The construction of dendrograms typically follows the agglomerative hierarchical clustering algorithm, which builds the tree bottom-up [3]. The formal algorithm consists of:

1. Initialization: Treat each of the n data points as a singleton cluster. Compute the n×n distance matrix D using the chosen metric [3]

2. Iterative Merging: Identify the two clusters with the smallest distance based on the linkage criterion and merge them into a new cluster [3]

3. Distance Update: Update the distance matrix to reflect distances between the new cluster and all remaining clusters according to the linkage method [3]

4. Repetition: Repeat steps 2-3 until all points are members of a single cluster [3]

5. Tree Formation: Record each merge in a linkage matrix containing the indices of merged clusters, the distance at which they merged, and the size of the new cluster [3]
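The algorithm above can be sketched as a deliberately naive implementation with average linkage. It is written for readability rather than speed (roughly cubic time), the function name is hypothetical, and instead of updating the distance matrix in place it recomputes each cluster-to-cluster average from the original pairwise distances, which gives the same result for average linkage.

```python
import numpy as np

def agglomerative_average(points):
    """Naive agglomerative clustering with average linkage and Euclidean distance.

    Returns rows of (cluster id a, cluster id b, merge height, new cluster size).
    """
    points = np.asarray(points, dtype=float)
    n = len(points)
    # Initialization: singleton clusters and the n x n pairwise distance matrix.
    diff = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    clusters = {i: [i] for i in range(n)}  # cluster id -> member indices
    merges = []
    next_id = n
    while len(clusters) > 1:
        # Iterative merging: find the pair with the smallest average distance.
        best = None
        ids = sorted(clusters)
        for a_pos, a in enumerate(ids):
            for b in ids[a_pos + 1:]:
                d = dist[np.ix_(clusters[a], clusters[b])].mean()
                if best is None or d < best[0]:
                    best = (d, a, b)
        d, a, b = best
        # Tree formation: record the merge and give the new cluster a fresh id.
        members = clusters.pop(a) + clusters.pop(b)
        clusters[next_id] = members
        merges.append([a, b, d, len(members)])
        next_id += 1
    return np.array(merges)

Z = agglomerative_average([[0.0], [1.0], [3.0], [7.0], [12.0]])
print(np.round(Z, 3))
```

The returned array follows the same four-column layout as the linkage matrix described in the last step, so merge heights can be read directly from its third column.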

[Diagram: dendrogram interpretation process]

Color Scale Selection Protocol

The choice of color scale significantly impacts heatmap interpretability. For scientific visualization, two primary color scale types are recommended [5]:

Sequential scales use blended progression, typically of a single hue, from least to most opaque shades, representing low to high values. These are ideal for data with a natural progression from low to high, such as raw TPM values (all non-negative) in gene expression analysis [5].

Diverging scales show color progression in two directions from a neutral central color, gradually intensifying different hues toward both ends. These are appropriate when a reference value exists in the middle of the data range (such as zero or an average value), such as when displaying standardized TPM values that include both up-regulated and down-regulated genes [5].

Critical considerations for color scale selection include:

- Avoiding rainbow scales which create misperception of data magnitude and lack consistent direction [5]

- Ensuring color-blind-friendly combinations (blue & orange, blue & red, blue & brown) [5]

- Maintaining sufficient contrast (minimum 3:1 ratio) for accessibility [6]

- Limiting color palette complexity to maintain interpretability [5]
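One way to encode the sequential-versus-diverging decision is a small helper that returns a colormap name and color limits. This is a rule-of-thumb sketch: the function name is hypothetical, and the two Matplotlib colormap names (`viridis` and `RdBu_r`) are illustrative choices rather than recommendations from the cited sources.

```python
import numpy as np

def choose_color_scale(values, center=None):
    """Return (colormap name, vmin, vmax) for a heatmap.

    center=None -> sequential scale spanning the data range.
    center=c    -> diverging scale with limits symmetric around c,
                   so the neutral color falls exactly on c.
    """
    values = np.asarray(values, dtype=float)
    lo, hi = np.nanmin(values), np.nanmax(values)
    if center is None:
        return "viridis", lo, hi
    half_range = max(hi - center, center - lo)
    return "RdBu_r", center - half_range, center + half_range

# Raw, non-negative values: sequential scale.
print(choose_color_scale([0.0, 5.0, 10.0]))
# Standardized values with a meaningful zero: diverging scale centered on 0.
print(choose_color_scale([-1.0, 0.0, 3.0], center=0.0))
```

Making the limits symmetric around the reference value keeps equal positive and negative deviations at equal color intensity, which an asymmetric range would distort.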

Advanced Applications in Research and Drug Development

Case Study: LINCS L1000 Dataset Analysis

A compelling application of cluster heatmaps in drug development involves the LINCS L1000 project, which profiles gene expression signatures of cell lines perturbed by chemical or genetic agents [1]. In this case study, researchers analyzed gene expression signatures of 297 bioactive chemical compounds to identify clusters with shared biological activities.

The experimental protocol involved:

- Data Acquisition: Downloading LINCS L1000 gene expression data from Gene Expression Omnibus [1]

- Signature Calculation: Computing differential expression signatures for each experiment using the characteristic direction method [1]

- Quality Assessment: Using the average cosine distance (ACD) between replicates to represent bioactivity strength [1]

- Data Filtering: Selecting named compounds tested in at least 10 experiments with ACD < 0.9 [1]

- Standardization: Applying z-score standardization along the column dimension [1]

- Clustering: Implementing average linkage clustering with row cosine distance and column correlation distance [1]
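The standardization and clustering steps of this protocol can be approximated with SciPy as shown below. The matrix is random synthetic data standing in for a compounds-by-genes signature matrix, not LINCS data, and reading "along the column dimension" as "each column scaled to mean 0 and standard deviation 1" is an assumption of this sketch.

```python
import numpy as np
from scipy.cluster.hierarchy import leaves_list, linkage
from scipy.stats import zscore

rng = np.random.default_rng(0)
# Synthetic stand-in (assumed): 12 compounds x 30 genes.
signatures = rng.normal(size=(12, 30))

# z-score each column so every gene has mean 0 and SD 1 across compounds.
scaled = zscore(signatures, axis=0, ddof=1)

# Average linkage with cosine distance on rows and correlation distance on columns.
row_linkage = linkage(scaled, method="average", metric="cosine")
col_linkage = linkage(scaled.T, method="average", metric="correlation")

# Reorder the matrix to match the leaf order of both dendrograms.
row_order = leaves_list(row_linkage)
col_order = leaves_list(col_linkage)
clustered = scaled[np.ix_(row_order, col_order)]
print(clustered.shape)
```

Using different metrics for rows and columns is possible because each dendrogram is computed independently from its own distance matrix.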

This analysis revealed seventeen biologically meaningful clusters based on dendrogram structure and heatmap expression patterns. Notably, researchers identified a previously unreported cluster consisting mostly of naturally occurring compounds with shared broad anticancer, anti-inflammatory, and antioxidant activities [1]. This discovery exemplifies how cluster heatmap analysis can uncover convergent biological effects through divergent mechanisms, particularly valuable for drug repurposing and understanding polypharmacology.

Interactive Tools for Complex Analysis

For large-scale studies, static cluster heatmaps are poorly suited to exploring complex dendrograms. Tools like DendroX have been developed to enable interactive visualization in which researchers can divide a dendrogram at any level and into any number of clusters [1]. This capability is particularly valuable when clusters sit at different levels of the dendrogram, requiring multiple cuts at different heights.

DendroX implementation features include:

- Web-based Interface: Front-end only app processing data within the browser without server communication [1]

- Dynamic Cluster Selection: Ability to select multiple clusters at different levels with distinct coloring [1]

- Cross-Platform Compatibility: Helper functions in R and Python to extract linkage matrices from cluster heatmap objects [1]

- Scalability: Tested on dendrograms with tens of thousands of leaf nodes [1]

This interactive approach solves the problem of matching visually and computationally determined clusters in complex heatmaps, enabling researchers to navigate different parts of a dendrogram and extract cluster labels for functional enrichment analysis [1].
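The need for cuts at several heights can be illustrated without any interactive tool. The sketch below uses SciPy's `fcluster`, not the DendroX API, on invented one-dimensional data containing two tight groups and one loose pair. No single cut height recovers all three groups, so labels from a high cut and a low cut are combined.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage

# Assumed data: tight groups near 0 and 5, plus a loose pair at 20 and 26.
values = np.array([0.0, 0.1, 0.2, 5.0, 5.1, 20.0, 26.0]).reshape(-1, 1)
Z = linkage(values, method="average")

coarse = fcluster(Z, t=10.0, criterion="distance")  # cut high in the tree
fine = fcluster(Z, t=1.0, criterion="distance")     # cut low in the tree

# Keep the loose pair as one coarse cluster, but split everything else finely.
loose_label = coarse[-1]
mixed = [
    f"coarse-{c}" if c == loose_label else f"fine-{f}"
    for c, f in zip(coarse, fine)
]
print(mixed)
```

The high cut merges the two tight groups, the low cut splits the loose pair into singletons, and only the combined labeling yields the three intended clusters.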

Table 4: Essential Computational Tools for Heatmap and Dendrogram Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| pheatmap R Package | Draws pretty heatmaps with extensive customization | Publication-quality static heatmaps; provides comprehensive features [2] |
| ComplexHeatmap Bioconductor | Arranges and annotates complex heatmaps | Genomic data analysis; integrating multiple data sources [7] |
| heatmaply R Package | Generates interactive heatmaps | Exploratory data analysis; mouse-over inspection of values [2] |
| dendextend R Package | Customizes dendrogram appearance | Enhanced visualization; coloring branches by cluster [8] |
| DendroX Web App | Interactive cluster selection | Multi-level cluster identification in complex dendrograms [1] |
| RColorBrewer Palette | Provides color-blind friendly palettes | Accessible visualization; sequential and diverging color schemes [7] |
| Seaborn Python Library | Generates cluster heatmaps | Python-based data analysis; integration with pandas dataframes [1] |

Table 5: Analytical Methods and Metrics for Cluster Validation

| Method | Purpose | Interpretation |
|---|---|---|
| Cophenetic Correlation | Measures how well dendrogram preserves original distances | Values closer to 1.0 indicate better representation [3] |
| Silhouette Score | Evaluates cluster cohesion and separation | Values range from -1 (poor) to +1 (excellent) [3] |
| Inconsistency Coefficient | Identifies natural cluster boundaries | Large jumps suggest optimal cut points [3] |
| Bootstrap Resampling (pvclust) | Assesses cluster stability | Provides p-values for branches via resampling [1] |
| Colless/Sackin Index | Quantifies tree imbalance | Flags potential data issues or meaningful asymmetry [3] |

These computational resources and validation metrics provide researchers with a comprehensive toolkit for generating, customizing, and validating cluster heatmaps across various research contexts, from exploratory analysis to publication-ready visualizations.
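Two of the metrics in Table 5 are available directly in SciPy. The sketch below computes the cophenetic correlation and the inconsistency coefficient on synthetic data with two well-separated groups. The data, the seed, and the depth `d=2` are assumptions made for illustration.

```python
import numpy as np
from scipy.cluster.hierarchy import cophenet, inconsistent, linkage
from scipy.spatial.distance import pdist

rng = np.random.default_rng(1)
# Synthetic data (assumed): two groups of 10 points in 5 dimensions.
data = np.vstack([rng.normal(0, 1, (10, 5)), rng.normal(8, 1, (10, 5))])

original = pdist(data, metric="euclidean")
Z = linkage(original, method="average")

# Cophenetic correlation: agreement between tree heights and original distances.
coph_corr, _ = cophenet(Z, original)

# Inconsistency coefficient (last column), comparing each merge with the
# merges up to two levels below it.
incons = inconsistent(Z, d=2)

print(f"cophenetic correlation: {coph_corr:.3f}")
print(f"inconsistency of final merge: {incons[-1, 3]:.3f}")
```

A cophenetic correlation near 1 indicates that the dendrogram distorts the original distances very little, while a high inconsistency value at a merge marks a candidate cut point just below it.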

In the realm of data analysis, particularly within biological sciences and drug development, researchers increasingly face the challenge of interpreting high-dimensional datasets where patterns remain hidden in rows and columns of numbers. The synergistic combination of heatmaps with dendrograms has emerged as a powerful solution to this problem, transforming raw data into intelligible visual patterns that reveal underlying structures and relationships. This integrated approach leverages the visual intensity of color gradients with the hierarchical grouping capabilities of clustering algorithms, creating a graphical representation that facilitates deeper insight into complex systems [4] [9].

The fundamental power of this combined visualization technique lies in its ability to simultaneously present two types of information: numerical values through color intensity and structural relationships through hierarchical clustering. When applied to research domains such as genomics or drug development, this approach enables scientists to quickly identify patterns of similarity and difference across multiple dimensions—for example, seeing which genes express similarly across patient groups or which compound structures cluster with known active agents [9]. This paper explores the technical implementation, methodological considerations, and practical applications of these combined visualization techniques within the broader context of dendrogram and clustering research, with specific attention to the needs of researchers and drug development professionals.

Theoretical Foundations

Heatmaps: Visualizing Data Intensity

A heatmap is a two-dimensional visualization that uses color to represent numerical values, creating an intuitive graphical representation of data matrices. The core components of a standard heatmap include:

- Color Gradients: Values are mapped to colors using either sequential palettes (for unidirectional data) or diverging palettes (for data with meaningful midpoints) [10]

- Grid Structure: Data points are arranged in a rectangular grid where rows typically represent observations (e.g., genes, patients) and columns represent variables or features [11]

- Intensity Encoding: Color intensity corresponds to the magnitude of the underlying data value, allowing rapid identification of "hot" and "cold" areas [10]

Heatmaps serve as particularly effective tools for visualizing high-dimensional data by transforming numerical tables into color-coded patterns that the human visual system can process more efficiently than raw numbers [9]. The effectiveness of a heatmap depends heavily on appropriate color selection, with sequential scales moving from lighter to darker shades representing continuously increasing values, and diverging palettes using contrasting hues to represent values above and below a critical point (such as zero) [10].

Dendrograms: Revealing Hierarchical Structure

Dendrograms are tree-like diagrams that illustrate the arrangement of clusters produced by hierarchical clustering algorithms. Key aspects include:

- Leaf Nodes: Represent individual data points or observations

- Branch Lengths: Correspond to the degree of similarity between clusters, with shorter branches indicating higher similarity [4]

- Cluster Formation: Groups are formed by progressively merging the most similar pairs of data points or clusters

The clustering process typically employs distance metrics (such as Euclidean or Manhattan distance) to quantify similarity and linkage criteria (such as complete, single, or average linkage) to determine how distances between clusters are calculated. The resulting dendrogram provides a visual representation of the hierarchical relationships within the data, revealing natural groupings that may not be apparent from the raw data alone.

The Synergistic Integration

When heatmaps and dendrograms are combined, they create a comprehensive analytical tool that exceeds the capabilities of either component alone. The integration works through:

- Dual Representation: The heatmap shows actual data values through color, while the dendrogram reveals structural relationships through branching patterns [4]

- Coordinated Sorting: Both rows and columns of the heatmap are reordered according to the hierarchical clustering results, grouping similar observations and variables together [9]

- Pattern Amplification: The combination allows researchers to simultaneously see data values and cluster memberships, making it easier to identify correlations and anti-correlations across variables [4] [9]

This synergistic relationship is particularly valuable in research contexts because it enables exploratory data analysis without requiring a priori hypotheses about group structures, while also providing a means to validate expected patterns and discover unexpected relationships.
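Coordinated sorting amounts to reordering the matrix by the leaf order of each dendrogram. The following sketch shows this with SciPy on a small invented matrix whose similar rows and columns start out interleaved.

```python
import numpy as np
from scipy.cluster.hierarchy import leaves_list, linkage

# Assumed data: rows 0 and 2 are similar, as are rows 1 and 3 (same for columns).
matrix = np.array([
    [9.0, 1.0, 8.0, 0.0],
    [0.0, 9.0, 1.0, 8.0],
    [8.0, 0.0, 9.0, 1.0],
    [1.0, 8.0, 0.0, 9.0],
])

# Cluster rows and columns independently, then read off the leaf order.
row_order = leaves_list(linkage(matrix, method="average"))
col_order = leaves_list(linkage(matrix.T, method="average"))

# Apply both orderings at once so similar rows and columns sit side by side.
reordered = matrix[np.ix_(row_order, col_order)]
print(reordered)
```

After reordering, the high values gather into contiguous blocks, which is the visual pattern a cluster heatmap is designed to expose.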

Methodological Implementation

Data Preparation and Standardization

Effective implementation of heatmaps with dendrograms requires careful data preprocessing to ensure meaningful results. Key preparation steps include:

- Data Normalization: Converting raw measurements to comparable scales through Z-score transformation, log transformation, or other normalization techniques to account for different measurement units or scales [9]

- Missing Value Handling: Implementing appropriate strategies for dealing with incomplete data points, which could include imputation or exclusion

- Data Structuring: Organizing data into a matrix format where rows represent observations and columns represent features [11]

Table 1: Data Standardization Methods for Heatmap Visualization

| Method | Use Case | Formula | Impact on Visualization |
|---|---|---|---|
| Z-score Standardization | Variables with different units | \( z = \frac{x - \mu}{\sigma} \) | Centers data around mean with unit variance; enables comparison across variables |
| Log Transformation | Skewed data distributions | \( x' = \log(x) \) | Reduces impact of extreme values; improves color distribution |
| Min-Max Scaling | Preserving original distribution | \( x' = \frac{x - \min(x)}{\max(x) - \min(x)} \) | Scales data to fixed range (e.g., 0-1); maintains shape of original distribution |
| Unit Vector Transformation | Direction-focused analysis | \( x' = \frac{x}{\lVert x \rVert} \) | Normalizes samples to unit norm; emphasizes pattern direction over magnitude |

For research applications, normalization is particularly critical when analyzing data from multiple sources or with inherently different scales, such as gene expression levels across different experimental conditions [9]. Without proper standardization, the resulting visualizations may emphasize technical artifacts rather than biological patterns.
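The four transformations in the table translate almost directly into NumPy. The sketch below applies each to a single illustrative vector. The function names are hypothetical, the use of the sample standard deviation (`ddof=1`) is an assumption, and the log transform as written requires strictly positive values.

```python
import numpy as np

def zscore(x):
    """z = (x - mean) / standard deviation (sample SD assumed here)."""
    return (x - x.mean()) / x.std(ddof=1)

def log_transform(x):
    """x' = log(x); valid only for strictly positive values."""
    return np.log(x)

def min_max(x):
    """x' = (x - min) / (max - min), mapping values onto [0, 1]."""
    return (x - x.min()) / (x.max() - x.min())

def unit_vector(x):
    """x' = x / ||x||, giving a vector of Euclidean length 1."""
    return x / np.linalg.norm(x)

# Illustrative skewed values spanning three orders of magnitude.
x = np.array([1.0, 10.0, 100.0, 1000.0])
for transform in (zscore, log_transform, min_max, unit_vector):
    print(f"{transform.__name__:14s}", np.round(transform(x), 3))
```

On skewed input like this, min-max scaling and unit-vector scaling leave three of the four values crowded near zero, whereas the log transform spaces them evenly, which is why it tends to improve the color distribution.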

Clustering Methodologies

Hierarchical clustering forms the computational foundation for dendrogram generation. The process involves:

- Distance Matrix Calculation: Computing pairwise distances between all observations using an appropriate distance metric

- Cluster Formation: Iteratively merging the closest pairs of points or clusters based on the selected linkage criterion

- Tree Construction: Building the dendrogram to represent the sequence of merging operations and similarity levels at which merges occur

Table 2: Clustering Algorithm Components and Their Applications

| Component | Options | Research Context | Advantages | Limitations |
|---|---|---|---|---|
| Distance Metric | Euclidean, Manhattan, Correlation, Cosine | Euclidean: General use; Correlation: Pattern similarity | Euclidean: Geometrically intuitive; Correlation: Shape-focused | Euclidean: Scale-sensitive; Correlation: Magnitude insensitive |
| Linkage Criterion | Complete, Average, Single, Ward's | Ward's: Compact spherical clusters; Average: Balanced approach | Ward's: Minimizes variance; Complete: Compact clusters | Single: Chain effect; Complete: Outlier sensitivity |
| Implementation | Agglomerative, Divisive | Agglomerative: Most common; Divisive: Top-down approach | Agglomerative: Guaranteed results; Divisive: Global structure consideration | Agglomerative: Computational intensity; Divisive: Implementation complexity |

The choice of clustering parameters significantly impacts the resulting visualization and should be guided by the research question and data characteristics. For instance, in gene expression analysis, correlation-based distance metrics often prove more meaningful than Euclidean distance because they cluster genes with similar expression patterns across conditions regardless of absolute magnitude [9].
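The gene expression point can be demonstrated on a toy example. In the sketch below, four invented "genes" are clustered twice with SciPy: Euclidean distance groups them by expression level, while correlation distance groups them by the direction of their pattern across conditions.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage

# Assumed profiles across four conditions:
# genes 0 and 1 rise, genes 2 and 3 fall; genes 1 and 3 are roughly 10x higher.
genes = np.array([
    [1.0, 2.0, 3.0, 4.0],
    [10.0, 21.0, 29.0, 40.0],
    [4.0, 3.0, 2.0, 1.0],
    [40.0, 29.0, 21.0, 10.0],
])

labels = {}
for metric in ("euclidean", "correlation"):
    Z = linkage(genes, method="average", metric=metric)
    # Cut each tree into exactly two clusters.
    labels[metric] = fcluster(Z, t=2, criterion="maxclust")
    print(f"{metric:12s}", labels[metric])
```

With Euclidean distance the two low-abundance genes cluster together despite opposite trends. With correlation distance the two rising genes form one cluster and the two falling genes the other.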

Visualization Techniques

Creating effective heatmap-dendrogram combinations requires attention to several visualization principles:

- Color Palette Selection: Choosing appropriate sequential or diverging color schemes that accurately represent the data while considering color vision deficiencies [10]

- Layout Integration: Positioning dendrograms along the top and/or left sides of the heatmap to clearly associate branches with corresponding rows and columns [4]

- Interactive Features: Implementing zooming, filtering, and tooltips to facilitate exploration of large datasets

Recent advancements in visualization tools have introduced enhanced features such as:

- Group Separation: Visually distinguishing clusters identified by the dendrogram through spacing or borders, improving clarity and interpretation [4]

- Annotation Bars: Adding color-coded annotations alongside the heatmap to represent categorical variables (e.g., patient groups, experimental conditions) that may correlate with observed patterns [4]

- Circular Layouts: Arranging the heatmap in a circular format to efficiently utilize space and emphasize patterns in large datasets [9]

Experimental Protocols and Workflows

Standard Protocol for Heatmap with Dendrogram Creation

The following workflow diagram illustrates the end-to-end process for creating a clustered heatmap visualization:

Figure 1: Workflow for creating heatmaps with dendrograms, showing the sequential process from raw data to final interpretation.

The detailed methodology for each step includes:

Data Preprocessing: Load dataset and apply appropriate normalization. For gene expression data, this typically involves log2 transformation of counts followed by Z-score standardization across samples [9].

Distance Matrix Calculation: Compute pairwise distances using a selected metric. The choice of distance metric should reflect the biological question—Euclidean distance for magnitude differences, correlation distance for pattern similarity.

Hierarchical Clustering: Apply clustering algorithm using the computed distance matrix and a selected linkage method. Ward's linkage often produces more balanced clusters for biological data.

Dendrogram Construction: Generate the tree structure from clustering results, determining cut points for cluster identification.

Heatmap Rendering: Map normalized values to colors using an appropriate palette, with row and column ordering determined by the dendrogram structure.

Visual Integration: Combine heatmap and dendrograms in a single plot, adding annotations and labels for interpretation.
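The six steps above can be sketched end to end as follows. The computation uses NumPy and SciPy. The optional `render` helper assumes Matplotlib and seaborn are installed and passes the precomputed trees to `seaborn.clustermap`. The function names, the `+ 1.0` pseudocount, the output path, and the synthetic counts are all assumptions for illustration.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage
from scipy.spatial.distance import pdist

def prepare(counts):
    """Data preprocessing: log2-transform counts, then z-score each gene across samples."""
    logged = np.log2(np.asarray(counts, dtype=float) + 1.0)
    centered = logged - logged.mean(axis=1, keepdims=True)
    return centered / logged.std(axis=1, ddof=1, keepdims=True)

def cluster(matrix, metric="correlation", method="average"):
    """Distance matrix calculation between rows, then hierarchical clustering."""
    return linkage(pdist(matrix, metric=metric), method=method)

def render(matrix, row_linkage, col_linkage, path="cluster_heatmap.png"):
    """Heatmap rendering and visual integration (needs matplotlib and seaborn)."""
    import matplotlib
    matplotlib.use("Agg")  # write to file without opening a window
    import seaborn as sns

    grid = sns.clustermap(matrix, row_linkage=row_linkage,
                          col_linkage=col_linkage, cmap="RdBu_r", center=0.0)
    grid.savefig(path)

rng = np.random.default_rng(42)
# Synthetic counts: 20 genes x 6 samples with two opposing expression programs.
counts = rng.poisson(50, size=(20, 6)).astype(float)
counts[:10, :3] *= 8   # first 10 genes are high in samples 0-2
counts[10:, 3:] *= 8   # last 10 genes are high in samples 3-5

z = prepare(counts)
row_linkage = cluster(z)
col_linkage = cluster(z.T)
# Dendrogram construction: cut the gene tree into two clusters.
gene_clusters = fcluster(row_linkage, t=2, criterion="maxclust")
print(gene_clusters)
```

Calling `render(z, row_linkage, col_linkage)` would write the combined figure to disk. Passing precomputed linkages keeps the plotted dendrograms identical to the trees used for cluster assignment.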

Case Study: Healthcare Implementation Research

A practical application of matrix heat mapping in implementation science demonstrates the real-world utility of this approach. Researchers used combined visualization to analyze qualitative data from 66 stakeholder interviews across nine healthcare organizations implementing universal tumor screening programs [12]. The following diagram illustrates their analytical workflow:

Figure 2: Analytical workflow for matrix heat mapping in implementation science research.

This case study exemplifies how the heatmap-dendrogram approach can be adapted for qualitative data in implementation science. Researchers created visual representations of protocols to compare processes and score optimization components, then used color-coded matrices to systematically summarize and consolidate contextual data using the Consolidated Framework for Implementation Research (CFIR) [12]. The combined scores were visualized in a final data matrix heat map that revealed patterns of contextual factors across optimized programs, non-optimized programs, and organizations with no program.

The methodological approach included:

Process Mapping: Creating visual diagrams of each organization's protocol to identify gaps and inefficiencies, which helped define five process optimization components used to quantify program implementation on a scale from 0 (no program) to 5 (optimized) [12].

Data Matrix Heat Mapping: Using color-coded matrices to systematically represent qualitative data, enabling consolidation of vast amounts of information from multiple stakeholders and identification of patterns across programs [12].

This combined approach provided a systematic and transparent method for understanding complex organizational heterogeneity prior to formal analysis, introducing a novel stepwise approach to data consolidation and factor selection in implementation science [12].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Packages for Heatmap Visualization

| Tool/Package | Application Context | Key Features | Implementation Considerations |
|---|---|---|---|
| Origin 2025b | General scientific data analysis | Integrated heatmap with dendrogram; Grouping visualization; Color bar annotations | Directly accessible from plot menu; Enhanced cluster separation features [4] |
| R circlize package | Genomics, large dataset visualization | Circular layout; Flexible annotation systems; Hierarchical clustering integration | Efficient for large datasets; Steep learning curve; High customization [9] |
| Matrix Heat Mapping | Qualitative implementation research | CFIR framework integration; Cross-organization comparison; Process optimization scoring | Requires manual coding; Effective for qualitative data consolidation [12] |
| Clustered Heatmaps | Biological sciences, gene expression | Row/column clustering; Multiple distance metrics; Annotation tracks | Computational intensity increases with data size; Requires normalization [9] |

Advanced Applications in Research

Circular Heatmaps for Genomic Data

Circular heatmaps represent an advanced variation that provides unique advantages for certain research applications. The circular layout efficiently utilizes space and allows visualization of larger datasets while maintaining the hierarchical relationships shown through dendrograms [9]. In cancer research, circular heatmaps have been employed to show the expression of genes and proteins across patient samples, with the circular arrangement helping researchers quickly identify the strongest or most relevant results [9].

The implementation of circular heatmaps typically utilizes specialized packages such as the circlize package in R, which provides a framework to circularize multiple user-defined graphics functions for data visualization [9]. This approach has proven particularly valuable when studying similarities in gene expression across individuals, where it helps biologists quickly grasp the level of gene activity across patients through color coding while simultaneously identifying genes with similar activity patterns through clustering [9].

Matrix Heat Mapping in Implementation Science

The adaptation of heatmap principles for qualitative data analysis in implementation science represents another advanced application. In the IMPULSS study, researchers developed a "data matrix heat mapping" approach that combined traditional qualitative analysis with color-coded visualizations to understand factors affecting implementation of universal tumor screening programs across healthcare systems [12].

This methodology enabled researchers to:

- Consolidate vast qualitative data from 66 stakeholder interviews into visually accessible formats

- Identify implementation patterns across optimized and non-optimized programs

- Select relevant contextual factors for further analysis through comparative methods

- Reconcile stakeholder inconsistencies by visually representing protocol variations [12]

The success of this approach in implementation science suggests potential applications in other research domains where researchers must synthesize complex qualitative or mixed-methods data alongside quantitative measurements.

Technical Considerations and Best Practices

Computational Resource Management

The implementation of heatmaps with dendrograms, particularly for large datasets, requires careful attention to computational resources. As noted by NCI researchers, "rendering a circular layout with hierarchical clustering can be a slow and memory-intensive task for most computers" [9]. Key considerations include:

- Data Size Assessment: Evaluating whether local computational resources are adequate or whether high-performance computing resources (such as NIH's Biowulf cluster) should be used for large datasets [9]

- Algorithm Efficiency: Selecting appropriate algorithms that balance computational efficiency with analytical needs

- Progressive Visualization: Implementing interactive features that enable working with large datasets without requiring full re-rendering

For particularly large datasets, such as those encountered in genomics research, dimension reduction techniques prior to heatmap visualization may be necessary to ensure computational feasibility while maintaining biological relevance.

Color Selection and Accessibility

The effectiveness of heatmap visualization depends critically on appropriate color selection. Best practices include:

- Sequential vs. Diverging Palettes: Using sequential palettes for data that progresses from low to high values, and diverging palettes for data with a critical midpoint (such as zero) [10]

- Color Contrast Compliance: Ensuring sufficient contrast between adjacent colors and between text labels and their backgrounds, following WCAG guidelines of at least 4.5:1 for normal text and 3:1 for large text [13] [14]

- Color Vision Deficiency Considerations: Selecting palettes that remain distinguishable for individuals with various forms of color blindness

- Legend Inclusion: Always providing a clear legend that shows how colors map to data values, as "color on its own has no inherent association with value" [11]

Accessibility considerations are particularly important in research contexts where findings may need to be interpreted by diverse teams or included in publications with specific accessibility requirements.
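The contrast thresholds cited above can be checked programmatically. The following is a minimal sketch of the WCAG 2.x contrast-ratio calculation for 8-bit sRGB colors; the function names are illustrative and not taken from any of the cited tools.

```python
def _linearize(channel_8bit):
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) tuple with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(rgb_a, rgb_b):
    """Contrast ratio between two colors, from 1 (identical) to 21."""
    lum_a, lum_b = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def passes_wcag_aa(text_rgb, background_rgb, large_text=False):
    """Apply the 4.5:1 (normal text) or 3:1 (large text) threshold."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(text_rgb, background_rgb) >= threshold
```

A check of this kind is most useful for cell labels drawn on top of heatmap colors, where a label color that is readable on the light end of a palette may fail on the dark end.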

Validation and Interpretation

The interpretive nature of cluster analysis necessitates careful validation approaches:

- Cluster Stability Assessment: Using techniques such as bootstrapping to evaluate the robustness of identified clusters

- Multiple Metric Evaluation: Comparing results across different distance metrics and linkage methods to ensure patterns are not artifacts of a particular algorithmic choice

- Biological/Contextual Validation: Grounding interpretations in domain knowledge rather than relying solely on statistical patterns

- Annotation Integration: Incorporating relevant metadata through color bars or other annotations to facilitate pattern interpretation [4]

These validation approaches help ensure that the patterns revealed through heatmap-dendrogram visualizations represent meaningful biological or experimental phenomena rather than computational artifacts.
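The "multiple metric evaluation" idea can be sketched with SciPy's hierarchical clustering functions. The example below is an illustration on synthetic data, not a procedure from the cited sources: it clusters the same matrix with several linkage methods and reports the cophenetic correlation and the two-cluster assignment for each. The helper name `compare_linkages` and the toy data are assumptions made for the example.

```python
import numpy as np
from scipy.cluster.hierarchy import cophenet, fcluster, linkage
from scipy.spatial.distance import pdist


def compare_linkages(data, metric="euclidean",
                     methods=("single", "complete", "average"),
                     n_clusters=2):
    """Cluster `data` (rows = observations) with several linkage methods.

    Returns {method: (cophenetic_correlation, cluster_labels)}.
    """
    distances = pdist(data, metric=metric)  # condensed pairwise distances
    results = {}
    for method in methods:
        tree = linkage(distances, method=method)
        coph_corr, _ = cophenet(tree, distances)
        labels = fcluster(tree, t=n_clusters, criterion="maxclust")
        results[method] = (float(coph_corr), labels)
    return results


# Synthetic example: two well-separated groups of ten samples each.
rng = np.random.default_rng(0)
group_a = rng.normal(loc=0.0, scale=0.5, size=(10, 4))
group_b = rng.normal(loc=5.0, scale=0.5, size=(10, 4))
toy = np.vstack([group_a, group_b])

summary = compare_linkages(toy)
for method, (coph_corr, labels) in summary.items():
    print(method, round(coph_corr, 3), labels)
```

If the group assignments agree across methods, as they should for clearly separated data, the pattern is less likely to be an artifact of one algorithmic choice. Disagreement is a signal to inspect the data more closely before interpreting any single dendrogram.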

The synergistic combination of heatmaps with dendrograms represents a powerful paradigm for exploratory data analysis across multiple research domains, from genomics to implementation science. This integrated approach enables researchers to transform complex, high-dimensional datasets into intelligible visual patterns that reveal underlying structures and relationships. By leveraging both color intensity and hierarchical grouping, these visualizations facilitate pattern recognition that might remain hidden in traditional numerical representations.

These techniques continue to evolve through circular layouts, enhanced grouping features, and applications to qualitative data. Effective use still depends on careful data preprocessing, adequate computational resources, accessible color choices, and validation of the resulting clusters. Applied with that care, heatmaps with dendrograms support discovery in fields ranging from basic biology to drug development and healthcare implementation.

Cluster heatmaps with dendrograms are powerful graphical representations that combine a color-based heatmap with hierarchical clustering, enabling researchers to uncover patterns in complex biological data. The heatmap uses color gradients to display data intensity, while the dendrograms positioned along the top and/or side illustrate similarity and grouping of rows and columns based on statistical algorithms [4]. This visualization approach allows investigators to find patterns from large data matrices that would otherwise be difficult to detect, making it particularly valuable for analyzing gene expression measurements, patient stratification, and drug response signatures [15]. In contemporary biomedical research, these methods have become indispensable for translating raw molecular data into biologically meaningful insights, especially in the fields of transcriptomics, precision oncology, and personalized medicine [16] [17].

The fundamental strength of this approach lies in its ability to simultaneously visualize both the individual data points and the hierarchical clustering structure, enabling researchers to identify natural groupings in their data without prior assumptions about the number or composition of clusters. This unsupervised discovery process has proven particularly valuable for uncovering novel biological relationships that might not be apparent through hypothesis-driven analyses alone [1]. As the volume and complexity of biological data continue to grow, sophisticated clustering methodologies have evolved to address the challenges of analyzing high-dimensional datasets while providing intuitive visual interpretations of the results.

Methodological Approaches and Experimental Protocols

Gene Expression Clustering for Drug Response Signatures

Protocol: Gene Clustering to Identify Drug-Specific Survival Patterns

Data Acquisition and Preprocessing: Acquire RNA-seq data from pre-treatment patient samples. For the study cited, data from 10,237 patients across 33 cancer types from The Cancer Genome Atlas (TCGA) were used. The gene expression data (58,364 genes) were binarized using the StepMiner algorithm, which fits a step function to ordered expression values by testing multiple thresholds and selecting the one that minimizes the mean square error within high and low subsets [16].

Clustering Implementation: Apply co-occurrence clustering to the binarized gene expression data. This iterative bi-clustering method constructs a gene-gene graph based on chi-square pairwise association and uses the Louvain algorithm to identify clusters of genes that tend to be co-expressed across patient subsets. The algorithm recursively clusters genes based on expression patterns across various patient subsets in the dataset [16].

Survival Analysis Integration: For each identified gene cluster, perform survival analysis on patients treated with specific drugs. Stratify patients based on how many of the cluster's genes they express. To establish drug-specific effects, repeat the same survival test in patients who did not receive the drug, ensuring observed survival differences are specifically linked to the treatment rather than general cancer prognosis [16].

Biological Validation: Investigate clusters showing drug-specific survival differences using overrepresentation analysis to identify common features such as shared regulatory elements or transcription factors. Perform additional drug-specific survival analyses to verify drug-cluster-transcription factor target relationships [16].
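The binarization step in the protocol above (fitting a step function to ordered expression values and choosing the threshold that minimizes the squared error within the low and high subsets) can be sketched as follows. This is a simplified illustration of the idea, not the published StepMiner implementation. Placing the threshold midway between the two fitted means is an assumption made here, and the function names are hypothetical.

```python
def step_threshold(values):
    """Fit a two-level step function to the sorted values.

    Tries every split of the ordered values into a low and a high subset
    and keeps the split with the smallest total squared error around the
    two subset means. Returns (threshold, sum_of_squared_errors).
    """
    ordered = sorted(values)
    n = len(ordered)
    if n < 2:
        raise ValueError("need at least two values to fit a step")

    best_sse, best_threshold = None, None
    for split in range(1, n):
        low, high = ordered[:split], ordered[split:]
        mean_low = sum(low) / len(low)
        mean_high = sum(high) / len(high)
        sse = (sum((v - mean_low) ** 2 for v in low)
               + sum((v - mean_high) ** 2 for v in high))
        if best_sse is None or sse < best_sse:
            best_sse = sse
            # Assumption: threshold is the midpoint of the two step levels.
            best_threshold = (mean_low + mean_high) / 2.0
    return best_threshold, best_sse


def binarize(values):
    """Label each value 1 (high) or 0 (low) relative to the fitted threshold."""
    threshold, _ = step_threshold(values)
    return [1 if v > threshold else 0 for v in values]


expression = [1.0, 1.2, 0.9, 8.0, 8.3, 7.9]  # one gene across six samples
print(binarize(expression))
```

In practice this would be applied gene by gene, so that each row of the expression matrix becomes a vector of high/low calls before the co-occurrence clustering step.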

Table 1: Cancer Cohorts and Analytical Scope from TCGA Study

| Cancer Type | TCGA Abbreviation | Patient Count | Gene Clusters Identified | Drugs Analyzed |
|---|---|---|---|---|
| Breast Invasive Carcinoma | BRCA | 1,069 | 165 | 15 |
| Lung Adenocarcinoma | LUAD | 500 | 98 | 8 |
| Glioblastoma Multiforme | GBM | 143 | 33 | 3 |
| Colon Adenocarcinoma | COAD | 446 | 156 | 6 |
| Brain Lower Grade Glioma | LGG | 498 | 63 | 5 |
| Liver Hepatocellular Carcinoma | LIHC | 368 | 52 | 1 |

Genetic Liability Profiling for Patient Stratification

Protocol: CASTom-iGEx Framework for Patient Stratification

Gene Expression Imputation: Predict tissue-specific gene expression profiles from individual-level genotype data using biologically meaningful sets of common variants. The PriLer method (a modified elastic-net approach) can be trained on reference datasets from GTEx and the CommonMind Consortium across multiple tissues (34 tissues in the cited study) [17].

T-Score Transformation: Convert patient-level imputed gene expression values to T-scores for each gene and tissue. This quantifies the deviation of gene expression in each patient relative to a reference population of healthy individuals, ensuring similar distribution of expression values across samples for each gene [17].

Disease Association Weighting: Weight the contribution of each gene in clustering according to its relevance for the disease phenotype through tissue-specific transcriptome-wide association studies (TWAS). Weight individual-level gene T-scores by the disease gene Z-statistics to derive weighted expression values incorporating disease association strength [17].

Unsupervised Clustering: Apply Leiden clustering for community detection to partition patients into distinct subgroups using empirically optimized hyperparameters. Perform clustering for each tissue separately while correcting for ancestry contribution and other covariates to minimize confounding effects [17].

Validation and Generalization: Project imputed gene-level score profiles from independent cohorts onto the discovered clustering structure to evaluate reproducibility. Compare the resulting stratification against traditional polygenic risk score (PRS) based groupings to assess added value [17].

Diagram 1: CASTom-iGEx Workflow for Patient Stratification. This diagram illustrates the sequential process from genetic data to clinically validated patient subgroups, highlighting key analytical steps including imputation, transformation, and clustering.

Key Applications and Findings

Transcriptomic Patterns in Drug Response

The application of gene clustering to transcriptomic data has revealed specific patterns related to patient drug response. In one comprehensive analysis, gene clusters whose expression correlated with drug-specific survival were identified and subsequently investigated for biological meaning. This approach implicated specific transcription factors in treatment response mechanisms: stem cell-related transcription factors HOXB4 and SALL4 were associated with poor response to temozolomide in brain cancers, while expression of SNRNP70 and its targets was implicated in cetuximab response across three different analyses [16]. Additionally, evidence suggested that cancer-related chromosomal structural changes may impact drug efficacy, providing potential mechanistic explanations for treatment variability.

The biological interpretation of these computationally derived gene clusters has proven particularly valuable for generating testable hypotheses about drug resistance mechanisms. By moving beyond mere pattern recognition to biological validation, researchers have transformed clustering results into insights about specific molecular pathways affecting therapeutic outcomes. This approach exemplifies how unsupervised learning methods can generate biologically meaningful insights when integrated with appropriate validation frameworks and domain expertise.

Patient Stratification in Complex Diseases

The CASTom-iGEx approach has demonstrated significant utility in stratifying patients with complex diseases based on the aggregated impact of their genetic risk factor profiles on tissue-specific gene expression. When applied to coronary artery disease (CAD), this methodology identified between 3 and 10 distinct patient subgroups across different tissues that showed consistent patterns across independent cohorts [17]. These subgroups exhibited differences in intermediate phenotypes and clinical outcome parameters, suggesting they represent biologically distinct forms of the disease.

Table 2: Comparison of Stratification Approaches in CAD Analysis

| Feature | CASTom-iGEx Approach | Traditional PRS Approach |
|---|---|---|
| Basis of Stratification | Aggregated impact on tissue-specific gene expression | Summed effect of risk alleles |
| Number of Groups | 3-10 (tissue-dependent) | 4 (quartile-based) |
| Biological Interpretation | Directly interpretable via gene expression patterns | Agnostic of biological mechanisms |
| Clinical Relevance | Distinguished by endophenotypes and outcomes | Mainly distinguishes risk levels |
| Reproducibility | High across independent cohorts | Variable depending on population |

In contrast to PRS-based stratification, which primarily categorizes patients by overall genetic risk burden, the CASTom-iGEx approach reveals how complex genetic liabilities converge onto distinct disease-relevant biological processes. This supports the concept of different patient "biotypes" characterized by partially distinct pathomechanisms, with important implications for developing targeted treatment strategies [17].

Essential Research Tools and Implementation

Software and Computational Tools

DendroX for Interactive Cluster Selection: DendroX is a web application that provides interactive visualization of dendrograms, enabling researchers to divide dendrograms at any level and select multiple clusters across different branches [1]. The tool solves the problem of matching visually and computationally determined clusters in a cluster heatmap and helps users navigate among different parts of a dendrogram. It accepts input generated from R or Python clustering functions and provides helper functions to extract linkage matrices from cluster heatmap objects in these environments [1].

Origin 2025b with Enhanced Heatmap Features: Origin 2025b includes a built-in heatmap with dendrogram plot type, accessible directly from the Plot menu. It supports grouped heatmaps and color bars that represent categorical information alongside the heatmap [4].

NCSS for Statistical Heatmap Generation: NCSS software provides comprehensive clustered heat map (double dendrogram) capabilities with eight possible hierarchical clustering algorithms, allowing different methods for rows and columns and enabling investigators to find patterns in large data matrices [15].

Table 3: Research Reagent Solutions for Clustering Analysis

| Resource/Tool | Type | Primary Function | Implementation |
|---|---|---|---|
| TCGA Database | Data Resource | Provides pre-treatment gene expression and clinical data | Access via Genomic Data Commons (GDC) API and Data Transfer Tool |
| GTEx Reference | Data Resource | Tissue-specific gene expression reference for imputation | Download from GTEx Portal for training prediction models |
| Co-occurrence Clustering | Algorithm | Identifies co-expressed gene clusters in binarized data | Implemented in Python based on chi-square association and Louvain algorithm |
| PriLer Method | Algorithm | Predicts gene expression from genotype data | Modified elastic-net approach for tissue-specific imputation |
| DendroX | Software | Interactive dendrogram visualization and cluster selection | Web app using D3 library for visualization; R/Python helper functions |

Diagram 2: Research Tool Ecosystem for Clustering Analysis. This diagram categorizes essential resources and tools for conducting comprehensive clustering analyses, from data acquisition through visualization.

Clustering methodologies applied to biological data have evolved from simple pattern recognition tools to sophisticated frameworks capable of stratifying patients and predicting therapeutic responses. The integration of heatmap visualization with dendrogram representation provides an intuitive yet powerful approach to interpreting high-dimensional biological data, enabling researchers to translate complex genetic and transcriptomic profiles into clinically actionable insights [4] [16] [17]. As these methods continue to develop with enhanced interactive capabilities and more biologically informed algorithms, they promise to play an increasingly important role in personalized medicine and drug development pipelines.

The demonstrated applications in gene expression analysis and patient stratification highlight how these computational approaches can bridge the gap between genetic associations and biological mechanisms. By enabling unbiased discovery of patient subgroups with distinct pathophysiological characteristics and treatment responses, clustering methodologies provide a foundation for developing more targeted therapeutic strategies and advancing precision medicine. Future developments will likely focus on integrating multiple data types, improving computational efficiency for increasingly large datasets, and enhancing visualization capabilities for more intuitive interpretation of complex biological patterns.

Dendrograms, or tree-like diagrams, serve as fundamental tools for visualizing hierarchical relationships and clustering results across various scientific disciplines, including computational biology and drug development. This technical guide provides an in-depth examination of dendrogram structures, with a specific focus on the critical interpretation of branch lengths and node heights. These elements are not merely visual components but quantitative representations of dissimilarity between data clusters. Within the broader context of heatmap research, dendrograms provide the structural framework that organizes rows and columns, revealing patterns and relationships that might otherwise remain hidden in complex datasets. For researchers and scientists, mastering the interpretation of these features is essential for accurate cluster analysis, valid biological conclusions, and informed decision-making in fields like drug discovery and patient stratification.

A dendrogram is a tree-like diagram that visualizes the results of hierarchical clustering, an unsupervised learning method that groups similar data points based on their characteristics [3]. Unlike flat clustering methods, hierarchical clustering creates a nested structure of clusters, providing insights not only into which data points belong together but also how close or far apart different groups are in terms of similarity [3]. This visualization is particularly valuable in fields where understanding nested relationships and varying levels of granularity in data is essential, such as in exploratory data analysis or when dealing with complex datasets that don't fit neatly into a fixed number of clusters [3].

In the context of heatmap research, dendrograms are frequently integrated as adjacent tree-like structures that provide a visual summary of the relationships within the data [18]. This combination, known as a clustered heat map, allows researchers to simultaneously observe data values (represented as colors in the heatmap) and the hierarchical clustering of both rows and columns (represented by the dendrograms) [18]. The construction of these integrated visualizations involves organizing data into a matrix format, normalizing or standardizing values, choosing appropriate distance metrics, applying hierarchical clustering, and finally visualizing the matrix as a heat map with integrated dendrograms [18].

Mathematical Foundations

The structural interpretation of dendrograms is deeply rooted in mathematical concepts of distance and linkage. The choice of both distance metric and linkage criterion fundamentally shapes the dendrogram's architecture and consequently influences biological interpretation.

Distance Metrics

Distance metrics quantify the dissimilarity between individual data points, forming the foundation upon which clusters are built [3].

Table 1: Common Distance Metrics in Hierarchical Clustering

| Metric Name | Mathematical Formula | Typical Use Cases |
|---|---|---|
| Euclidean Distance | d(x,y) = √(∑ᵢ (xᵢ − yᵢ)²) | Continuous, normally distributed data; sensitive to scale [3]. |
| Manhattan Distance | d(x,y) = ∑ᵢ ∣xᵢ − yᵢ∣ | Grid-like or high-dimensional sparse data (e.g., text features) [3]. |
| Cosine Distance | d(x,y) = 1 − cos(θ), where cos(θ) = x⋅y / (∥x∥∥y∥) is the cosine similarity | Text or document clustering where magnitude is irrelevant [3]. |
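To make the formulas in the table concrete, here is a small sketch in plain Python. The cosine similarity is converted to a dissimilarity as 1 − cos(θ), which is the usual convention when it is used for clustering. The vectors `gene_a` and `gene_b` are made-up example profiles.

```python
import math


def euclidean(x, y):
    """Square root of the summed squared coordinate differences."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def manhattan(x, y):
    """Sum of absolute coordinate differences."""
    return sum(abs(a - b) for a, b in zip(x, y))


def cosine_similarity(x, y):
    """Cosine of the angle between x and y (undefined for zero vectors)."""
    dot = sum(a * b for a, b in zip(x, y))
    norm_x = math.sqrt(sum(a * a for a in x))
    norm_y = math.sqrt(sum(b * b for b in y))
    return dot / (norm_x * norm_y)


def cosine_distance(x, y):
    """Dissimilarity derived from cosine similarity."""
    return 1.0 - cosine_similarity(x, y)


gene_a = [1.0, 2.0, 3.0]
gene_b = [2.0, 4.0, 6.0]  # same profile shape as gene_a, twice the magnitude

print(euclidean(gene_a, gene_b))
print(manhattan(gene_a, gene_b))
print(cosine_distance(gene_a, gene_b))
```

The two example profiles differ in magnitude but not in shape, so the Euclidean and Manhattan distances are clearly nonzero while the cosine distance is zero up to floating-point rounding. This is why the choice of metric should follow from whether absolute level or pattern is the quantity of interest.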

Linkage Criteria

Linkage criteria determine how the distance between clusters (sets of points) is calculated once individual point distances are known [3]. This choice directly affects the dendrogram's branching pattern.

Table 2: Common Linkage Criteria and Their Effects

| Linkage Method | Mathematical Definition | Effect on Cluster Formation |
|---|---|---|
| Single Linkage | d(A,B) = min d(a,b) over a∈A, b∈B | Promotes "chaining," can handle non-spherical shapes but is sensitive to noise [3]. |
| Complete Linkage | d(A,B) = max d(a,b) over a∈A, b∈B | Produces compact, spherical clusters; sensitive to outliers [3]. |
| Average Linkage | d(A,B) = (1/(∣A∣∣B∣)) ∑∑ d(a,b) over a∈A, b∈B | A balanced approach, less prone to extremes than single or complete [3]. |
| Ward's Method | d(A,B) = √[(∣A∣∣B∣ / (∣A∣+∣B∣)) ∥μA−μB∥²] | Minimizes within-cluster variance; often yields interpretable dendrograms [3]. |
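The linkage definitions in the table can be evaluated directly for two small clusters. The sketch below uses Euclidean point distances and the Ward form exactly as written in the table; library implementations may scale Ward's distance differently. The clusters are made-up coordinates.

```python
import math
from itertools import product


def pairwise(cluster_a, cluster_b):
    """All Euclidean distances between a point in A and a point in B."""
    return [math.dist(a, b) for a, b in product(cluster_a, cluster_b)]


def single_linkage(cluster_a, cluster_b):
    return min(pairwise(cluster_a, cluster_b))


def complete_linkage(cluster_a, cluster_b):
    return max(pairwise(cluster_a, cluster_b))


def average_linkage(cluster_a, cluster_b):
    distances = pairwise(cluster_a, cluster_b)
    return sum(distances) / len(distances)


def centroid(cluster):
    """Coordinate-wise mean of the points in a cluster."""
    return tuple(sum(coords) / len(cluster) for coords in zip(*cluster))


def ward_distance(cluster_a, cluster_b):
    """Ward distance in the form given in the table above."""
    size_term = len(cluster_a) * len(cluster_b) / (len(cluster_a) + len(cluster_b))
    gap = math.dist(centroid(cluster_a), centroid(cluster_b))
    return math.sqrt(size_term * gap ** 2)


cluster_a = [(0.0, 0.0), (0.0, 1.0)]
cluster_b = [(3.0, 0.0), (4.0, 0.0)]

for name, func in [("single", single_linkage), ("complete", complete_linkage),
                   ("average", average_linkage), ("ward", ward_distance)]:
    print(name, round(func(cluster_a, cluster_b), 4))
```

For any pair of clusters the single-linkage value is the smallest of the three pairwise-based criteria and the complete-linkage value the largest, with average linkage between them, which is one way to see why single linkage merges elongated chains early while complete linkage delays merges involving outliers.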

Interpreting Dendrogram Structures

Core Elements and Terminology

A dendrogram consists of several key elements that must be understood for accurate interpretation [19]:

- Leaves (Terminal Nodes): Represent individual data points at the bottom of the tree [3] [19].

- Root Node: The topmost node representing the entire dataset where all branches converge [19].

- Branches: Lines connecting nodes; their vertical length indicates the dissimilarity between connected clusters [3] [19].

- Internal Nodes: Represent points where clusters merge, with height indicating the dissimilarity at which the merge occurs [19].

The Significance of Branch Lengths and Node Heights

The vertical axis in a dendrogram represents the distance or dissimilarity at which clusters merge [3]. This is the most critical dimension for interpretation:

- Low Merge Height = High Similarity: Clusters that merge at lower heights are more similar to each other [3]. The early grouping of data points indicates they share characteristics that distinguish them as a cohesive unit.

- High Merge Height = Low Similarity: Clusters that only merge near the top of the dendrogram are more distinct from each other [3]. The high dissimilarity threshold required for their merger underscores their fundamental differences.

The horizontal axis in a dendrogram only arranges the leaves for clear visualization and carries no quantitative meaning. The two branches below any node can be swapped without changing the hierarchical relationships, so two leaves sitting next to each other on this axis are not necessarily similar; only the vertical merge heights are fixed and meaningful [3].

Methodological Framework for Dendrogram Analysis

Standardized Workflow for Hierarchical Clustering

Implementing a consistent methodological approach ensures reproducible and interpretable dendrogram results, particularly when integrated with heatmap visualization as commonly practiced in genomic and biomedical research [18].

Determining the Number of Clusters

Unlike partitioning methods that need the number of clusters specified in advance, hierarchical clustering does not require a predetermined number of clusters. The dendrogram itself provides visual guidance for this decision through the "cutting" approach [3]. Imagine drawing a horizontal line across the dendrogram at a chosen height: the number of vertical lines it intersects is the number of clusters at that dissimilarity level [3]. Good cut points are often found where large jumps in merge height occur, indicating natural separations between clusters [3].
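The cutting rule can be expressed with nothing more than the list of merge heights. The sketch below assumes merge heights that never decrease as the tree is built (true for single, complete, average, and Ward linkage); the heights are invented for illustration and the function names are hypothetical.

```python
def clusters_at_cut(merge_heights, cut_height):
    """Number of clusters obtained by cutting the tree at `cut_height`.

    A tree over n leaves has n - 1 merges; every merge at or below the
    cut joins two clusters, reducing the count by one.
    """
    n_leaves = len(merge_heights) + 1
    merges_below = sum(1 for h in merge_heights if h <= cut_height)
    return n_leaves - merges_below


def largest_gap_cut(merge_heights):
    """Cut height placed in the middle of the largest jump in merge height.

    If several jumps tie, the one highest in the tree is used.
    """
    heights = sorted(merge_heights)
    if len(heights) < 2:
        raise ValueError("need at least two merges to look for a gap")
    gaps = [(heights[i + 1] - heights[i], i) for i in range(len(heights) - 1)]
    _, idx = max(gaps)
    return (heights[idx] + heights[idx + 1]) / 2.0


# Invented merge heights for a tree over six samples.
heights = [0.4, 0.5, 0.7, 0.9, 4.2]
cut = largest_gap_cut(heights)
print(cut, clusters_at_cut(heights, cut))
```

The largest-jump heuristic is only a starting point. When several jumps are of similar size, the choice between them is better made with the validation measures discussed in the next section and with domain knowledge.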

Advanced Interpretation and Validation Techniques

Quantitative Validation Methods

While visual inspection of branch lengths provides initial insights, robust interpretation requires quantitative validation:

- Cophenetic Correlation Coefficient (CPCC): Measures how faithfully the dendrogram preserves the original pairwise distances between data points. Values closer to 1.0 indicate better representation.

- Inconsistency Coefficient: Quantifies the sharpness of a cluster merge by comparing its height with the mean and standard deviation of the merge heights beneath it in the tree. Large values suggest natural cluster boundaries [3].

- Silhouette Score: Evaluates cluster quality after cutting by measuring how similar each point is to its own cluster compared to other clusters [3].
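As an illustration of the last measure, the silhouette score can be computed from its definition in a few lines. This is a minimal sketch using Euclidean distance; scoring singleton clusters as 0 is a common convention adopted here as an assumption, and the example points and labels are made up.

```python
import math


def silhouette_score(points, labels):
    """Mean silhouette over all points, using Euclidean distance."""
    clusters = {}
    for idx, label in enumerate(labels):
        clusters.setdefault(label, []).append(idx)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")

    scores = []
    for i, label in enumerate(labels):
        own = [j for j in clusters[label] if j != i]
        if not own:
            scores.append(0.0)  # assumption: singleton clusters score 0
            continue
        # a: mean distance to the other members of the point's own cluster
        a = sum(math.dist(points[i], points[j]) for j in own) / len(own)
        # b: smallest mean distance to the members of any other cluster
        b = min(
            sum(math.dist(points[i], points[j]) for j in members) / len(members)
            for other, members in clusters.items() if other != label
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return sum(scores) / len(scores)


points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
good_labels = [0, 0, 0, 1, 1, 1]   # matches the two visible groups
mixed_labels = [0, 1, 0, 1, 0, 1]  # deliberately scrambled assignment

print(silhouette_score(points, good_labels))
print(silhouette_score(points, mixed_labels))
```

Scores near 1 indicate compact, well-separated clusters, while scores near 0 or below indicate overlapping or misassigned points. Comparing the score across several candidate cut heights gives a quantitative complement to visual inspection of the dendrogram.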

Interpreting Balanced vs. Unbalanced Trees

The overall shape of a dendrogram provides immediate insights into data structure:

- Balanced Dendrograms: Feature relatively uniform branch lengths and symmetrical structure, suggesting homogeneous data with evenly distributed similarities [3].

- Unbalanced Dendrograms: Exhibit substantial variation in branch lengths, potentially indicating outliers, natural group divisions of different sizes, or skewed data distributions [3]. For example, a long, isolated branch might represent an anomaly that doesn't fit well with other points until much higher dissimilarity levels [3].

Dendrograms in Clustered Heatmap Research

Integration with Heatmap Visualization

In biomedical research, dendrograms are most frequently encountered alongside heatmaps in what are termed clustered heat maps (CHMs) [18]. This powerful combination enables simultaneous visualization of data values (through color in the heatmap) and hierarchical relationships (through the dendrogram structure) [18]. The dendrograms reorder the rows and columns of the heatmap based on similarity, grouping together genes with similar expression patterns or samples with similar profiles, thus revealing patterns that might not be apparent in the raw data [18].

Applications in Genomics and Drug Development

Clustered heatmaps with dendrograms have been instrumental in numerous biological breakthroughs:

- Gene Expression Studies: Identifying co-expressed gene clusters and molecular subtypes in cancers, enabling patient stratification for targeted therapies [20] [18].

- Metabolomics and Proteomics: Visualizing abundance patterns of metabolites or proteins across different conditions or disease states to identify potential diagnostic biomarkers [18].

- Pharmacogenomics: Understanding drug response patterns by clustering patients based on genomic profiles, facilitating personalized treatment approaches [18].

Essential Research Reagents and Computational Tools

The generation of dendrograms and clustered heatmaps requires both biological and computational "reagents." The table below details essential tools for conducting such analyses.

Table 3: Essential Research Reagents and Tools for Dendrogram and Heatmap Analysis

| Tool Category | Specific Examples | Function and Application |
|---|---|---|
| Programming Environments | R, Python | Primary platforms for statistical computing and implementation of clustering algorithms [18]. |
| R Packages for Heatmaps | heatmap3, pheatmap, ComplexHeatmap | Generate highly customizable heatmaps with dendrograms; enable statistical testing and advanced annotations [20] [18]. |
| Python Libraries | seaborn (clustermap), scipy (linkage) | Create clustered heatmaps and perform hierarchical clustering with dendrogram visualization [18]. |
| Interactive Platforms | Next-Generation Clustered Heat Maps (NG-CHMs) | Provide dynamic exploration (zooming, panning) of large datasets, surpassing limitations of static heatmaps [18]. |
| Validation Packages | pvclust (R) | Assess cluster robustness through multiscale bootstrap resampling, reporting approximately unbiased (AU) and bootstrap probability (BP) p-values for each cluster [3]. |

Dendrograms provide an indispensable framework for interpreting hierarchical relationships in complex biological data. The interpretation of branch lengths and node heights—representing degrees of similarity and dissimilarity—is fundamental to extracting meaningful patterns from high-dimensional datasets. When integrated with heatmaps, these structures become particularly powerful tools for hypothesis generation and validation in genomics, metabolomics, and drug development research. As computational methods advance, particularly with the development of interactive visualization platforms, the capacity to explore and interpret these hierarchical relationships continues to grow, offering increasingly sophisticated insights into the complex biological systems underlying health and disease.

In the realm of scientific research, particularly in fields utilizing heatmaps and clustering such as genomics, transcriptomics, and drug development, color is far more than an aesthetic choice. It serves as a primary channel for encoding complex numerical data, enabling researchers to discern patterns, identify outliers, and draw meaningful conclusions from high-dimensional datasets. A heatmap is a graphical representation of data where individual values contained in a matrix are represented as colors, providing an intuitive overview of patterns and trends that would be difficult to detect in raw numerical data [2] [21]. When combined with dendrograms—tree-like diagrams that visualize the results of hierarchical clustering—color becomes an indispensable tool for interpreting cluster relationships and data structure [2] [3].

The effectiveness of these visualizations hinges on the thoughtful application of color theory. As highlighted in Rougier et al.'s "Ten Simple Rules for Better Figures," color can be your greatest ally or worst enemy in scientific visualization [22]. Proper use of color highlights critical information and streamlines the flow of complex information, while poor color choices can mislead, obscure, or even misrepresent the underlying data. This technical guide explores the principles of color gradient interpretation within the context of heatmap and dendrogram analysis, providing researchers with methodologies to enhance their data visualization practices.

Theoretical Foundations of Color Schemes

Color palettes in scientific visualization are generally categorized into three distinct types, each suited for representing different kinds of data relationships. Understanding these categories is fundamental to accurate data representation.

Qualitative Palettes

Qualitative palettes utilize distinct hues to represent categorical data with no inherent ordering. These palettes are ideal for differentiating between separate groups or classes, such as experimental conditions, tissue types, or patient cohorts. The key characteristic is the use of colors that are easily distinguishable from one another. For effective qualitative schemes, limit the number of distinct colors to approximately 10 to maintain visual clarity [23]. Example applications include distinguishing different cancer subtypes in a heatmap annotation or identifying various cellular lineages in single-cell RNA sequencing clusters.

Sequential Palettes

Sequential palettes employ a gradient from light to dark values of a single hue (or a progression through multiple hues) to represent ordered data that progresses from low to high values. The perceptual principle is straightforward: lighter colors typically represent lower values, while darker or more saturated colors represent higher values [23]. These palettes are indispensable for representing data intensity in heatmaps, such as gene expression levels (e.g., from low to high expression), protein abundance, or the magnitude of correlation coefficients. Signed quantities with a natural midpoint at zero, including raw correlation coefficients, are usually better served by the diverging palettes described next. The continuity of the gradient allows the eye to easily track changes in magnitude across the visualization.

Diverging Palettes

Diverging palettes are characterized by two distinct hues that diverge from a shared neutral light color, making them ideal for highlighting deviations from a critical midpoint or reference value [22] [23]. Common applications include visualizing data that has a natural central point, such as z-scores (deviations from the mean), fold-changes in expression (upregulated vs. downregulated genes), or percentage changes from a baseline. In these palettes, the neutral central color (often white or light gray) represents the midpoint, while the two contrasting hues (e.g., blue and red) represent opposing deviations in the positive and negative directions.

Table 1: Color Palette Types and Their Applications in Scientific Visualization

| Palette Type | Data Type | Primary Application | Example Colors (Hex Codes) |
|---|---|---|---|
| Qualitative | Categorical, non-ordered groups | Differentiating distinct categories | #1F77B4, #FF7F0E, #2CA02C, #D62728 |
| Sequential | Ordered, continuous data (low to high) | Showing magnitude or intensity | #FFF7EC, #FEE8C8, #FDBB84, #E34A33, #B30000 |
| Diverging | Data with critical midpoint | Highlighting deviation from a reference | #1A9850, #66BD63, #F7F7F7, #F46D43, #D73027 |

Color Gradient Interpretation in Heatmaps and Clustering

Technical Implementation in Heatmap Generation

In computational tools like R's ComplexHeatmap package, color mapping for continuous values is typically handled by a color mapping function. The recommended approach is to use the circlize::colorRamp2() function, which linearly interpolates colors in specified intervals through a defined color space (default is LAB) [24]. This function requires two arguments: a vector of break values and a corresponding vector of colors. This method ensures robust mapping where colors correspond exactly to specific data values, even in the presence of outliers that might otherwise skew the color distribution.

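For example, a diverging color scheme for a gene expression matrix can be defined with a call such as colorRamp2(c(-2, 0, 2), c("blue", "white", "red")), which anchors blue at -2, white at 0, and red at 2.

With this mapping, values between -2 and 2 are linearly interpolated, and values beyond this range are mapped to the extreme colors (blue for < -2, red for > 2) [24]. Because the breaks are fixed rather than derived from the range of each dataset, the same mapping can be reused across multiple heatmaps, which keeps colors consistent and enables direct comparison between different visualizations.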
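The clamped, piecewise-linear mapping described above can also be sketched in Python. This is a minimal illustration, not the circlize implementation: make_color_ramp is a hypothetical helper name, the break values and hex colors are illustrative, and the interpolation is done in plain RGB rather than LAB, so intermediate colors will differ somewhat from those produced by colorRamp2().

```python
def hex_to_rgb(hex_color):
    """Convert '#RRGGBB' to an (r, g, b) tuple of 0-255 integers."""
    h = hex_color.lstrip("#")
    return tuple(int(h[i:i + 2], 16) for i in (0, 2, 4))


def make_color_ramp(breaks, colors):
    """Return a function mapping a number to a hex color.

    Values between breaks are linearly interpolated (in RGB, an
    assumption of this sketch); values outside the range are clamped
    to the first or last color.
    """
    if len(breaks) != len(colors) or len(breaks) < 2:
        raise ValueError("need at least two breaks, one color per break")
    if any(a >= b for a, b in zip(breaks, breaks[1:])):
        raise ValueError("breaks must be strictly increasing")
    rgb = [hex_to_rgb(c) for c in colors]

    def ramp(value):
        if value <= breaks[0]:
            out = rgb[0]
        elif value >= breaks[-1]:
            out = rgb[-1]
        else:
            for i in range(len(breaks) - 1):
                lo, hi = breaks[i], breaks[i + 1]
                if lo <= value <= hi:
                    t = (value - lo) / (hi - lo)
                    out = tuple(round(a + t * (b - a))
                                for a, b in zip(rgb[i], rgb[i + 1]))
                    break
        return "#{:02X}{:02X}{:02X}".format(*out)

    return ramp


# Diverging scheme: blue at -2, white at 0, red at 2
col_fun = make_color_ramp([-2, 0, 2], ["#0000FF", "#FFFFFF", "#FF0000"])

for v in (-5, -2, -1, 0, 1, 2, 7):
    print(v, col_fun(v))
```

Because the breaks are fixed, an outlier such as 7 is simply drawn in the extreme color and does not stretch the scale for the rest of the matrix.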

Color Space Considerations

The choice of color space for interpolation significantly affects the perceptual uniformity of the gradient. The LAB color space is often preferred over RGB for creating sequential palettes because it more closely aligns with human visual perception of color differences [24]. In practical terms, this means that equal steps in data value will correspond to more perceptually equal steps in color change, leading to more accurate interpretation of intensity gradients.
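One way to see why RGB values are a poor guide to perceived intensity is to compute the lightness component (L*) of the LAB space for a few colors. The sketch below uses the standard sRGB-to-CIE conversion with a D65 white point; it covers only L*, not the full LAB transform, and the function name srgb_to_lightness is chosen here for illustration.

```python
def srgb_to_lightness(hex_color):
    """Return CIE L* (0 = black, 100 = white) for an sRGB '#RRGGBB' color."""
    h = hex_color.lstrip("#")
    channels = [int(h[i:i + 2], 16) / 255.0 for i in (0, 2, 4)]
    # Undo the sRGB gamma encoding to get linear-light values
    linear = [c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4
              for c in channels]
    # Relative luminance Y (white point normalized to 1.0)
    y = 0.2126 * linear[0] + 0.7152 * linear[1] + 0.0722 * linear[2]
    # CIE lightness function
    delta = 6.0 / 29.0
    f = y ** (1.0 / 3.0) if y > delta ** 3 else y / (3 * delta ** 2) + 4.0 / 29.0
    return 116.0 * f - 16.0


# Pure blue and pure yellow are both "fully on" in RGB terms,
# yet their perceived lightness differs widely.
for name, color in [("black", "#000000"), ("blue", "#0000FF"),
                    ("yellow", "#FFFF00"), ("white", "#FFFFFF")]:
    print(f"{name:>6}: L* = {srgb_to_lightness(color):.1f}")
```

A gradient that passes through such colors at equal RGB steps therefore changes perceived lightness unevenly, which is the problem that interpolating in LAB is meant to reduce.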

Experimental Protocols for Color Gradient Validation

Methodology for Color Gradient Selection and Testing

Robust heatmap visualization requires systematic validation of color gradient interpretability. The following protocol outlines a comprehensive approach for selecting and validating color schemes in clustering analyses.

Table 2: Essential Research Reagents and Computational Tools for Heatmap Visualization

| Tool/Reagent | Category | Primary Function | Example Applications |
|---|---|---|---|
| R ComplexHeatmap | Software Package | Advanced heatmap visualization | Creating publication-quality heatmaps with annotations [24] |
| ColorBrewer | Color Tool | Accessing tested color palettes | Selecting colorblind-safe sequential/diverging schemes [23] |
| Gower's Distance | Metric | Mixed-data distance calculation | Computing dissimilarity for clinical & genomic data [25] |
| Viridis Palette | Color Scheme | Perceptually uniform colormap | Ensuring accessible gradient interpretation [23] |
| Fastcluster Package | Algorithm | Efficient hierarchical clustering | Accelerating dendrogram generation for large datasets [20] |

Procedure:

- Data Preparation and Preprocessing: Begin with normalized data (e.g., Z-score normalized gene expression counts per million). For mixed-type data (continuous + categorical), select an appropriate dissimilarity measure such as Gower's distance to compute pairwise distances [25].

- Hierarchical Clustering: Perform clustering using a computationally efficient algorithm (e.g., from the fastcluster package) with a linkage method appropriate to the data structure (e.g., Ward's method for compact clusters) [20] [3].

- Color Gradient Application: Apply candidate color gradients to the data matrix using the colorRamp2() function in R, ensuring consistent mapping across all values [24].

- Accessibility Testing: Simulate color vision deficiencies using tools like Color Oracle or Coblis to verify that patterns remain distinguishable for all viewers [22] [23].

- Quantitative Validation: Calculate the cophenetic correlation coefficient to assess how well the dendrogram preserves the original pairwise distances between data points [3].

- Interpretation and Documentation: Record all color mapping parameters, including break points and color codes, to ensure reproducibility across the research team.
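The numerical steps of this procedure (normalization, clustering, and quantitative validation) can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the data are a synthetic toy matrix, SciPy's linkage function stands in for the fastcluster package, and Euclidean distance with Ward's method is used because the toy data are purely continuous. NumPy and SciPy must be installed.

```python
import numpy as np
from scipy.cluster.hierarchy import cophenet, fcluster, linkage
from scipy.spatial.distance import pdist

# Hypothetical toy data: 10 samples x 6 features, two groups of 5 samples
rng = np.random.default_rng(0)
group_a = rng.normal(loc=0.0, scale=0.3, size=(5, 6))
group_b = rng.normal(loc=5.0, scale=0.3, size=(5, 6))
data = np.vstack([group_a, group_b])

# Data preparation: z-score each feature (column) across samples
z = (data - data.mean(axis=0)) / data.std(axis=0, ddof=1)

# Hierarchical clustering: Ward's method on Euclidean distances
dists = pdist(z, metric="euclidean")   # condensed pairwise distances
tree = linkage(z, method="ward")       # (n-1) x 4 linkage matrix

# Quantitative validation: cophenetic correlation coefficient,
# i.e. how well tree heights reproduce the original distances
coph_corr, _ = cophenet(tree, dists)
print(f"Cophenetic correlation: {coph_corr:.3f}")

# Column 2 of the linkage matrix holds the merge heights
print("Merge heights:", np.round(tree[:, 2], 2))

# Cut the tree into two clusters and inspect the assignment
labels = fcluster(tree, t=2, criterion="maxclust")
print("Cluster labels:", labels)
```

A cophenetic correlation close to 1 suggests the dendrogram preserves the pairwise distances well; how high is "high enough" depends on the data and is a judgment the analyst should document alongside the color mapping parameters.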

Workflow for Heatmap Creation and Color Interpretation

The following diagram illustrates the integrated process of creating a heatmap with appropriate color gradients, from data preparation to final interpretation.

Diagram 1: Heatmap color interpretation workflow.

Advanced Applications in Scientific Research

Integration with Dendrogram Interpretation

In clustered heatmaps, color gradients and dendrograms work synergistically to reveal data structure. The dendrogram represents hierarchical clustering relationships, while the color gradient encodes data values at the leaf level. When interpreting these visualizations, the vertical height at which branches merge indicates dissimilarity between clusters, with greater heights representing less similarity [3]. The color patterns within these clusters then reveal the biological or experimental significance of the groupings.

For example, in gene expression analysis, a distinct red region (high expression) clustered together with a specific patient group in the dendrogram may indicate a potential biomarker for that patient subtype. The combination of clustering patterns and color intensity allows researchers to form hypotheses about functional relationships and underlying biological mechanisms.

Case Study: Multi-Omics Data Integration

Advanced research increasingly involves integrating multiple data types (e.g., genomics, transcriptomics, clinical variables). The DESPOTA algorithm provides a method for non-horizontal dendrogram cutting, identifying the final partition from a hierarchy of solutions through permutation tests [25]. In such analyses, color gradients become essential for visualizing:

- Continuous molecular data (e.g., methylation levels, gene expression) using sequential palettes

- Categorical clinical variables (e.g., disease status, treatment response) using qualitative palettes

- Deviation from reference values (e.g., z-scores, fold-changes) using diverging palettes

The strategic use of color allows researchers to maintain visual coherence while representing diverse data types within a single analytical framework.

Best Practices and Accessibility Considerations

Color Selection Guidelines

Effective scientific visualization requires adherence to established color principles:

- Match Color Scheme to Data Type: Use qualitative palettes for categorical data, sequential for ordered data, and diverging for data with a critical midpoint [23].

- Ensure Perceptual Uniformity: For sequential data, use palettes that progress evenly from light to dark, avoiding rainbow color schemes which can create artificial boundaries [23].

- Limit Palette Complexity: Restrict qualitative palettes to a small number of distinct colors, ideally 5-7 and no more than about 10, to maintain visual clarity and avoid cognitive overload.

- Maintain Consistency: Use the same color for the same variable across multiple visualizations to facilitate comparison and interpretation.

Accessibility and Inclusivity

Approximately 8% of men and 0.5% of women experience color vision deficiency, making accessibility a critical consideration in scientific visualization [22]. Implement these practices to ensure inclusive design:

- Avoid Problematic Color Combinations: Do not rely solely on red-green combinations, which are the most commonly confused pair, or on blue-yellow combinations, which affect a smaller group of viewers, to convey critical information [23].

- Incorporate Texture and Patterns: When possible, combine color with patterns, textures, or direct labeling to enable interpretation even when color perception is limited.

- Verify Contrast Ratios: Maintain a minimum contrast ratio of 4.5:1 between adjacent colors and between text and background elements [23].

- Test in Grayscale: Verify that all essential information remains discernible when the visualization is converted to grayscale, ensuring compatibility with black-and-white printing.
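The contrast and grayscale checks above can be automated. The sketch below assumes the 4.5:1 threshold refers to the WCAG contrast ratio, which is computed from relative luminance; the function names are illustrative, and the palette is the sequential example from Table 1.

```python
def relative_luminance(hex_color):
    """WCAG relative luminance (0 = black, 1 = white) of '#RRGGBB'."""
    h = hex_color.lstrip("#")
    linear = []
    for i in (0, 2, 4):
        c = int(h[i:i + 2], 16) / 255.0
        # Linearize the sRGB channel as defined in WCAG 2.x
        linear.append(c / 12.92 if c <= 0.03928
                      else ((c + 0.055) / 1.055) ** 2.4)
    r, g, b = linear
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio between two colors, from 1.0 up to 21.0."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


# Sequential palette from Table 1
palette = ["#FFF7EC", "#FEE8C8", "#FDBB84", "#E34A33", "#B30000"]

# Contrast between the two ends of the gradient
print(f"End-to-end contrast: {contrast_ratio(palette[0], palette[-1]):.2f}:1")

# Grayscale check: luminance should change monotonically along the
# palette, so the ordering survives black-and-white printing
luminances = [relative_luminance(c) for c in palette]
is_monotonic = luminances == sorted(luminances, reverse=True)
print("Monotonic in grayscale:", is_monotonic)
```

Luminance is only a proxy for how a figure prints in grayscale, so a visual check of the converted image is still worthwhile.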

Color gradient interpretation represents a critical intersection of visual design and scientific analysis in heatmap and clustering research. By understanding the theoretical foundations of color schemes, implementing robust experimental protocols, and adhering to accessibility standards, researchers can create visualizations that accurately and effectively communicate complex data patterns. The strategic application of qualitative, sequential, and diverging palettes—tailored to specific data types and research questions—enhances the interpretability of heatmaps and dendrograms across diverse scientific domains. As visualization technologies continue to evolve, maintaining rigorous standards for color interpretation will remain essential for ensuring the validity, reproducibility, and accessibility of scientific findings.

Implementation Guide: Choosing Parameters and Building Cluster Heatmaps in R/Python

Within the realm of data science, particularly in fields like bioinformatics and drug development, cluster analysis is a fundamental technique for uncovering hidden patterns in high-dimensional data. The interpretation of resulting dendrograms and heatmaps is not absolute but is profoundly shaped by a critical algorithmic choice: the selection of a distance metric. This metric, which quantifies the similarity or dissimilarity between data points, serves as the foundation for clustering algorithms. The choice of whether to use Euclidean, Manhattan, or Correlation distance dictates how clusters form and, consequently, how scientists derive meaning from visualizations like heatmaps. A poor choice can lead to misleading patterns and incorrect biological or clinical conclusions [2] [18].

This guide provides an in-depth examination of these three core distance metrics, framing them within the context of clustering and heatmap generation for scientific research. It will equip researchers with the principles to select the most appropriate metric, ensuring their cluster analyses are both technically sound and biologically meaningful.

Theoretical Foundations of Distance Metrics

At its core, a distance metric is a function that defines a distance between each pair of elements in a set. In cluster analysis, these elements are typically data points (e.g., genes, samples, patients) represented as vectors in a multi-dimensional space. A proper distance metric must satisfy four mathematical properties: symmetry, non-negativity, the identity of indiscernibles, and the triangle inequality [26].
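These four properties can be checked numerically on a small set of points. The sketch below is a spot check on a finite sample, not a proof, and the helper name satisfies_metric_axioms is hypothetical. Squared Euclidean distance is included as a familiar dissimilarity that fails the triangle inequality.

```python
import math
from itertools import product


def euclidean(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def manhattan(u, v):
    return sum(abs(a - b) for a, b in zip(u, v))


def squared_euclidean(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))


def satisfies_metric_axioms(dist, points, tol=1e-12):
    """Check the four metric properties on every pair/triple of points."""
    for x, y in product(points, repeat=2):
        d = dist(x, y)
        if d < -tol:                       # non-negativity
            return False
        if abs(d - dist(y, x)) > tol:      # symmetry
            return False
        if (d <= tol) != (x == y):         # identity of indiscernibles
            return False
    for x, y, z in product(points, repeat=3):
        if dist(x, z) > dist(x, y) + dist(y, z) + tol:  # triangle inequality
            return False
    return True


points = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (1.0, 3.0)]

for name, dist in [("euclidean", euclidean),
                   ("manhattan", manhattan),
                   ("squared euclidean", squared_euclidean)]:
    print(f"{name}: {satisfies_metric_axioms(dist, points)}")
```

For the three collinear points, the squared distance from (0, 0) to (2, 0) is 4, which exceeds the sum 1 + 1 of the two intermediate steps, so the triangle inequality is violated.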

The choice of metric determines the "geometry" of the data space. Using a different metric is analogous to changing the definition of space itself, which will inevitably alter the relationships between points and the structure of the resulting clusters and dendrograms [27].
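A small example makes this change of geometry concrete. In the sketch below, the profiles are invented for illustration: one candidate is close to the query in magnitude but has the opposite trend, and the other is far away in magnitude but has the same trend. Correlation distance is taken here as 1 minus the Pearson correlation.

```python
import math


def euclidean(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def manhattan(u, v):
    return sum(abs(a - b) for a, b in zip(u, v))


def correlation_distance(u, v):
    """1 - Pearson r: 0 for identical trends, 2 for opposite trends."""
    mean_u, mean_v = sum(u) / len(u), sum(v) / len(v)
    cov = sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v))
    norm_u = math.sqrt(sum((a - mean_u) ** 2 for a in u))
    norm_v = math.sqrt(sum((b - mean_v) ** 2 for b in v))
    return 1.0 - cov / (norm_u * norm_v)


# Hypothetical expression profiles across four conditions
query = [1.0, 2.0, 3.0, 4.0]
candidates = {
    "low_decreasing": [4.0, 3.0, 2.0, 1.0],       # similar level, opposite trend
    "high_increasing": [10.0, 20.0, 30.0, 40.0],  # different level, same trend
}


def nearest(dist):
    """Name of the candidate closest to the query under the given distance."""
    return min(candidates, key=lambda name: dist(query, candidates[name]))


for label, dist in [("Euclidean", euclidean),
                    ("Manhattan", manhattan),
                    ("Correlation", correlation_distance)]:
    print(f"{label:>11}: nearest profile is {nearest(dist)}")
```

Euclidean and Manhattan distance both pair the query with the profile of similar magnitude, while correlation distance pairs it with the profile that shares its trend, so the same data would yield different dendrograms depending on the metric.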

Euclidean Distance