Heatmaps in Gene Expression Analysis: A Comprehensive Guide from Exploration to Clinical Validation

Abstract

This article provides a comprehensive overview of the critical role heatmaps play in exploratory gene expression analysis for researchers, scientists, and drug development professionals. It covers foundational principles of interpreting clustered heatmaps to uncover biological patterns, explores advanced methodological applications including single-cell RNA-Seq and spatial transcriptomics, addresses common troubleshooting challenges related to data complexity and technical variability, and discusses validation strategies to ensure analytical robustness. By integrating current methodologies, tools, and best practices, this guide serves as an essential resource for extracting meaningful biological insights from complex transcriptomic data across diverse research and clinical contexts.

Decoding Biological Patterns: How Heatmaps Transform Raw Gene Expression Data into Discoveries

In the field of functional genomics, heatmaps serve as an indispensable visual tool for the exploratory analysis of high-dimensional biological data. They transform complex numerical matrices of gene expression into intuitive, color-coded representations, enabling researchers to discern patterns, clusters, and biological signatures across thousands of genes and numerous samples simultaneously [1] [2]. This visualization underpins the central thesis of this guide: heatmaps are not merely illustrative outputs but critical tools for hypothesis generation in research areas ranging from disease subtype identification to drug response profiling. For scientists and drug development professionals, mastering the interpretation of a heatmap's core components (its rows, columns, and color scheme) is essential for accurately extracting biological meaning and guiding subsequent experimental validation [3] [2].

Core Components: The Structure of a Biological Heatmap

The architecture of a heatmap is defined by a grid system where the fundamental components correspond directly to the biological and experimental design. The standard construction is a direct mapping of data matrix dimensions to visual elements.

- Rows (Y-axis): In gene expression analysis, each row conventionally represents a single gene, transcript, or functionally related gene set [1] [2]. The order of rows can be alphabetical, sorted by a statistical measure (e.g., p-value), or, most informatively, reorganized by clustering algorithms based on expression similarity.

- Columns (X-axis): Each column typically represents a distinct biological sample or experimental condition [1]. These can be individual patients, different tissue types, time points in a series, or varied treatment groups (e.g., drug vs. vehicle control).

- Cells: The intersection of a specific row (gene) and column (sample) forms a cell. The color and color intensity within this cell encode the value of the main variable of interest, which in genomics is most often a measure of gene expression level [3] [1].

The following table summarizes the standard parameters and configurations for these components in a gene expression context.

Table 1: Standard Configuration of Heatmap Components in Gene Expression Analysis

| Component | Biological Entity | Common Data Type | Typical Sorting Methods |
|---|---|---|---|
| Rows | Genes, Transcripts, Gene Sets | Character (Gene ID), Numeric (Expression) | Hierarchical Clustering, Statistical Significance (p-value), Fold-Change Magnitude [1] [2] |
| Columns | Biological Samples, Experimental Conditions | Character (Sample ID), Factor (Condition) | Hierarchical Clustering, Experimental Group, Time Series Order [1] |
| Cells | Expression Value per Gene per Sample | Numeric (e.g., Log2 Fold Change, Z-score, TPM) | N/A (Value encoded by color) |

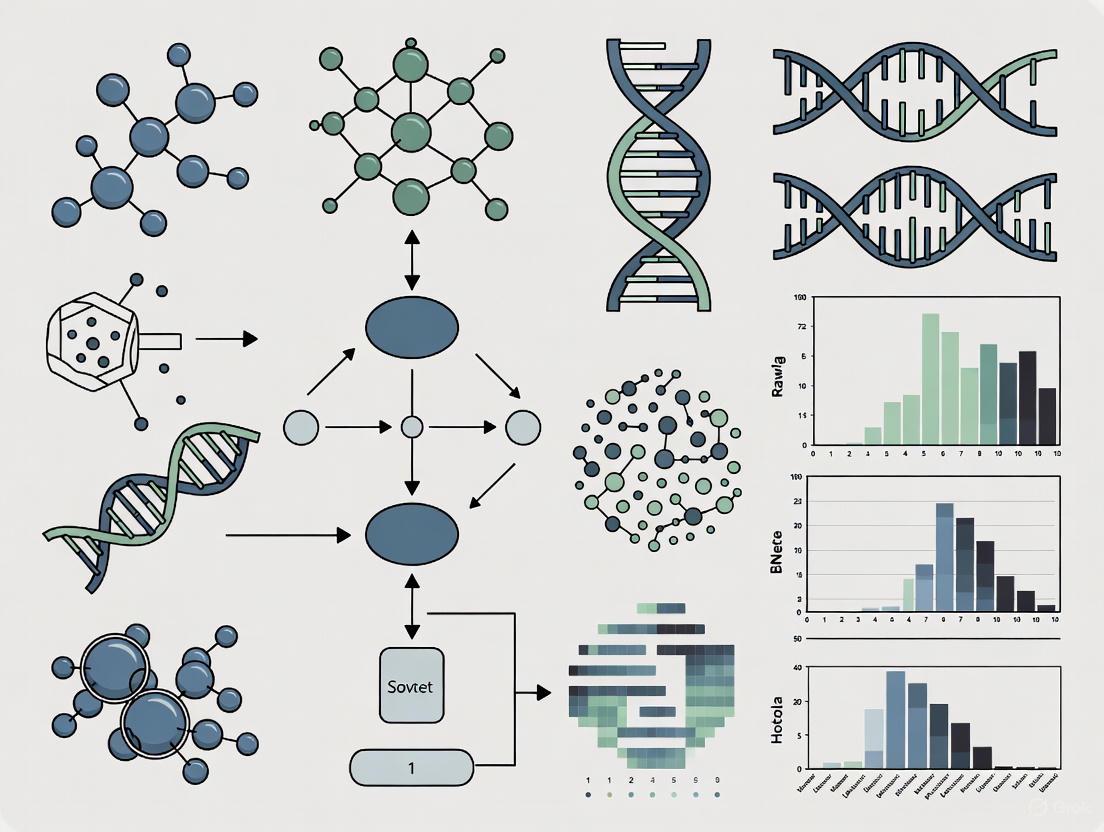

The relationship between data processing and final visualization is a logical workflow. The diagram below illustrates this process from raw data to an interpretable clustered heatmap.

Interpreting the Color Dimension

Color is the primary channel through which quantitative information is communicated in a heatmap. The choice of color palette is therefore not an aesthetic decision but an analytical one, directly impacting the accuracy of interpretation [4].

Types of Color Scales

Two primary types of color scales are used, each suited for different data interpretations:

- Sequential Scales: Progress monotonically from light to dark, either within a single hue (e.g., light to dark blue) or across several hues with steadily changing lightness (e.g., viridis, or light yellow to dark blue). They are ideal for representing absolute expression values (e.g., TPM, FPKM) or other metrics where all values lie on a continuum from low to high [4].

- Diverging Scales: Use two contrasting hues that meet at a central, often neutral, color (e.g., blue-white-red). This scale is specifically designed for data that has a meaningful central point, such as zero in a log2 fold change matrix. It visually distinguishes up-regulated genes (one color) from down-regulated genes (the other color) relative to a control condition [4] [1].

Critical Best Practices for Color

- Avoid Rainbow Scales: The "rainbow" (jet) palette is perceptually non-linear, creates false boundaries, and is problematic for color-blind readers. It should be avoided in scientific communication [4].

- Color-Blind Friendly Palettes: Palettes using combinations like blue-orange or blue-red are generally safe for common forms of color vision deficiency [4].

- Always Include a Legend: A color key (legend) is non-optional, as it provides the definitive mapping between color and numerical value, allowing for quantitative (not just qualitative) reading of the data [3].

Table 2: Application of Color Scales in Gene Expression Heatmaps

| Color Scale Type | Typical Data | Visual Interpretation | Common Color Palette Example |
|---|---|---|---|
| Sequential | Normalized Counts (TPM), Log-Transformed Expression | Light color = Low expression; Dark color = High expression | Viridis, Grays, Blues (single hue) [4] |
| Diverging | Log2 Fold Change (Treatment/Control), Row Z-scores, Mean-centered data | Blue = Down-regulation (or below the gene's mean); White = No change; Red = Up-regulation (or above the mean) [1] [2] | Blue-White-Red, Blue-Black-Yellow [5] |

The logic for selecting an appropriate color scale based on the data's characteristics and biological question is outlined below.
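As a minimal sketch of that logic, the base-R function below returns colors and breaks in the convention pheatmap uses (one more break than colors). The name choose_heatmap_scale() is hypothetical, and the hex colors are borrowed from palettes used elsewhere in this guide. The key design choice is that a diverging scale gets limits symmetric about zero, so the neutral color marks "no change" instead of drifting with the data range.

```r
# Hypothetical helper (not from any package): pick a sequential or diverging
# scale and return colors plus breaks, with length(breaks) == length(colors) + 1.
choose_heatmap_scale <- function(mat, has_midpoint, n_colors = 101) {
  stopifnot(is.matrix(mat), is.numeric(mat), n_colors >= 3)
  if (has_midpoint) {
    # Diverging: limits symmetric about 0. With an odd n_colors the middle
    # color is pure white and its bin is centered on 0.
    lim <- max(abs(mat), na.rm = TRUE)
    colors <- grDevices::colorRampPalette(c("#4285F4", "#FFFFFF", "#EA4335"))(n_colors)
    breaks <- seq(-lim, lim, length.out = n_colors + 1)
    type <- "diverging"
  } else {
    # Sequential: single-hue light-to-dark ramp spanning the data range
    rng <- range(mat, na.rm = TRUE)
    colors <- grDevices::colorRampPalette(c("#E3F2FD", "#0D47A1"))(n_colors)
    breaks <- seq(rng[1], rng[2], length.out = n_colors + 1)
    type <- "sequential"
  }
  list(type = type, colors = colors, breaks = breaks)
}

# Log2 fold changes have a meaningful midpoint (0) ...
lfc <- matrix(c(-2.1, 0.3, 1.4, -0.5, 3.0, 0.0), nrow = 2)
div <- choose_heatmap_scale(lfc, has_midpoint = TRUE)
# ... whereas log2(TPM + 1) values are non-negative magnitudes
log_tpm <- log2(matrix(c(0, 5, 120, 40, 900, 3), nrow = 2) + 1)
sq <- choose_heatmap_scale(log_tpm, has_midpoint = FALSE)
div$colors[51]  # "#FFFFFF"
```

The returned colors and breaks can be passed straight to pheatmap's color and breaks arguments.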

Experimental Protocol: From Data to Clustered Heatmap

Creating a publication-quality clustered heatmap for gene expression analysis involves a series of methodical computational steps. The following protocol outlines a standard workflow using the R programming language and the ComplexHeatmap package, which offers extensive control for biological data [5].

Data Preprocessing

- Input Data: Begin with a numeric matrix of normalized expression values (e.g., TPM from RNA-seq, normalized intensities from microarrays). Rows are genes, columns are samples.

- Transformations: Log-transform the values (e.g., log2(TPM + 1)) to compress their dynamic range. To compare patterns across genes, a row-wise Z-score standardization (mean-centering and scaling by standard deviation) is then often performed to visualize which genes are expressed above or below their own mean across samples.

- Filtering: Filter genes to reduce noise and focus on relevant features, typically by variance (keeping the top n most variable genes) or by statistical significance (e.g., genes with adjusted p-value < 0.05 from a differential expression test).
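A minimal base-R sketch of these preprocessing steps follows, using simulated TPM values; preprocess_for_heatmap() and its top_n default are illustrative assumptions. Note the order: genes are ranked by variance on the log scale before Z-scoring, because after row-wise Z-scoring every gene has unit variance and can no longer be ranked by variability.

```r
# Hypothetical helper: log-transform, keep the top_n most variable genes,
# then Z-score each remaining gene (row) across samples.
preprocess_for_heatmap <- function(expr, top_n = 500) {
  stopifnot(is.matrix(expr), all(expr >= 0, na.rm = TRUE))
  log_expr <- log2(expr + 1)                        # compress the dynamic range
  gene_var <- apply(log_expr, 1, var)               # variance of each gene across samples
  ok <- which(is.finite(gene_var) & gene_var > 0)   # constant genes cannot be Z-scored
  ranked <- ok[order(gene_var[ok], decreasing = TRUE)]
  keep <- ranked[seq_len(min(top_n, length(ranked)))]
  # Filter BEFORE scaling: after row Z-scoring every gene has variance 1
  t(scale(t(log_expr[keep, , drop = FALSE])))
}

set.seed(1)
tpm <- matrix(rexp(2000 * 6, rate = 1 / 50), nrow = 2000,
              dimnames = list(paste0("gene", 1:2000), paste0("S", 1:6)))
z <- preprocess_for_heatmap(tpm, top_n = 200)
dim(z)  # 200 6
```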

Clustering Analysis

Clustering is the computational heart of pattern discovery [1] [2].

- Distance Calculation: For both rows and columns, calculate a pairwise distance matrix. Common metrics include Euclidean distance (for Z-scores) or 1 - Pearson correlation (to cluster by expression profile shape).

- Hierarchical Clustering: Apply agglomerative hierarchical clustering with a linkage criterion (e.g., Ward's method, complete linkage) to the distance matrices. This process generates dendrograms that represent the nested relationships of similarity.
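The practical difference between the two distance choices shows up on a toy matrix. In the base-R sketch below (gene names and values are invented for illustration), geneB has the same profile shape as geneA at ten times the magnitude, and geneC has the opposite shape. Correlation distance groups A with B, while Euclidean distance on unscaled values actually places A closer to C. Average linkage is used here because Ward's criterion assumes Euclidean geometry.

```r
# Toy expression matrix: genes in rows, samples in columns (illustrative values)
expr <- rbind(
  geneA = c(1, 2, 3, 4, 5),
  geneB = c(10, 20, 30, 40, 50),  # same shape as geneA, 10x the magnitude
  geneC = c(5, 4, 3, 2, 1)        # opposite shape to geneA
)
colnames(expr) <- paste0("S", 1:5)

# Correlation distance: cor() works on columns, so transpose to put genes in columns
d_cor <- as.dist(1 - cor(t(expr), method = "pearson"))
# Euclidean distance between gene rows on the raw scale
d_euc <- dist(expr, method = "euclidean")

as.matrix(d_cor)["geneA", "geneB"]  # ~0: identical shape
as.matrix(d_cor)["geneA", "geneC"]  # 2: perfectly anti-correlated
as.matrix(d_euc)["geneA", "geneB"]  # ~66.7, larger than A-to-C (~6.3)

# Agglomerative clustering on the correlation distance
hc_genes <- hclust(d_cor, method = "average")
cutree(hc_genes, k = 2)             # geneA and geneB together, geneC alone
```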

Visualization Code Template

The R code below demonstrates core steps using ComplexHeatmap [5].
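A minimal sketch of such a template follows, assuming the ComplexHeatmap and circlize packages are installed (the plotting step is skipped otherwise). The matrix and group labels are simulated stand-ins, and the clustering choices (average linkage on 1 - Pearson for genes, Ward.D2 on Euclidean distance for samples) are illustrative rather than prescriptive. Dendrograms are precomputed in base R and passed in, since ComplexHeatmap accepts an hclust object for cluster_rows and cluster_columns.

```r
# Simulated stand-ins: 50 genes x 8 samples, Z-scored per gene
set.seed(42)
raw <- matrix(rnorm(50 * 8), nrow = 50,
              dimnames = list(paste0("gene", 1:50), paste0("S", 1:8)))
expr_z <- t(scale(t(raw)))
groups <- rep(c("Control", "Treated"), each = 4)

# Precompute dendrograms so the clustering choices are explicit
row_hc <- hclust(as.dist(1 - cor(t(expr_z))), method = "average")  # genes: 1 - Pearson
col_hc <- hclust(dist(t(expr_z)), method = "ward.D2")              # samples: Euclidean

if (requireNamespace("ComplexHeatmap", quietly = TRUE) &&
    requireNamespace("circlize", quietly = TRUE)) {
  # Diverging scale pinned at 0 so white means "at the gene's mean"
  col_fun <- circlize::colorRamp2(c(-2, 0, 2), c("#4285F4", "#FFFFFF", "#EA4335"))
  top_anno <- ComplexHeatmap::HeatmapAnnotation(
    group = groups,
    col = list(group = c(Control = "#5F6368", Treated = "#FBBC05"))
  )
  ht <- ComplexHeatmap::Heatmap(
    expr_z,
    name = "Z-score",          # also used as the legend title
    col = col_fun,
    cluster_rows = row_hc,     # precomputed hclust objects
    cluster_columns = col_hc,
    top_annotation = top_anno,
    show_row_names = FALSE
  )
  ComplexHeatmap::draw(ht)
}
```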

The Scientist's Toolkit: Essential Reagents & Software

Successful heatmap generation and interpretation rely on both wet-lab reagents for data generation and dry-lab software tools for analysis.

Table 3: Essential Research Reagent Solutions and Software for Heatmap-Based Analysis

| Item Name | Category | Function / Purpose | Example / Note |
|---|---|---|---|
| RNA Extraction Kit | Wet-Lab Reagent | Isolates high-quality total RNA from cells or tissues for expression profiling. | Foundation for all downstream sequencing or array analysis. |
| mRNA Sequencing Library Prep Kit | Wet-Lab Reagent | Prepares cDNA libraries from RNA for next-generation sequencing (RNA-seq). | Generates the raw read data used to calculate expression values. |
| R/Bioconductor | Software Platform | Open-source environment for statistical computing and genomic data analysis. | Core platform for packages like ComplexHeatmap, limma, DESeq2 [5] [6]. |
| ComplexHeatmap R Package | Software Tool | Specialized R package for creating highly customizable and annotatable heatmaps. | Widely used for publication-quality biological heatmaps [5]. |
| clusterProfiler R Package | Software Tool | Performs enrichment analysis on gene lists, such as the gene clusters identified in a heatmap. | Used for biological interpretation of gene clusters identified in the heatmap [2]. |

In the domain of genomics and molecular biology, the analysis of high-dimensional data, such as gene expression from RNA sequencing (RNA-Seq) or microarrays, presents a significant challenge. Exploratory data analysis is a critical first step for generating hypotheses, identifying patterns, and uncovering the biological narratives hidden within complex datasets. Within this context, the clustered heatmap has emerged as an indispensable visualization tool [3]. It transcends simple data representation by integrating statistical clustering with an intuitive color-based matrix, allowing researchers to simultaneously discern patterns across two key dimensions: samples (e.g., tissues, patients, experimental conditions) and features (e.g., genes, transcripts) [7].

A clustered heatmap is fundamentally a grid-based visualization where the color of each cell encodes a numerical value, such as a gene expression level (often as a Z-score or log2 fold-change) [3]. What distinguishes it from a standard heatmap is the addition of hierarchical clustering trees (dendrograms) on its rows and columns [8]. These dendrograms are computed using distance metrics (e.g., Euclidean, Pearson correlation) and linkage methods, dynamically reorganizing the matrix so that similar samples are placed adjacent to each other, and similarly expressed genes are grouped together [7]. This dual clustering reveals latent structures—identifying novel disease subtypes based on transcriptional profiles, pinpointing co-regulated gene modules that may share functional roles or regulatory mechanisms, and highlighting outliers or anomalous samples that deviate from expected patterns [9].

This technical guide frames the clustered heatmap not merely as a chart, but as a core analytical engine within the broader thesis of exploratory gene expression research. It is a bridge between raw data and biological insight, enabling the transition from unsupervised pattern discovery to focused, hypothesis-driven investigation. The following sections detail the mathematical foundations, practical implementation protocols, and advanced applications of clustered heatmaps, providing researchers and drug development professionals with the knowledge to leverage this powerful tool effectively.

Theoretical Foundations and Algorithmic Core

The construction of a biologically meaningful clustered heatmap relies on a series of deliberate choices regarding data transformation, distance measurement, and clustering strategy.

Data Preprocessing & Normalization: Raw gene expression counts (e.g., from RNA-Seq) are not directly suitable for visualization or distance calculation. A standard pipeline includes:

- Logarithmic Transformation: Applying `log2(count + 1)` compresses the wide dynamic range of expression data and partially stabilizes variance, preventing highly expressed genes from dominating the analysis.

- Row-wise Z-score Standardization: For the gene dimension, expression values are often transformed to Z-scores (mean-centered and scaled by standard deviation). This ensures that the color mapping for each gene reflects its deviation from that gene's average across all samples, highlighting relative up- or down-regulation, which is usually more interpretable than absolute expression levels.

- Filtering: Genes with very low expression or minimal variance across samples are typically filtered out, as they contribute little to distinguishing sample groups.

Distance Metrics & Clustering Algorithms: The choice of how to define "similarity" is paramount.

- Distance Metrics for Samples: Common choices include Euclidean distance (straight-line geometric distance) and 1 - Pearson correlation (which clusters samples based on the shape of their expression profiles rather than magnitude).

- Distance Metrics for Genes: Pearson or Spearman correlation is frequently used to group genes with similar expression patterns; such co-expression may indicate co-regulation.

- Clustering Algorithms: Hierarchical clustering is the most common method, which iteratively merges the most similar pairs of items (samples or genes) into a nested tree structure. The results are visualized as dendrograms. The choice of linkage criterion (e.g., complete, average, Ward's) determines how the distance between clusters is calculated and can affect the final grouping [3].
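One way to compare linkage choices on a given dataset (a common diagnostic, not one prescribed by the sources cited here) is the cophenetic correlation: the correlation between the original pairwise distances and the distances implied by the dendrogram. The base-R sketch below computes it for four linkage methods on simulated data with three sample groups.

```r
set.seed(7)
# Simulated data: 30 samples (rows) from three shifted groups, 20 genes
x <- rbind(
  matrix(rnorm(10 * 20, mean = 0), nrow = 10),
  matrix(rnorm(10 * 20, mean = 2), nrow = 10),
  matrix(rnorm(10 * 20, mean = -2), nrow = 10)
)
d <- dist(x)  # Euclidean distances between samples

linkages <- c("single", "complete", "average", "ward.D2")
# Correlate original distances with dendrogram (cophenetic) distances
coph <- sapply(linkages, function(m) cor(d, cophenetic(hclust(d, method = m))))
round(coph, 3)
```

Higher values mean the tree distorts the original distances less; this measures fidelity to the distance matrix, not biological correctness.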

Color Semantics and Perception: The color palette is the primary channel for communicating quantitative information [10].

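- Sequential Palettes: Used for representing values that run from low to high without a meaningful midpoint, such as log-transformed expression. Schemes that increase steadily in lightness, such as a light-to-dark single-hue ramp or viridis, are perceptually uniform [10]. A blue (low) through white (mid) to red (high) scheme, by contrast, is diverging and suits expression Z-scores or log-fold changes centered on zero.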

- Diverging Palettes: Ideal for highlighting deviation from a neutral point (e.g., zero in a fold-change matrix), using two contrasting hues to represent negative and positive values [7].

- Best Practices: Avoid non-linear "rainbow" palettes, as they can mislead perception. Always include a clear legend [10].

The logical flow from raw data to biological insight via a clustered heatmap is summarized below.

Flowchart: The Analytical Pipeline for Constructing a Clustered Heatmap

Quantitative Performance and Method Comparison

Selecting appropriate algorithms and parameters is critical. The table below summarizes key metrics and considerations for core components of the heatmap pipeline.

Table 1: Comparison of Clustering Algorithms and Distance Metrics for Gene Expression Data

| Component | Option | Typical Use Case | Advantages | Limitations/Considerations |
|---|---|---|---|---|
| Distance Metric (Genes) | Pearson Correlation | Identifying co-expressed gene modules [9]. | Scale-invariant; focuses on profile shape rather than absolute expression level. | Sensitive to outliers. Captures only linear relationships. |
| | Spearman Correlation | Co-expression with potential non-linear (monotonic) relationships. | Non-parametric, rank-based, less sensitive to outliers. | Less statistical power than Pearson if data is linear. |
| Distance Metric (Samples) | Euclidean Distance | Distinguishing samples with global expression differences. | Intuitive geometric distance. | Highly sensitive to expression magnitude and scale. |
| | 1 - Pearson Correlation | Grouping samples with similar transcriptional programs. | Focuses on relative expression patterns across genes. | Ignores differences in overall magnitude; anti-correlated profiles receive the maximum distance (2). |
| Clustering Algorithm | Hierarchical Clustering | Exploratory analysis, generating dendrograms for heatmaps. | Provides a full hierarchy of relationships. Intuitive visualization. | Computationally intensive for very large datasets (at least O(n²) time and memory). |
| | k-means | Defining a pre-specified number (k) of distinct clusters. | Computationally efficient for large datasets (cost per iteration grows linearly with n). | Requires prior knowledge of k. Sensitive to initial centroids. |
| Linkage Method | Ward's Linkage | Creating compact, spherical clusters of similar size. | Minimizes within-cluster variance. Often yields clean partitions. | Biased towards spherical clusters; assumes Euclidean distances. |
| | Average Linkage | General-purpose clustering. | Compromises between single and complete linkage. Relatively robust. | |

Table 2: Color Palette Performance Metrics for Scientific Visualization

| Palette Type | Example Colors (Low → High) | Best for Data Type | Perceptual Uniformity | Accessibility (Colorblind) | Recommendation |
|---|---|---|---|---|---|
| Sequential | #E3F2FD → #0D47A1 (Light to Dark Blue) | Unidirectional data (e.g., log-transformed expression, density) [7]. | High if lightness increases monotonically (single-hue ramps, viridis). | Good for most types with sufficient luminance contrast. | Recommended. Use viridis or plasma palettes for optimal uniformity [10]. |
| Diverging | #4285F4 → #FFFFFF → #EA4335 (Blue-White-Red) | Data with a critical midpoint (Fold-change, Z-scores, deviation from control) [7]. | Moderate. Critical to have a neutral, distinct midpoint color. | Poor if using Red-Green; use Blue-Red or Purple-Green. | Use with clear midpoint. Avoid Red-Green for accessibility. |
| Qualitative | Distinct, unrelated colors (e.g., #EA4335, #FBBC05, #4285F4) | Categorical annotations (Tissue type, Phenotype). | Not applicable. | Requires careful selection of distinct hues. | Limit to ≤10 categories. Use for annotation bars only. |

Detailed Experimental Protocols

Protocol 1: Constructing a Clustered Heatmap from RNA-Seq Count Data

This protocol outlines the steps to generate a standard clustered heatmap from a matrix of RNA-Seq read counts using R and the pheatmap package.

Data Input and Preprocessing:

- Input: A numeric matrix `count_data` with genes as rows and samples as columns, plus associated data frames for sample annotations (e.g., `df_sample` with columns for 'Diagnosis' and 'Batch') and gene annotations.

- Filtering: Remove genes with near-zero counts. A common threshold is keeping genes with >10 counts in at least n samples, where n is the size of the smallest group of interest.

- Transformation: Apply a variance-stabilizing transformation (e.g., `DESeq2::vst()`) or a `log2(count + 1)` transformation to the filtered matrix.

Row-wise Scaling and Clustering:

- Scaling: Calculate the Z-score for each gene across samples: `scaled_data <- t(scale(t(transformed_data)))`.

- Clustering: Define clustering parameters. For genes (rows): `clust_row <- hclust(as.dist(1 - cor(t(scaled_data), method = "pearson")), method = "ward.D2")`. For samples (columns): `clust_col <- hclust(dist(t(scaled_data), method = "euclidean"), method = "ward.D2")`. Because Ward's criterion assumes Euclidean distances, applying it to 1 - Pearson is a heuristic; average linkage is a common alternative for correlation distances.

Visualization with pheatmap:

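```r
library(pheatmap)

# Cap extreme Z-scores so a few outliers do not stretch the color scale,
# and use symmetric breaks so white corresponds to Z = 0.
capped <- pmin(pmax(scaled_data, -3), 3)
breaks <- seq(-3, 3, length.out = 101)  # 101 breaks for 100 colors

pheatmap(capped,
         cluster_rows = clust_row,       # precomputed hclust objects
         cluster_cols = clust_col,
         color = colorRampPalette(c("#0D47A1", "#FFFFFF", "#EA4335"))(100),
         breaks = breaks,
         annotation_col = df_sample,     # rownames must match colnames(scaled_data)
         show_rownames = FALSE,          # typically hide gene names
         show_colnames = TRUE,
         fontsize_col = 8,
         main = "Clustered Gene Expression Heatmap")
```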

Protocol 2: Advanced Analysis Using Weighted Gene Co-Expression Network Analysis (WGCNA) and Functional Genomic Imaging (FGI)

For deeper analysis of gene modules and their functional implications, the WGCNA-based FGI pipeline provides a robust framework [9].

WGCNA Network Construction and Module Detection:

- Input: Start with the filtered, normalized expression matrix (e.g., log2(TPM+1)) from a relevant set of samples.

- Soft-Thresholding: Choose a soft-power threshold (β) to achieve a scale-free network topology (R² > 0.85). This emphasizes strong correlations while penalizing weak ones.

- Calculate Adjacency & TOM: Construct an adjacency matrix `a_ij = |cor(gene_i, gene_j)|^β`, then transform it into a Topological Overlap Matrix (TOM), which measures network interconnectedness [9].

- Module Detection: Perform hierarchical clustering on the TOM-based dissimilarity (`dissTOM = 1 - TOM`). Dynamic tree cutting is used to identify co-expression modules (branches of the dendrogram), each assigned a color label (e.g., "blue", "brown"); the corresponding module eigengenes are named "MEblue", "MEbrown".
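The adjacency and TOM steps can be sketched in base R as follows. This is a minimal illustration of the standard unsigned formulas, TOM_ij = (l_ij + a_ij) / (min(k_i, k_j) + 1 - a_ij), where l_ij is the sum over u of a_iu * a_uj and k_i is the connectivity (row sum of the adjacency), applied to simulated genes; it is not the WGCNA implementation. In practice, WGCNA's pickSoftThreshold(), adjacency() and TOMsimilarity() cover these steps, and the static cutree() used here stands in for dynamic tree cutting (e.g., dynamicTreeCut::cutreeDynamic()).

```r
# Unsigned soft-threshold adjacency and topological overlap (illustrative sketch)
soft_tom <- function(expr, beta = 6) {
  # expr: genes x samples; cor() correlates columns, so transpose first
  adj <- abs(cor(t(expr)))^beta               # a_ij = |cor(x_i, x_j)|^beta
  diag(adj) <- 0                               # exclude self-connections
  k <- rowSums(adj)                            # connectivity k_i
  shared <- adj %*% adj                        # l_ij = sum_u a_iu * a_uj
  tom <- (shared + adj) / (outer(k, k, pmin) + 1 - adj)
  diag(tom) <- 1
  tom
}

set.seed(3)
pattern1 <- rep(c(1, -1), 6)          # two uncorrelated 12-sample patterns
pattern2 <- rep(c(1, 1, -1, -1), 3)
expr <- rbind(
  t(replicate(5, pattern1 + rnorm(12, sd = 0.3))),  # genes g1-g5
  t(replicate(5, pattern2 + rnorm(12, sd = 0.3)))   # genes g6-g10
)
rownames(expr) <- paste0("g", 1:10)

tom <- soft_tom(expr, beta = 6)
diss_tom <- 1 - tom
hc <- hclust(as.dist(diss_tom), method = "average")
modules <- cutree(hc, k = 2)  # static cut in place of dynamic tree cutting
modules
```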

Functional Annotation and Heatmap Integration:

- Module Eigengene Calculation: For each module, compute the first principal component (module eigengene, ME), representing the module's expression profile across samples.

- Generate Module Heatmaps: Create a clustered heatmap where rows are the module eigengenes (or the average expression of key genes in the module) and columns are samples. This high-level view shows how entire functional programs correlate across samples.

- Functional Enrichment: Run enrichment analysis (e.g., via Metascape [9]) on genes within each significant module against databases like GO, KEGG, and Reactome. Annotate the heatmap with these functional terms.
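A module eigengene can be sketched as the first principal component of the module's standardized expression, computed here with base-R svd(). The function name is hypothetical and the data are simulated; WGCNA's moduleEigengenes() follows a similar idea, including fixing the arbitrary sign of the component so that it correlates positively with the module's average expression.

```r
# Hypothetical helper: module eigengene as PC1 of standardized module expression.
# Input: genes x samples matrix for the genes of one module.
module_eigengene <- function(module_expr) {
  z <- scale(t(module_expr))          # samples x genes, each gene standardized
  pc1 <- svd(z)$u[, 1]                # first left singular vector (PC1 direction over samples)
  avg <- rowMeans(z)                  # average standardized expression per sample
  if (cor(pc1, avg) < 0) pc1 <- -pc1  # SVD sign is arbitrary; align with the average
  setNames(pc1, rownames(z))
}

set.seed(11)
signal <- c(-2, -1, 0, 1, 2, 3)                            # shared module pattern over 6 samples
mod <- t(replicate(8, signal + rnorm(6, sd = 0.2)))        # 8 co-expressed genes
colnames(mod) <- paste0("S", 1:6)
me <- module_eigengene(mod)
round(cor(me, signal), 3)  # close to 1
```

Rows of the module heatmap described above would then be such eigengenes, one per module.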

The workflow for this advanced integrative analysis is illustrated below.

Workflow: Advanced Co-expression Analysis via WGCNA and Functional Heatmapping

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Bioinformatics Tools and Reagents for Clustered Heatmap Analysis

| Tool/Resource Category | Specific Tool/Software | Primary Function in Analysis | Key Consideration |
|---|---|---|---|
| Primary Analysis Suites | R/Bioconductor | Core environment for statistical analysis, normalization (DESeq2, limma), and network construction (WGCNA) [9]. | Steep learning curve. Extensive package ecosystem. |
| | Python (SciPy, scikit-learn) | Alternative for matrix operations, machine learning, and custom pipeline development. | Growing bioinformatics support (e.g., scanpy for single-cell). |
| Specialized Clustering & Visualization | Morpheus (Broad Institute) | Interactive web-based tool for generating and exploring clustered heatmaps. | User-friendly, no coding required. Excellent for sharing and exploration. |
| | ComplexHeatmap (R) | Highly flexible R package for annotating and composing multi-panel heatmaps. | Allows intricate sample/gene annotations and integration of multiple data types. |
| | ClustVis | Web tool for uploading data and creating PCA plots and heatmaps with clustering. | Quick, simple deployment for initial data exploration. |
| Functional Annotation Databases | Metascape | Automated meta-analysis tool for GO, pathway, and process enrichment of gene lists [9]. | Provides concise, interpretable outputs for module annotation. |
| | DAVID, g:Profiler | Alternative comprehensive functional enrichment analysis suites. | Well-established with extensive annotation sources. |
| Data Sources | The Cancer Genome Atlas (TCGA) | Major public source of clinically annotated cancer transcriptomics data [9]. | Includes linked clinical and molecular data for validation. |
| | Gene Expression Omnibus (GEO) | Archive for functional genomics data from diverse organisms and experimental designs. | Requires careful curation of metadata for sample grouping. |

Applications in Drug Development and Translational Research

Clustered heatmaps move beyond basic visualization to drive decision-making in pharmaceutical research.

- Biomarker Discovery and Patient Stratification: By clustering tumor transcriptomes, heatmaps can reveal molecular subtypes that are not histologically apparent. These subtypes often have distinct clinical outcomes and drug sensitivities. For example, a heatmap might separate a seemingly uniform cancer into "immune-hot" and "immune-cold" subtypes, directly informing patient selection for immunotherapy trials [9].

- Mechanism of Action (MoA) Elucidation: Comparing gene expression profiles of cells treated with a compound versus controls via a clustered heatmap can reveal the compound's impact on specific pathways. Clustered gene modules that are up- or down-regulated can be linked to known pathways (e.g., apoptosis, cell cycle, oxidative stress), helping to hypothesize and validate the drug's MoA.

- Toxicogenomics and Safety Profiling: Heatmaps are used to assess off-target effects by clustering expression data from tissues exposed to a drug candidate. Patterns that cluster with known toxicants can signal potential safety issues early in development.

- Integrative Multi-Omics Analysis: Advanced heatmaps can incorporate annotation tracks from multi-omics data (e.g., mutation status, copy number variation, protein abundance) alongside the primary expression matrix. This allows for the visual correlation of a transcriptional subtype with specific genomic drivers or proteomic features, offering a systems-level view of disease biology.

The clustered heatmap stands as a foundational pillar in the exploratory analysis of gene expression data. Its power lies in its dual capacity for visualization and discovery—transforming a massive numeric matrix into an intelligible map of biological relationships. As part of a broader analytical thesis, it facilitates the identification of sample similarities that may define new disease classifications and uncovers gene co-expression patterns that hint at shared regulatory logic and function.

Mastering its construction—from appropriate data normalization and algorithmic choice to perceptually sound color mapping—is essential for deriving robust, interpretable insights. When coupled with advanced methods like WGCNA and functional enrichment, the clustered heatmap evolves from a descriptive tool into a generative platform for biological hypothesis generation. For researchers and drug developers, proficiency in creating and interpreting these visualizations is not merely a technical skill but a critical component of the modern toolkit for translating complex genomic data into actionable biological understanding and therapeutic strategies.

Within the framework of exploratory gene expression analysis research, heatmaps serve as an indispensable bridge between complex numerical data and biological insight. These visualizations transform matrices of expression values—often comprising thousands of genes across multiple experimental conditions—into intuitive color-coded maps. The effectiveness of this translation hinges on the careful application of color scales, which encode differential expression magnitudes, statistical significance, and temporal dynamics. When designed with precision, a heatmap does more than display data; it reveals patterns of co-regulation, highlights outlier samples, and guides hypothesis generation for downstream validation. This technical guide examines the principles, methodologies, and latest tools for creating robust, informative, and accessible color visualizations of differential expression, positioning heatmaps as a critical component in the analytical workflow from discovery to drug development [11] [12].

Foundational Principles of Color Scale Design for Biological Data

The translation of numerical fold-changes and p-values into color requires adherence to core visual and accessibility principles. A well-designed color scale must be perceptually uniform, meaning equal steps in data value correspond to equal perceptual steps in color, and intuitively ordered, typically using either a sequential scheme (low to high) or a diverging scheme (negative and positive values on either side of a central midpoint).

A critical technical requirement is ensuring sufficient color contrast for readability and accessibility. The Web Content Accessibility Guidelines (WCAG) define minimum contrast ratios between foreground elements (like text or symbols) and their background [13] [14]. For standard text, a contrast ratio of at least 4.5:1 is required (WCAG AA), with an enhanced threshold of 7:1 for higher compliance (WCAG AAA). "Large text" (generally ≥18pt or ≥14pt bold) requires a minimum ratio of 3:1 [15]. These rules are directly applicable to heatmap annotations, axis labels, and legends.

For the color scales themselves, contrast between adjacent color bands is vital for accurate value discrimination. This guide utilizes a defined color palette derived from established design systems, ensuring visual consistency and adequate differentiation [16] [17].

- Primary Data Colors: #4285F4 (Blue), #EA4335 (Red), #FBBC05 (Yellow), #34A853 (Green).

- Neutral Backgrounds & Text: #FFFFFF (White), #F1F3F4 (Light Grey), #202124 (Dark Grey), #5F6368 (Medium Grey).

When applying this palette, text color (fontcolor) must be set explicitly against a node's or cell's background (fillcolor) to maintain high contrast [13]. For instance, dark grey (#202124) text on a white (#FFFFFF) or yellow (#FBBC05) background provides excellent contrast (roughly 16:1 and 9.4:1, respectively). White text on the medium blue #4285F4, however, reaches only about 3.6:1: enough for large or bold labels under the 3:1 rule, but below the 4.5:1 AA threshold for standard text.
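Contrast ratios for any pair of palette colors can be checked with the WCAG 2.x relative-luminance formula. The base-R sketch below implements that formula directly; relative_luminance() and contrast_ratio() are illustrative helper names, not functions from any package.

```r
# WCAG 2.x relative luminance of a hex color (base R only)
relative_luminance <- function(hex) {
  rgb <- grDevices::col2rgb(hex)[, 1] / 255   # sRGB channels scaled to [0, 1]
  # Linearize each channel (WCAG uses the 0.03928 threshold)
  lin <- ifelse(rgb <= 0.03928, rgb / 12.92, ((rgb + 0.055) / 1.055)^2.4)
  sum(c(0.2126, 0.7152, 0.0722) * lin)
}

# Contrast ratio (L_lighter + 0.05) / (L_darker + 0.05), ranging from 1 to 21
contrast_ratio <- function(fg, bg) {
  l <- sort(c(relative_luminance(fg), relative_luminance(bg)), decreasing = TRUE)
  (l[1] + 0.05) / (l[2] + 0.05)
}

pairs <- list(c("#202124", "#FFFFFF"),   # dark grey on white
              c("#202124", "#FBBC05"),   # dark grey on yellow
              c("#FFFFFF", "#4285F4"))   # white on blue
ratios <- sapply(pairs, function(p) contrast_ratio(p[1], p[2]))
round(ratios, 2)  # about 16.1, 9.4 and 3.6
```

The third pair falls below 4.5:1, so on that blue, white is best reserved for large or bold labels.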

Methodologies & Experimental Protocols for Advanced Visualization

Modern differential expression visualization extends beyond static heatmaps. The following sections detail protocols for implementing cutting-edge tools that address specific analytical challenges.

Protocol: Differential Analysis and Heatmap Generation with DgeaHeatmap

The DgeaHeatmap R package provides a streamlined, server-independent workflow for end-to-end analysis, from raw counts to publication-ready visualizations [11].

1. Input Data Preparation:

- For normalized data: Load a CSV file containing gene names, sample names, and normalized expression counts into R.

- For raw Nanostring GeoMx Digital Spatial Profiling (DSP) data: Load the required DCC (data), PKC (probe kit), and XLSX (annotation) files.

2. Data Preprocessing & Matrix Construction:

- Use the `build_matrix()` function to convert the loaded data into an expression matrix.

- Filter for the top n most variably expressed genes across samples using `filtering_for_top_exprsGenes()`.

- Apply Z-score normalization to counts across samples for comparative scaling with `scale_counts()`.

3. Differential Expression Analysis (Optional but Recommended):

- Perform statistical testing using an integrated method (limma-voom, DESeq2, or edgeR).

- Extract and filter lists of differentially expressed genes (DEGs) based on log2 fold-change and adjusted p-value thresholds.

4. Clustering & Visualization Optimization:

- Visualize the data distribution with `show_data_distribution()` to assess normality.

- Generate an elbow plot to choose a suitable number of clusters (k) for k-means clustering by plotting the total within-cluster sum of squares against k.

- Create the final heatmap using functions like `print_heatmap()` or `adv_heatmap()`. Clusters are automatically annotated with the genes showing the highest variance within each cluster.
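The elbow step can be reproduced outside DgeaHeatmap with base R. The sketch below does not use the package's API; elbow_wss() is a hypothetical helper that records the total within-cluster sum of squares for k = 1 to k_max on simulated data, with several random starts (nstart) to reduce k-means' sensitivity to initial centroids.

```r
# Hypothetical helper: total within-cluster sum of squares for k = 1..k_max
elbow_wss <- function(x, k_max = 8, nstart = 25, seed = 1) {
  set.seed(seed)  # k-means starts are random; fix them for reproducibility
  vapply(seq_len(k_max),
         function(k) kmeans(x, centers = k, nstart = nstart)$tot.withinss,
         numeric(1))
}

set.seed(5)
# Simulated genes (rows) x 6 samples, drawn from three well-separated groups
z <- rbind(matrix(rnorm(40 * 6, mean = -2), nrow = 40),
           matrix(rnorm(40 * 6, mean = 0), nrow = 40),
           matrix(rnorm(40 * 6, mean = 2), nrow = 40))
wss <- elbow_wss(z, k_max = 8)
plot(seq_along(wss), wss, type = "b", xlab = "Number of clusters k",
     ylab = "Total within-cluster sum of squares")  # look for the bend ("elbow")
```

The curve starts at the total sum of squares (k = 1) and typically drops sharply until k reaches the number of well-separated groups (three here, by construction), after which gains flatten. Reading the bend is a judgment call, not a formal test.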

The following diagram illustrates this integrated workflow.

Protocol: Pathway-Centric Visualization with Pathway Volcano

The Pathway Volcano tool addresses the overplotting in standard volcano plots by filtering data through biological pathways [18].

1. Tool Setup:

- Install the R Shiny package from GitHub. Ensure the required dependencies (ggplot2, plotly, shiny, dplyr, ReactomeContentService4R) are installed.

- Load a differential expression results table containing gene identifiers, log2 fold-change values, and p-values.

2. Interactive Pathway Filtering:

- Launch the Shiny application locally. The interface connects to the Reactome pathway knowledgebase via API.

- Use the search and selection tools to choose one or more pathways of interest (e.g., "Inflammatory Response" or "Cell Cycle").

3. Visualization and Interpretation:

- The tool dynamically regenerates the volcano plot, displaying only genes associated with the selected pathways. This declutters the view.

- Interact with the plot: hover to see gene details, click to select points, and zoom into regions of interest.

- Download the filtered gene list or a high-resolution PNG of the plot for documentation.
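The filtering idea behind Pathway Volcano can be sketched outside Shiny. The Python below is an illustrative assumption, not the tool's code: it adjusts p-values with the Benjamini-Hochberg procedure across the full results table, keeps only genes in a selected pathway, and flags those passing log2 fold-change and adjusted p-value cutoffs. The gene set, cutoffs, and numbers are hypothetical placeholders rather than a Reactome query.

```python
import math


def bh_adjust(pvalues):
    """Benjamini-Hochberg adjusted p-values (step-up, monotonicity enforced)."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values never increase with rank.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def pathway_volcano_points(de_table, pathway_genes, lfc_cut=1.0, padj_cut=0.05):
    """Return (gene, log2FC, -log10 padj, significant) for genes in the pathway."""
    padj = bh_adjust([p for _, _, p in de_table])  # adjust over ALL tested genes
    points = []
    for (gene, lfc, _), q in zip(de_table, padj):
        if gene not in pathway_genes:
            continue  # declutter: drop genes outside the selected pathway
        significant = abs(lfc) >= lfc_cut and q < padj_cut
        points.append((gene, lfc, -math.log10(max(q, 1e-300)), significant))
    return points


# Hypothetical DE results: (gene, log2 fold-change, raw p-value).
de_table = [("CDK1", 2.1, 1e-4), ("CCNB1", 1.4, 2e-3), ("MKI67", 0.3, 0.4),
            ("IL6", 3.0, 1e-5), ("ACTB", 0.05, 0.9)]
cell_cycle = {"CDK1", "CCNB1", "MKI67"}  # illustrative subset, not a Reactome export
for row in pathway_volcano_points(de_table, cell_cycle):
    print(row)
```

Adjusting before filtering is the deliberate choice here: correcting only within the displayed pathway would understate how many tests were actually performed.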

Protocol: Analyzing Temporal Dynamics with Temporal GeneTerrain

Temporal GeneTerrain visualizes the continuous evolution of gene expression over time within a fixed network layout [19].

1. Data Normalization and Gene Selection:

- Apply Z-score normalization to expression values across all time points for each gene.

- Select the top 1,000 most variably expressed genes over time.

- Calculate pairwise Pearson correlation coefficients and retain genes with strong co-expression (e.g., r ≥ 0.5), ensuring coordinated dynamics.

2. Network Construction and Layout Freezing:

- Build a protein-protein interaction (PPI) network for the selected co-expressed genes using a reference database.

- Embed the network into a 2D layout using a force-directed algorithm (e.g., Kamada-Kawai). Crucially, this node layout is calculated once and frozen for all subsequent time points to enable direct comparison.

3. Dynamic Terrain Generation:

- For each experimental condition and time point, map the normalized expression values onto the frozen network layout.

- Represent expression as a Gaussian density field (with a defined sigma, e.g., σ=0.03) centered at each gene's node, creating a smooth "terrain" where hills represent high expression and valleys represent low expression.

- Generate a series of terrains across the time course to visualize waves of expression change propagating through the molecular network.
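The terrain step can be sketched as a weighted sum of Gaussian kernels evaluated on a grid. This Python example is a simplification under stated assumptions: the node coordinates are hard-coded stand-ins for a frozen force-directed layout (no Kamada-Kawai computation or PPI lookup is performed), expression values are assumed to be per-gene Z-scores, and the grid resolution is arbitrary. Reusing one layout dictionary for every time point is what keeps the terrains directly comparable.

```python
import math


def gene_terrain(layout, expression, sigma=0.03, grid=51):
    """Evaluate sum_g z_g * exp(-||x - p_g||^2 / (2 sigma^2)) over the unit square.

    layout: gene -> (x, y) node position, computed once and frozen.
    expression: gene -> Z-scored expression at one condition/time point.
    Positive Z-scores raise hills; negative Z-scores carve valleys.
    """
    field = [[0.0] * grid for _ in range(grid)]
    for gy in range(grid):
        y = gy / (grid - 1)
        for gx in range(grid):
            x = gx / (grid - 1)
            total = 0.0
            for gene, (nx, ny) in layout.items():
                d2 = (x - nx) ** 2 + (y - ny) ** 2
                total += expression.get(gene, 0.0) * math.exp(-d2 / (2 * sigma ** 2))
            field[gy][gx] = total
    return field


# Hypothetical frozen layout shared by every time point (not a computed embedding).
layout = {"GENE_A": (0.2, 0.2), "GENE_B": (0.8, 0.8), "GENE_C": (0.2, 0.8)}
time_course = {  # hypothetical Z-scores per time point
    "0h": {"GENE_A": 2.0, "GENE_B": -1.5, "GENE_C": 0.5},
    "6h": {"GENE_A": -0.5, "GENE_B": 1.0, "GENE_C": 1.8},
}
terrains = {t: gene_terrain(layout, z) for t, z in time_course.items()}
# Height at GENE_A's node (grid index 10 maps to coordinate 0.2 when grid=51).
print({t: round(f[10][10], 3) for t, f in terrains.items()})
```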

The methodology integrates data processing, network analysis, and spatial visualization.

Comparative Analysis of Visualization Tools

Selecting the appropriate visualization tool depends on the biological question, data structure, and desired insight. The table below compares the core tools discussed [11] [18] [19].

Table 1: Comparative Feature Matrix of Differential Expression Visualization Tools

| Tool / Method | Primary Function | Key Strengths | Ideal Use Case |
|---|---|---|---|
| DgeaHeatmap [11] | End-to-end DEG analysis & static heatmap generation | Server-independent; integrates preprocessing, DEG testing, and clustering; highly customizable. | Generating publication-quality heatmaps from raw or normalized count data. |
| Pathway Volcano [18] | Interactive, pathway-filtered volcano plots | Reduces visual clutter; integrates biological context via Reactome; interactive exploration. | Interpreting DEG lists in the context of specific pathways or biological processes. |
| Temporal GeneTerrain [19] | Dynamic visualization of expression over a network | Captures continuous temporal dynamics; reveals network propagation of signals; fixed layout enables comparison. | Analyzing time-series or dose-response data to understand cascade effects and delayed responses. |
| bigPint [12] | Interactive multi-plot diagnostics for RNA-seq | Detects normalization issues and model artifacts; uses parallel coordinate plots for cluster diagnosis. | Quality control and diagnostic checking of differential expression analysis results. |

The Scientist's Toolkit: Essential Research Reagent Solutions

The implementation of the protocols above relies on a combination of wet-lab platforms, bioinformatics software, and reference databases. The following table details key components of the modern visualization toolkit.

Table 2: Key Research Reagent Solutions for Advanced Expression Visualization

| Category | Item / Resource | Function & Role in Visualization |
|---|---|---|
| Spatial Profiling Platform | Nanostring GeoMx Digital Spatial Profiler (DSP) [11] | Generates spatially resolved, whole transcriptome RNA expression data from tissue sections. Provides the raw count data (DCC files) that serve as primary input for spatial heatmap analysis. |
| Core Analysis Software | R Statistical Environment & RStudio [11] [18] | The foundational computational environment for executing statistical analysis, implementing visualization algorithms, and running interactive Shiny apps. |
| Differential Analysis Packages | limma, DESeq2, edgeR [11] | Industry-standard R packages for robust statistical identification of differentially expressed genes from count data. Their output (logFC, p-values) is the numerical basis for color mapping. |
| Visualization Packages | ComplexHeatmap, ggplot2, plotly [11] [18] | Specialized R libraries that provide the low-level and high-level functions for constructing static and interactive plots, including heatmaps and volcano plots. |
| Pathway Knowledgebase | Reactome Pathway Database [18] | A curated, peer-reviewed database of biological pathways. Used via its API to map gene lists to functional annotations, enabling pathway-guided visualization and interpretation. |
| Interaction Reference | Protein-Protein Interaction Networks (e.g., STRING, BioGRID) [19] | Databases of known and predicted molecular interactions. Provide the network topology required for methods like Temporal GeneTerrain, placing expression data in a systems biology context. |

The transition from numerical differential expression data to color visualizations is a critical, non-trivial step in genomic research. As demonstrated, contemporary tools like DgeaHeatmap, Pathway Volcano, and Temporal GeneTerrain move beyond simple plotting to offer integrated, biologically contextualized, and interactive exploration [11] [18] [19]. Adherence to design principles—especially perceptual color scaling and accessibility contrast standards—ensures these visualizations are both scientifically accurate and universally interpretable [13] [15] [14]. Within the broader thesis of exploratory analysis, strategic visualization acts as a powerful hypothesis engine. By effectively translating numbers into colors, researchers and drug developers can discern subtle patterns, validate analytical models, and ultimately prioritize the most promising targets and biomarkers for further experimental investigation and therapeutic development.

Within the broader thesis on the role of visualization in exploratory data analysis, heatmaps are not merely illustrative outputs but are fundamental, interactive instruments for biological discovery. In the context of high-dimensional genomics, they transform abstract matrices of gene expression values into intuitive, color-coded maps that reveal the latent structure of data [20]. When integrated with dendrograms from hierarchical clustering, heatmaps enable researchers to simultaneously visualize patterns across both samples (columns) and genes (rows), making them indispensable for the initial identification of potential disease subtypes [20] [21]. This visual synthesis guides the formulation of biological hypotheses regarding shared pathophysiology, prognostic differences, and therapeutic vulnerabilities among clustered patient groups. This case study elucidates the complete technical workflow, from raw data to validated subtypes, demonstrating how heatmaps anchor the iterative process of unsupervised clustering and biological interpretation in precision medicine.

Methodological Foundations: From Data to Clusters

The reliable discovery of disease subtypes hinges on a rigorous, multi-stage analytical workflow. Each stage involves critical decisions that directly impact the validity and biological relevance of the resulting clusters.

Core Unsupervised Clustering Workflow

The process of subtype discovery follows a systematic pipeline, from data curation to biological validation. The following diagram outlines the four major phases and their key decision points.

Diagram 1: The four-phase workflow for unsupervised disease subtype discovery [22] [23].

Detailed Experimental Protocols

Protocol 1: Data Preparation and Feature Selection for RNA-Seq Data

- Objective: To transform raw count data into a normalized, feature-selected matrix suitable for clustering.

- Input: Raw gene expression count matrix (e.g., from RNA sequencing) [23].

- Procedure:

- Pre-filtering: Remove genes with low expression. A common threshold is keeping genes with a count of 10 or higher in at least 10% of samples [23].

- Normalization & Transformation: Apply a variance-stabilizing transformation (VST) using the vst() function from the DESeq2 R package. Alternatively, use log2(FPKM+1) or log2(RSEM+1) for depth-normalized data [23].

- Feature Selection: Reduce dimensionality by selecting genes with high biological variability. Calculate the Median Absolute Deviation (MAD) for each gene across samples and select the top 2,000-6,000 MAD-ranked genes [23]. This focuses the analysis on the most informative features.

- Output: A normalized numerical matrix (samples x selected genes).
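Pre-filtering and MAD-based feature selection can be sketched in standard-library Python. Assumptions: counts are held as a gene-to-list dictionary, log2(count + 1) stands in for DESeq2's vst() (it is not a variance-stabilizing transformation), and the toy counts are invented. R's mad() multiplies by a consistency constant (1.4826 by default); the unscaled version below produces the same gene ranking.

```python
import math
import statistics


def prefilter(counts, min_count=10, min_frac=0.10):
    """Keep genes with a count >= min_count in at least min_frac of samples."""
    n_samples = len(next(iter(counts.values())))
    needed = math.ceil(min_frac * n_samples)
    return {g: v for g, v in counts.items() if sum(c >= min_count for c in v) >= needed}


def mad(values):
    """Unscaled median absolute deviation."""
    med = statistics.median(values)
    return statistics.median([abs(v - med) for v in values])


def top_mad_genes(expr, n_top):
    """Rank genes by MAD across samples and return the n_top most variable."""
    return sorted(expr, key=lambda g: mad(expr[g]), reverse=True)[:n_top]


raw_counts = {  # hypothetical gene -> counts across 10 samples
    "G1": [0, 1, 0, 2, 0, 0, 0, 0, 0, 0],            # never reaches 10: filtered out
    "G2": [100, 5, 200, 3, 150, 4, 180, 6, 120, 2],  # highly variable
    "G3": [50, 52, 49, 51, 50, 48, 53, 50, 49, 51],  # stable
    "G4": [12, 0, 0, 0, 0, 0, 0, 0, 0, 0],           # passes filter, MAD = 0
}
kept = prefilter(raw_counts)
logged = {g: [math.log2(c + 1) for c in v] for g, v in kept.items()}  # VST stand-in
print(sorted(kept), top_mad_genes(logged, 2))
```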

Protocol 2: Consensus Clustering for Robust Subtype Identification

- Objective: To identify stable and robust patient clusters using a resampling-based approach.

- Input: Prepared numerical matrix from Protocol 1.

- Procedure [22] [23]:

- Subsampling: Repeatedly (e.g., 10,000 times) sample a proportion (e.g., 80%) of the patients and/or features.

- Clustering Each Iteration: For each subsample, apply a base clustering algorithm (e.g., Partitioning Around Medoids - PAM) for a proposed number of clusters k.

- Build Consensus Matrix: For each pair of patients, calculate the consensus index—the proportion of subsamples in which they are assigned to the same cluster. This forms a consensus matrix where values range from 0 (never clustered together) to 1 (always clustered together).

- Determine Optimal k: Repeat steps 1-3 for a range of k (e.g., 2 to 10). The optimal k is chosen by evaluating:

- Output: A final cluster assignment (subtype label) for each patient at the optimal k.
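The consensus-index computation can be sketched as follows. This is a simplified stand-in for ConsensusClusterPlus, not its implementation: the base clusterer is a basic alternating k-medoids (farthest-first seeding, then reassignment and medoid updates), which is simpler than PAM's full BUILD/SWAP search, and each pair's index is normalized by the number of subsamples in which both patients were drawn. The toy patients and resampling settings are illustrative.

```python
import math
import random


def kmedoids_labels(points, k, n_iter=20):
    """Simplified alternating k-medoids on Euclidean distance; one label per point."""
    medoids = [0]
    while len(medoids) < k:  # farthest-first seeding
        medoids.append(max(range(len(points)),
                           key=lambda i: min(math.dist(points[i], points[m]) for m in medoids)))
    labels = []
    for _ in range(n_iter):
        labels = [min(range(k), key=lambda c: math.dist(p, points[medoids[c]])) for p in points]
        new_medoids = []
        for c in range(k):
            members = [i for i, lab in enumerate(labels) if lab == c]
            # New medoid: the member with the smallest total distance to its cluster.
            new_medoids.append(min(members, key=lambda i: sum(
                math.dist(points[i], points[j]) for j in members)))
        if new_medoids == medoids:
            break
        medoids = new_medoids
    return labels


def consensus_matrix(points, k, n_resamples=200, frac=0.8, seed=1):
    """Fraction of co-sampled subsamples in which each pair shares a cluster."""
    rng = random.Random(seed)
    n = len(points)
    size = max(k, round(frac * n))
    together = [[0] * n for _ in range(n)]
    co_sampled = [[0] * n for _ in range(n)]
    for _ in range(n_resamples):
        idx = rng.sample(range(n), size)  # subsample patients without replacement
        labels = kmedoids_labels([points[i] for i in idx], k)
        for a, i in enumerate(idx):
            for b, j in enumerate(idx):
                co_sampled[i][j] += 1
                together[i][j] += labels[a] == labels[b]
    # Pairs never drawn together get NaN rather than a misleading 0.
    return [[together[i][j] / co_sampled[i][j] if co_sampled[i][j] else float("nan")
             for j in range(n)] for i in range(n)]


# Hypothetical patients described by two features, forming two clear groups.
patients = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0), (5.1, 5.0), (5.0, 5.1)]
M = consensus_matrix(patients, k=2)
for row in M:
    print(" ".join(f"{v:.2f}" for v in row))
```

Values near 1 within a block and near 0 between blocks indicate a stable partition; repeating this over a range of k gives the matrices from which the optimal k is judged.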

Protocol 3: Heatmap Generation for Integrated Visualization

- Objective: To create a publication-quality heatmap that integrates expression patterns, cluster assignments, and annotations.

- Input: Normalized expression matrix and cluster assignment labels.

- Procedure using the pheatmap R package [20] [21]:

- Scale Data: Typically, scale expression values by row (gene) using Z-score to highlight relative expression patterns across samples: z-score = (value - mean) / standard deviation [20].

- Configure Heatmap:

- Enhance with ComplexHeatmap: For advanced annotations and multiple data integrations, the ComplexHeatmap package offers superior flexibility [21] [24].


- Output: A composite heatmap figure displaying coordinated gene expression patterns aligned with sample clusters and metadata.

The Scientist's Toolkit: Essential Research Reagents & Software

Table 1: Key software tools and packages for unsupervised clustering and visualization.

| Tool/Package Name | Category | Primary Function | Key Application in Workflow |
|---|---|---|---|
| R/Bioconductor | Programming Environment | Statistical computing and genomics analysis. | Core platform for executing the entire workflow [20] [23]. |
| DESeq2 | R/Bioconductor Package | Differential expression and data normalization. | Performing variance-stabilizing transformation (VST) on raw RNA-seq counts [23]. |
| ConsensusClusterPlus | R/Bioconductor Package | Resampling-based clustering. | Implementing consensus clustering to determine stable patient subtypes [22] [23]. |
| pheatmap | R Package | Static heatmap generation. | Creating clear, publication-ready heatmaps with integrated dendrograms and annotations [20] [21]. |
| ComplexHeatmap | R/Bioconductor Package | Advanced heatmap visualization. | Building highly complex and annotated heatmaps, integrating multiple data types [21] [24]. |
| MATLAB | Programming Environment | Image processing and numerical analysis. | Extracting quantitative imaging features from medical scans (e.g., MRI) [22]. |

Case Study: Unsupervised Clustering of Breast Cancer Imaging Phenotypes

This seminal study demonstrates the application of the above workflow to discover novel breast cancer subtypes using quantitative magnetic resonance imaging (MRI) features, subsequently validating their prognostic and biological relevance [22].

Study Design and Data Processing

The analysis was conducted in three sequential phases across multiple patient cohorts [22]:

- Subtype Discovery & Validation: Unsupervised clustering was performed independently on a discovery cohort (n=60) and a validation cohort from The Cancer Genome Atlas (TCGA, n=96).

- Prognostic Validation: The association of imaging subtypes with Recurrence-Free Survival (RFS) was tested in the discovery cohort and five additional gene expression cohorts (n=1,160).

- Biological Interpretation: Underlying molecular pathways associated with each imaging subtype were elucidated.

From each patient's dynamic contrast-enhanced (DCE)-MRI scan, 110 quantitative image features were extracted, characterizing tumor morphology, intra-tumor heterogeneity of contrast kinetics, and enhancement of the surrounding parenchyma [22].

Application of Consensus Clustering

Researchers applied consensus clustering (PAM algorithm, Euclidean distance, 10,000 bootstraps) to the imaging features. The process for determining the optimal number of clusters is visually summarized below.

Diagram 2: The consensus clustering workflow used to determine the optimal number (k) of imaging subtypes [22] [23].

Key Findings and Validation

The analysis identified three robust imaging subtypes: 1) Homogeneous intratumoral enhancing, 2) Minimal parenchymal enhancing, and 3) Prominent parenchymal enhancing [22].

Table 2: Prognostic performance of breast cancer imaging subtypes in independent cohorts.

| Patient Cohort | Sample Size (n) | 5-Year Recurrence-Free Survival (RFS) by Subtype | Log-rank P-value |
|---|---|---|---|
| Discovery (Imaging) Cohort [22] | 60 | Subtype 1: 79.6%; Subtype 2: 65.2%; Subtype 3: 52.5% | 0.025 |
| Aggregate Gene Expression Cohorts [22] | 1,160 | Subtype 1: 88.1%; Subtype 2: 74.0%; Subtype 3: 59.5% | <0.0001 to 0.008 |

The imaging subtypes provided prognostic information independent of standard clinicopathological factors (multivariable hazard ratio = 2.79, P=0.016) and were associated with distinct dysregulated molecular pathways, suggesting potential therapeutic targets [22].

Integrated Visualization: The Heatmap as an Analytical Engine

The final heatmap is the synthesis point of the analysis. It serves as a visual proof of concept, displaying how the clustered samples align with the high-dimensional data that defined them. The creation of such an integrated visualization involves a specific pipeline.

Diagram 3: Pipeline for constructing an integrated heatmap that synthesizes clustered data with annotations.

In the breast cancer case study, the final heatmap would display the 110 imaging features (rows) across patient samples (columns), with columns ordered by the three identified subtypes [22]. The color gradient would reveal the specific imaging phenotype—such as heterogeneous wash-in kinetics or pronounced surrounding enhancement—that characterizes each subtype group. This visual output directly links the abstract statistical cluster to an interpretable biological phenotype.

This case study underscores that unsupervised clustering is a hypothesis-generating engine, with the heatmap serving as its indispensable visual interface. The methodology, from rigorous data preparation and consensus clustering to multi-layered validation, provides a template for discovering biologically and clinically relevant disease subtypes across omics and imaging data. The resulting subtypes, such as the three breast cancer imaging classes, offer more than just prognostic stratification; they reveal distinct biological states that may inform tailored therapeutic strategies. As data complexity grows, these integrative approaches, centered on robust clustering and clear visualization, will remain pivotal in advancing personalized medicine.

Abstract

Within exploratory gene expression analysis, the transition from raw high-dimensional data to biological insight is a fundamental challenge. This technical guide posits that the heatmap is not merely a visualization tool but a critical engine for hypothesis generation. By transforming normalized expression matrices into intuitive color-encoded landscapes, heatmaps facilitate the visual detection of co-expression patterns, sample clusters, and outlier behaviors that form the cornerstone of testable biological hypotheses [20] [2]. Framed within a broader thesis on analytical workflow efficiency, this whitepaper details the methodologies for constructing and interpreting clustered heatmaps, provides explicit experimental protocols, and outlines how resultant visual patterns directly inform subsequent research directions in genomics and drug development.

Exploratory Data Analysis (EDA) is the critical first step in extracting meaning from complex datasets, focusing on summarizing main characteristics, often with visual methods. In genomics, where datasets comprise thousands of genes (variables) across multiple samples (observations), EDA faces the unique challenge of dimensionality. Heatmaps address this by providing a compact, two-dimensional matrix representation where a third dimension—gene expression level—is encoded using a color gradient [3]. This allows researchers to perceive global patterns that would be imperceptible in tabular data.

In gene expression studies, such as those from RNA sequencing or microarray experiments, data is typically structured as a matrix with rows corresponding to genes and columns to samples [20] [2]. A standard heatmap displays this matrix, using color intensity (e.g., shades of red and blue) to represent normalized expression values, often transformed via Z-scoring to highlight relative up- or down-regulation within a gene across samples [20]. The primary analytical power, however, is unlocked through clustered heatmaps. These apply hierarchical clustering to both rows and columns, grouping genes with similar expression profiles and samples with similar transcriptional signatures [7]. The resulting dendrograms visually suggest relationships—for instance, which samples cluster by disease subtype or treatment response, or which genes co-express, implying coregulation or shared function [2]. This visual clustering is the foundational step for generating hypotheses about biological mechanisms, disease biomarkers, or drug effects.

Core Principles and Quantitative Foundations

The construction of an informative heatmap requires deliberate choices at each step, from data preprocessing to color mapping. The quantitative parameters governing these choices directly influence pattern detection and hypothesis generation.

Data Preprocessing and Normalization: Raw gene expression counts must be normalized to correct for technical variability (e.g., sequencing depth) before visualization. Common methods include reads per million (RPM), fragments per kilobase of transcript per million mapped fragments (FPKM), or variance-stabilizing transformations. For heatmap visualization, row-wise Z-score standardization is frequently applied. This centers each gene's expression across samples to a mean of zero and scales it to a standard deviation of one, ensuring the color scale reflects relative expression and preventing genes with universally high expression from dominating the visual field [20].
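Row-wise Z-scoring applies z = (x - mean) / sd to each gene separately. The standard-library Python sketch below assumes the matrix is a list of per-gene rows and uses the sample standard deviation (n - 1 denominator, as R's sd() does); returning zeros for constant genes is an assumption made here to avoid division by zero.

```python
import statistics


def zscore_rows(matrix):
    """Center each gene (row) to mean 0 and scale it to standard deviation 1."""
    scaled = []
    for row in matrix:
        mu = statistics.mean(row)
        sd = statistics.stdev(row)  # sample SD, n - 1 denominator
        # Assumption: a constant gene has no relative pattern, so map it to all zeros.
        scaled.append([(v - mu) / sd if sd > 0 else 0.0 for v in row])
    return scaled


expression = [  # toy values: genes x samples
    [1.0, 2.0, 3.0],     # low-abundance gene
    [10.0, 10.0, 10.0],  # constant gene
    [20.0, 40.0, 60.0],  # high-abundance gene with the same shape as row 1
]
print(zscore_rows(expression))
```

Rows 1 and 3 become identical after scaling: only the relative pattern across samples survives, which is why high-abundance genes no longer dominate the color scale.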

Distance Metrics and Clustering Algorithms: Clustering is central to pattern discovery. The process requires a distance metric to quantify (dis)similarity and a linkage method to define cluster relationships.

- Distance Metrics: Common choices include Euclidean distance (for magnitude differences) and correlation-based distances (1 - Pearson/Spearman correlation), which are sensitive to expression profile shapes [20].

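- Linkage Methods: The linkage method determines how the distance between two clusters is calculated. Average linkage uses the mean pairwise distance between their members and is a common default, while complete linkage uses the maximum pairwise distance and tends to produce tighter, more distinct clusters.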
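The practical difference between the two metric families shows up on toy profiles. In this standard-library Python sketch (invented values, not data from the cited studies), Euclidean distance rates gene_a closer to its mirror image than to a tenfold-scaled copy of itself, while correlation distance groups the two same-shaped profiles together.

```python
import math


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length profiles."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    norm_a = math.sqrt(sum((x - ma) ** 2 for x in a))
    norm_b = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (norm_a * norm_b)


def correlation_distance(a, b):
    """1 - Pearson r: 0 for identical shapes, 2 for perfectly opposite shapes."""
    return 1.0 - pearson(a, b)


gene_a = [1.0, 2.0, 3.0, 4.0]
gene_b = [10.0, 20.0, 30.0, 40.0]  # same shape as gene_a, 10x the magnitude
gene_c = [4.0, 3.0, 2.0, 1.0]      # opposite shape, similar magnitude
print("Euclidean:", math.dist(gene_a, gene_b), math.dist(gene_a, gene_c))
print("1 - r:    ", correlation_distance(gene_a, gene_b), correlation_distance(gene_a, gene_c))
```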

Table 1: Key Parameter Choices for Clustered Heatmap Generation

| Parameter | Common Options | Impact on Hypothesis Generation |
|---|---|---|
| Normalization | Z-score (row-wise), Log2(CPM+1), VST | Z-score highlights sample-specific deviation; log-transform manages skew. |
| Distance Metric | Euclidean, Manhattan, 1-Pearson Correlation | Correlation distance finds co-expressed genes; Euclidean is sensitive to magnitude. |
| Linkage Method | Average, Complete, Ward's | Affects cluster compactness and separation; Ward's minimizes within-cluster variance. |
| Color Palette | Sequential (viridis), Diverging (RdBu, RdYlBu) | Diverging palettes (red-blue) best display up/down-regulation relative to a midpoint [7]. |

Color Palette Selection: The choice of color scheme is not aesthetic but cognitive. A diverging palette (e.g., blue-white-red) is standard for Z-scored expression data, intuitively representing down-regulation (blue), baseline (white), and up-regulation (red) [7]. Ensuring sufficient color contrast is critical for accessibility and accurate interpretation. Following Web Content Accessibility Guidelines (WCAG), a minimum contrast ratio of 3:1 is recommended for graphical objects, which includes heatmap cells and their annotations [25] [26]. This ensures patterns are discernible to all viewers.
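The 3:1 check can be automated with the WCAG 2.x relative-luminance formula. The Python sketch below implements that formula for '#RRGGBB' colors; the three cell colors tested against a white background are illustrative choices, not a palette prescribed by the cited guidelines.

```python
def relative_luminance(hex_color):
    """WCAG 2.x relative luminance of an sRGB color given as '#RRGGBB'."""
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (1, 3, 5)]

    def linearize(c):
        # Undo sRGB gamma encoding (WCAG 2.x uses the 0.03928 threshold).
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linearize(c) for c in channels)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color1, color2):
    """(L_lighter + 0.05) / (L_darker + 0.05); ranges from 1:1 to 21:1."""
    light, dark = sorted((relative_luminance(color1), relative_luminance(color2)), reverse=True)
    return (light + 0.05) / (dark + 0.05)


background = "#FFFFFF"
for cell in ["#B2182B", "#F7F7F7", "#767676"]:  # dark red, near-white midpoint, mid-gray
    ratio = contrast_ratio(cell, background)
    print(cell, round(ratio, 2), "passes 3:1" if ratio >= 3 else "fails 3:1")
```

The near-white midpoint fails against a white page, as expected for a "no change" bin; the check is most useful for confirming that the informative ends of the scale and any annotation colors stay distinguishable.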

Experimental Protocols for Gene Expression Heatmaps

This section provides a detailed, executable protocol for generating and interpreting a clustered heatmap from a typical gene expression dataset, such as differential expression results.

3.1 Data Preparation and Preprocessing Protocol

- Input Data: Start with a normalized expression matrix (e.g., log2(CPM), TPM) for genes of interest (e.g., top 100 differentially expressed genes).

- Subset and Transform: Filter the matrix to include only relevant genes and samples. Apply row-wise Z-score transformation: for each gene, subtract the mean expression across all samples and divide by the standard deviation [20].

- Annotation Data: Prepare a sample annotation data frame (e.g., with columns for Treatment, Disease_Stage, Batch).

3.2 Heatmap Generation Protocol (Using R/pheatmap)

The following code block outlines a standardized protocol using the robust pheatmap package in R [20].

3.3 Interpretation and Hypothesis Extraction Protocol

After the heatmap is generated, analyze it systematically:

- Examine Sample Clusters (Column Dendrogram): Identify groups of samples that cluster together. Correlate these clusters with annotation variables (e.g., do all "Treated" samples form one cluster? Does a cluster correspond to a specific disease subtype?). A clear partition forms a hypothesis about sample classification.

- Examine Gene Clusters (Row Dendrogram): Identify blocks of genes with similar expression patterns across samples. These gene modules are hypotheses for co-regulated biological pathways. Export genes from a cluster of interest for downstream functional enrichment analysis (e.g., using Gene Ontology) [2].

- Identify Outliers: Note samples or genes that do not cluster as expected. A sample grouping with the wrong phenotype may indicate mislabeling or a novel biological subgroup. A single gene with a unique pattern may be a key regulator.
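Cutting the row dendrogram into gene modules for export can be sketched with a naive agglomerative clustering. This Python sketch assumes average linkage on 1 - Pearson r (one of the options in Table 1), uses a merge loop that is only practical for small gene lists, and runs on invented profiles. Stopping the merges when n_modules clusters remain yields the same partition as cutting the finished tree into that many clusters.

```python
import math


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length profiles."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    return cov / math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))


def gene_modules(profiles, n_modules):
    """Average-linkage agglomerative clustering on 1 - Pearson r, stopped at n_modules."""
    genes = list(profiles)
    dist = {(g, h): 1.0 - pearson(profiles[g], profiles[h])
            for g in genes for h in genes if g != h}

    def average_link(c1, c2):
        # Mean pairwise distance between members of two clusters.
        return sum(dist[(g, h)] for g in c1 for h in c2) / (len(c1) * len(c2))

    clusters = [{g} for g in genes]
    while len(clusters) > n_modules:
        pairs = [(i, j) for i in range(len(clusters)) for j in range(i + 1, len(clusters))]
        i, j = min(pairs, key=lambda ij: average_link(clusters[ij[0]], clusters[ij[1]]))
        merged = clusters[i] | clusters[j]
        clusters = [c for idx, c in enumerate(clusters) if idx not in (i, j)] + [merged]
    return clusters


profiles = {  # hypothetical: 6 samples, first three "treated", last three "control"
    "G1": [5, 6, 5, 1, 1, 2],
    "G2": [8, 9, 8, 2, 1, 2],
    "G3": [1, 2, 1, 6, 7, 6],
    "G4": [2, 1, 2, 9, 8, 9],
}
for module in gene_modules(profiles, 2):
    print(sorted(module))  # each printed list could be exported for enrichment analysis
```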

From Visualization to Hypothesis: A Logical Workflow

The path from a completed heatmap to a formal biological hypothesis follows a defined logic. The visual patterns suggest relationships, which must be translated into testable statements.

Diagram: Logical workflow from heatmap generation to testable hypothesis.

Example Hypothesis Generation:

- Pattern: A distinct block of 15 genes shows consistent up-regulation in a cluster of 8 samples.

- Annotation Check: The 8-sample cluster corresponds perfectly to "Treatment Group B" and "Clinical Responders."

- Hypothesis: "The gene module defined by [list of 15 genes] is upregulated in response to Treatment B and mediates its therapeutic efficacy."

- Next-Step Validation: Perform pathway enrichment analysis on the 15-gene list (e.g., using Enrichr or GSEA) [2] and design experiments to inhibit key genes in the module to test for loss of treatment efficacy.
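The over-representation part of that validation step can be sketched as a one-sided hypergeometric test, the statistic many ORA tools report (GSEA instead uses a rank-based enrichment score, so this is not its method). Assumptions: the gene universe is all genes measured, and the example numbers (a 15-gene module sharing 6 genes with a 100-gene pathway in a 20,000-gene universe) are hypothetical.

```python
from math import comb


def ora_pvalue(overlap, module_size, pathway_size, universe_size):
    """P(X >= overlap) for X ~ Hypergeometric(universe_size, pathway_size, module_size)."""
    upper = min(module_size, pathway_size)
    hits = sum(
        comb(pathway_size, i) * comb(universe_size - pathway_size, module_size - i)
        for i in range(overlap, upper + 1)
    )
    return hits / comb(universe_size, module_size)


# Hypothetical numbers for the 15-gene module in the example above.
p = ora_pvalue(overlap=6, module_size=15, pathway_size=100, universe_size=20000)
expected = 15 * 100 / 20000  # overlap expected by chance = 0.075 genes
print(f"expected overlap {expected:.3f}, observed 6, p = {p:.2e}")
```

When many modules and pathways are tested, these p-values still need multiple-testing correction (e.g., Benjamini-Hochberg).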

The Scientist's Toolkit: Essential Research Reagents & Solutions

Beyond software, effective hypothesis generation from heatmaps relies on a suite of analytical "reagents." The following table catalogs essential tools and their functions in the experimental workflow.

Table 2: Research Reagent Solutions for Heatmap-Based Analysis

| Category | Reagent/Tool | Function in Hypothesis Generation | Example/Note |
|---|---|---|---|
| Clustering & Distance | Hierarchical Clustering | Groups similar genes/samples to reveal patterns [20]. | Foundation of dendrogram generation. |
| | Euclidean Distance Metric | Measures absolute expression differences. | Sensitive to magnitude; good for sample clustering. |
| | Correlation Distance Metric | Measures shape similarity of expression profiles [20]. | Ideal for finding co-expressed genes (gene module discovery). |
| Statistical Validation | Gene Set Enrichment Analysis (GSEA) | Tests if a priori defined gene sets are enriched in expression ranks [2]. | Validates if gene clusters correspond to known pathways. |
| | Over-Representation Analysis (ORA) | Tests if genes in a cluster are overrepresented in functional terms [2]. | Common for analyzing differentially expressed gene lists from clusters. |
| Visualization & Annotation | Diverging Color Palette (RdBu) | Encodes relative expression (up/down) intuitively [7]. | Critical for accurate pattern perception. |
| | Sample Annotation Bar | Visually maps metadata (phenotype, batch) to sample clusters. | Essential for correlating patterns with biological variables. |
| | Interactive Heatmap Library (heatmaply) | Allows mouse-over inspection of exact values in dense matrices [20]. | Facilitates detailed exploration and outlier identification. |
| Downstream Analysis | Pathway Databases (KEGG, Reactome) | Provides biological context for gene clusters [2]. | Translates gene lists into mechanistic hypotheses. |
| | Network Analysis Tools (Cytoscape) | Models interactions between genes from different clusters [2]. | Generates hypotheses about key regulatory hubs. |

Case Study: Hypothesis Generation in a Drug Treatment Study

Consider a published RNA-seq study investigating a novel oncology drug (Drug X) versus control in cell lines [20]. A clustered heatmap of the top 500 differentially expressed genes reveals:

- Sample-Level Clustering: Two primary sample clusters separate Drug X-treated from control samples, with a single exception: one treated sample (Sample T05) clusters with the controls.

- Gene-Level Clustering: A prominent gene module (Module A) of ~50 genes shows strong up-regulation in the Drug X cluster, except in Sample T05.

- Hypotheses Generated:

- Primary Efficacy Hypothesis: Module A genes represent the transcriptional response signature to Drug X.

- Resistance Hypothesis: Sample T05 may be intrinsically resistant to Drug X, as suggested by its failure to upregulate Module A. This hypothesizes a potential resistance mechanism.

- Validation Pathway:

- Enrichment analysis on Module A reveals significant overrepresentation of "p53 signaling pathway" and "apoptosis execution phase" [2], supporting the drug's putative mechanism.

- In vitro validation shows that knocking down a key Module A gene (e.g., BAX) attenuates Drug X-induced cell death, supporting a functional role for the module.

Diagram: Case study of hypothesis flow from heatmap patterns to experimental conclusion.

Advanced Applications and Future Directions

Heatmaps are evolving beyond static representations. Interactive heatmaps (e.g., via heatmaply in R) allow researchers to probe individual data points, facilitating deeper exploration [20]. Integration with other visual analytics is powerful: for instance, overlaying heatmap-derived gene clusters onto protein-protein interaction networks can identify hub genes that are central to a response signature, offering more focused therapeutic hypotheses [2].

The future lies in multi-omic heatmaps, where concatenated or parallel heatmaps display matched transcriptomic, proteomic, and epigenomic data from the same samples. Discrepancies between layers, such as a gene cluster upregulated at the mRNA but not the protein level, can generate sophisticated hypotheses about post-transcriptional regulation. In drug development, this approach can help clarify mechanisms of action, identify patient stratification biomarkers, and suggest combinatorial therapy targets.

Advanced Heatmap Applications: From Single-Cell Analysis to Multi-Omics Integration

This guide provides an in-depth technical comparison of heatmap tools for exploratory gene expression analysis. Within a research thesis, heatmaps are a cornerstone visualization technique for identifying patterns, clusters, and biological signatures in high-dimensional genomic data [1] [2].

Comparative Analysis of Core Heatmap Tools

The selection of a heatmap tool depends on the complexity of the biological question and the required level of customization. The following table summarizes the core technical specifications and best-use applications for two leading R packages.

Table: Technical Comparison of ComplexHeatmap and pheatmap R Packages

| Feature | ComplexHeatmap | pheatmap | Primary Research Use Case |
|---|---|---|---|
| Primary Strength | Highly customizable, multi-panel integration [5] [27] | User-friendly, quick publication-ready plots [28] [29] | ComplexHeatmap: Multi-omics integration, complex annotations. pheatmap: Standard differential expression visualization. |
| Annotation System | Flexible, supports row, column, and multiple annotation blocks [5]. | Straightforward, uses data.frame for row/column annotations [28] [29]. | Adding sample metadata (e.g., disease state) or gene attributes (e.g., pathway membership). |
| Clustering Control | Extensive control over distance metrics, methods, and rendering [5]. | Standard hierarchical clustering with basic parameters [28]. | Identifying co-expressed gene modules or sample subgroups. |
| Color Mapping | Robust via circlize::colorRamp2(); handles outliers and ensures comparability [5]. | Simple vector of colors or built-in palettes (e.g., RColorBrewer) [28]. | Accurately representing log2 fold-change values or Z-scores. |
| Output & Integration | Heatmap object integrated with grid graphics; can combine multiple plots [5] [30]. | Returns a list containing plot components; easier for single heatmaps [29] [31]. | ComplexHeatmap: Creating unified figures. pheatmap: Exporting a single heatmap image. |

Detailed Experimental Protocols

Protocol for Creating an Annotated Clustered Heatmap with pheatmap

This protocol is optimal for visualizing clustered gene expression patterns with sample metadata [28] [29].

Data Preparation and Normalization: Begin with a numeric matrix (genes as rows, samples as columns). Normalize data, often by row (gene) Z-score scaling to highlight relative expression patterns across samples [29] [31].

Create Annotation Data Frames: Prepare separate data.frame objects for row (gene) and column (sample) annotations. Their row names must match the matrix row names (for gene annotations) or column names (for sample annotations) [28].

Generate the Heatmap: Execute pheatmap() with key arguments for clustering and annotation [28].

Protocol for Advanced Multi-Heatmap Figures with ComplexHeatmap

This protocol enables the creation of linked, multi-panel visualizations for complex data stories [5] [27].

Define Consistent Color Mapping: Use colorRamp2() for a stable, outlier-resistant mapping of data values to colors [5].

Build Individual Heatmap and Annotation Objects: Construct each component (main heatmap, side annotations) as a separate object [5].

Assemble and Draw the Complex Plot: Combine objects using the + operator or ht_list, and render with draw() [5] [30].
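The idea behind colorRamp2(), mapping fixed breakpoints to fixed colors so that outliers saturate at the end colors instead of stretching the scale, can be sketched in Python. This is a simplified analogue, not circlize's implementation: it interpolates linearly in RGB, whereas colorRamp2() can interpolate in other color spaces, and the breakpoints and colors below are illustrative choices.

```python
def color_ramp(breaks, colors):
    """Return f(value) -> '#RRGGBB', interpolating linearly in RGB between breakpoints
    and clamping values outside [breaks[0], breaks[-1]] to the end colors."""
    rgb = [tuple(int(c[i:i + 2], 16) for i in (1, 3, 5)) for c in colors]

    def to_hex(triple):
        return "#{:02X}{:02X}{:02X}".format(*triple)

    def mapper(value):
        if value <= breaks[0]:
            return to_hex(rgb[0])   # low outliers saturate at the first color
        if value >= breaks[-1]:
            return to_hex(rgb[-1])  # high outliers saturate at the last color
        for k in range(len(breaks) - 1):
            lo, hi = breaks[k], breaks[k + 1]
            if lo <= value <= hi:
                t = (value - lo) / (hi - lo)
                return to_hex(tuple(round(a + t * (b - a)) for a, b in zip(rgb[k], rgb[k + 1])))
        raise ValueError("breaks must be sorted in increasing order")

    return mapper


# Illustrative diverging map for Z-scores: blue below 0, white at 0, red above; |z| > 2 saturates.
z_color = color_ramp([-2, 0, 2], ["#0000FF", "#FFFFFF", "#FF0000"])
for z in (-10, -2, 0, 1, 2, 10):
    print(z, z_color(z))
```

Because the breakpoints are fixed, every heatmap drawn with the same mapper assigns the same color to the same value, which is what keeps side-by-side panels comparable.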

Visualization of Analysis Workflow and Biological Integration

The following diagrams outline the standard analytical workflow for heatmap-based gene expression analysis and how heatmap-derived clusters feed into biological interpretation.

Diagram: Heatmap Analysis Workflow in Gene Expression Studies.

Diagram: From Heatmap Clusters to Biological Pathways.

This table details the essential computational "reagents" required to execute heatmap analysis in gene expression research.

Table: Essential Research Reagents for Heatmap Analysis

| Tool/Resource | Function in Analysis | Example/Format |
|---|---|---|
| Normalized Expression Matrix | The primary input data. Rows are genes, columns are samples. Values are normalized counts (e.g., TPM for RNA-seq) or log2 fold-changes. | Numeric matrix or data.frame in R. |
| Annotation Data Frames | Provides metadata for annotating rows (genes) and columns (samples) to add biological context [28] [29]. | R data.frame where row names match the expression matrix. |
| Color Mapping Function | Precisely maps numeric values to a color scale for consistent, interpretable visualization [5]. | circlize::colorRamp2() function in R. |
| Clustering Distance Metric | Defines how similarity between genes or samples is calculated for hierarchical clustering. | "euclidean", "correlation", or "manhattan". |
| Functional Annotation Database | Provides gene sets for interpreting clusters via enrichment analysis (post-heatmap) [2]. | MSigDB, Gene Ontology (GO), KEGG pathways. |
| R Color Palette Package | Supplies perceptually suitable color schemes for annotations and data representation. | RColorBrewer or viridis packages. |
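The distance metric listed in the table decides which genes end up next to each other. The plain-Python sketch below compares the three listed metrics on two genes with the same up/down pattern but a tenfold difference in magnitude: Euclidean and Manhattan distances are dominated by the magnitude gap, while correlation distance (1 minus Pearson r, the form pheatmap uses for "correlation") treats the two profiles as identical. In pheatmap these names are passed through clustering_distance_rows and clustering_distance_cols; the Python functions here are illustrative only.

```python
from math import sqrt


def euclidean(x, y):
    return sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def manhattan(x, y):
    return sum(abs(a - b) for a, b in zip(x, y))


def correlation_distance(x, y):
    """1 - Pearson correlation: 0 for identical shapes, 2 for mirror images."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    ssx = sum((a - mx) ** 2 for a in x)
    ssy = sum((b - my) ** 2 for b in y)
    if ssx == 0 or ssy == 0:
        raise ValueError("correlation is undefined for a constant profile")
    return 1 - cov / sqrt(ssx * ssy)


gene_a = [1.0, 2.0, 3.0]     # rises across samples
gene_b = [10.0, 20.0, 30.0]  # same shape, ten times higher
gene_c = [3.0, 2.0, 1.0]     # mirror image of gene_a

print(euclidean(gene_a, gene_b))             # about 33.67
print(manhattan(gene_a, gene_b))             # 54.0
print(correlation_distance(gene_a, gene_b))  # 0.0
print(correlation_distance(gene_a, gene_c))  # 2.0
```

If rows are Z-scored before clustering, Euclidean distance becomes much closer in behavior to correlation distance, since the magnitude differences have already been removed.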

1. Introduction: The Central Role of Heatmaps in Exploratory Single-Cell Analysis

Within the broader thesis on exploratory gene expression analysis, heatmaps are not merely a visualization output but a fundamental hypothesis-generation engine. In single-cell RNA sequencing (scRNA-seq), where datasets routinely profile tens of thousands of genes across hundreds of thousands of cells, the core challenge is to reduce immense dimensionality into an interpretable format without losing biological signal [32]. Heatmaps directly address this by performing a dual function: they are both a summarizing tool, collapsing cellular heterogeneity into structured patterns of gene-cluster relationships, and a discovery tool, revealing gradients, outliers, and co-expression modules that guide subsequent analysis [33]. This guide frames heatmap generation as the critical juncture where quantitative computational processing meets qualitative biological interpretation, enabling researchers to transition from observing cell clusters to understanding the driving transcriptional programs behind them.

2. Technical Foundations: From Count Matrix to Interpretable Visualization

The journey to a biologically insightful heatmap begins with a high-dimensional count matrix whose typical dimensions (e.g., 20,000 genes × 10,000 cells) come with extreme sparsity (often >90% zero values) [32]. The foundational computational step is dimensionality reduction, which must balance the preservation of global and local data structure [32]. As shown in Table 1, the choice of reduction method, made before the heatmap is created, has profound implications for the integrity of the visualized patterns.

Table 1: Dimensionality Reduction Methods: Impact on Downstream Heatmap Integrity

| Method | Core Principle | Preservation Focus | Key Consideration for Heatmaps | Typical Runtime for 10k Cells |
|---|---|---|---|---|
| PCA (Principal Component Analysis) [34] | Linear projection to orthogonal axes of maximum variance. | Global structure. | Provides stable, reproducible input for clustering; less sensitive to noise. | ~1-2 minutes (CPU) |
| UMAP (Uniform Manifold Approximation and Projection) [32] | Non-linear manifold learning based on nearest neighbor graphs. | Local structure, with configurable global layout. | Can create visually distinct clusters; distances between clusters are not quantitatively meaningful. | ~5-10 minutes (CPU) |
| Deep Visualization (DV) - Euclidean [32] | Deep neural network trained to preserve a learned structural graph. | Balanced global & local structure. | Aims to minimize distortion; requires training but can correct batch effects end-to-end. | ~15-30 minutes (GPU recommended) |
| PHATE [32] | Diffusion geometry to capture transitional structures. | Trajectory and progression structure. | Ideal for heatmaps displaying continuous differentiation rather than discrete types. | ~10-15 minutes (CPU) |

Following reduction, cell clustering partitions the data. The resolution of this clustering (e.g., k in k-means, resolution in graph-based methods) is the single most important parameter determining a heatmap's content [35]. A low resolution yields broad, averaged expression patterns, while a high resolution may isolate rare populations but increase noise. The gap statistic or Gini impurity index can be used to quantitatively assess clustering robustness and purity, ensuring the clusters mapped onto the heatmap are statistically sound [35].
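The text does not spell out how the Gini impurity index is computed. One common formulation, sketched below under the assumption that each cell also carries a reference label (for example, an annotation from known marker genes), scores each cluster as 1 minus the sum of squared label proportions and averages the clusters weighted by size. A value of 0 means every cluster contains a single reference label; larger values flag clusters that mix populations. The function names are hypothetical.

```python
from collections import Counter


def gini_impurity(labels):
    """1 - sum(p_k^2) over the label proportions p_k within one group."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def weighted_cluster_impurity(cluster_ids, reference_labels):
    """Size-weighted mean Gini impurity of clusters against reference labels."""
    if len(cluster_ids) != len(reference_labels):
        raise ValueError("one reference label is needed per cell")
    members = {}
    for cluster, label in zip(cluster_ids, reference_labels):
        members.setdefault(cluster, []).append(label)
    n = len(cluster_ids)
    return sum(len(labs) / n * gini_impurity(labs) for labs in members.values())


clusters = [0, 0, 0, 0, 1, 1, 1, 1]
labels = ["T", "T", "T", "T", "B", "B", "B", "Mono"]
print(weighted_cluster_impurity(clusters, labels))  # 0.1875
```

Impurity cannot penalize over-splitting: breaking a pure cluster in two leaves the score unchanged, so it is best read alongside a robustness measure such as the gap statistic.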

3. Data Processing and Quality Control Protocols

A reliable heatmap is built on rigorously processed data. The following protocol outlines critical steps from raw data to a normalized matrix ready for visualization, synthesizing best practices from major pipelines [36].

Protocol 3.1: Pre-Heatmap Data Processing and QC Workflow

Input: Raw feature-barcode count matrices (e.g., from Cell Ranger) [36]. Tools: Seurat (R) or Scanpy (Python) environments [37].

Initial QC Filtering:

- Filter cells with an unusually low number of detected genes (<200-500) or high mitochondrial gene fraction (e.g., >10-20%), indicative of broken cells [33] [36]. For the example PBMC data in [36], a threshold of 10% mitochondrial reads was used.

- Filter genes detected in fewer than a minimal number of cells (e.g., 3 cells).
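The two filters above can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the Seurat or Scanpy implementation: counts are a cells x genes list of lists, mitochondrial genes are recognized by an "MT-" name prefix (the human convention; the mouse "mt-" prefix is caught by case-insensitive matching), cells are filtered before genes, and the function name qc_filter and its defaults are hypothetical.

```python
def qc_filter(counts, gene_names, min_genes=200, max_mito_frac=0.10,
              min_cells=3, mito_prefix="MT-"):
    """Return (kept cell indices, kept gene indices) for a cells x genes count matrix."""
    mito_idx = [j for j, g in enumerate(gene_names)
                if g.upper().startswith(mito_prefix.upper())]

    keep_cells = []
    for i, cell in enumerate(counts):
        total = sum(cell)
        n_genes = sum(1 for c in cell if c > 0)
        # Treat empty barcodes as 100% mitochondrial so they are always removed.
        mito_frac = sum(cell[j] for j in mito_idx) / total if total else 1.0
        if n_genes >= min_genes and mito_frac <= max_mito_frac:
            keep_cells.append(i)

    # Gene filter: detected (count > 0) in at least min_cells of the retained cells.
    keep_genes = [j for j in range(len(gene_names))
                  if sum(1 for i in keep_cells if counts[i][j] > 0) >= min_cells]
    return keep_cells, keep_genes


genes = ["CD3E", "MS4A1", "LYZ", "MT-CO1"]
counts = [
    [5, 0, 3, 1],  # 3 genes, ~11% mitochondrial: kept
    [0, 0, 0, 4],  # 1 gene only: removed
    [2, 4, 0, 0],  # 2 genes, 0% mitochondrial: kept
    [1, 1, 1, 7],  # 70% mitochondrial: removed
]
# Thresholds shrunk to fit the toy matrix.
print(qc_filter(counts, genes, min_genes=2, max_mito_frac=0.2, min_cells=2))
# ([0, 2], [0])
```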

Normalization & Scaling:

- Normalize: Adjust raw counts for sequencing depth per cell (e.g., using log(1+CP10K) in Scanpy or LogNormalize in Seurat).

- Scale: Regress out unwanted sources of variation (e.g., mitochondrial percentage, cell cycle score) and scale gene expression to a mean of 0 and variance of 1 across cells. This ensures technical artifacts do not dominate heatmap patterns.
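The normalization and regression steps can be illustrated with the plain-Python sketch below. log_normalize follows the log(1+CP10K) recipe (counts divided by the cell total, multiplied by 10,000, then natural log1p), which is also how Seurat documents LogNormalize. regress_out shows the idea behind removing a covariate such as mitochondrial percentage from one gene: keep the residuals of an ordinary least-squares fit. Real implementations (for example Seurat's ScaleData(vars.to.regress = ...) or Scanpy's sc.pp.regress_out) fit several covariates at once. Both function names are hypothetical, and the residuals would still be scaled to mean 0 and variance 1 afterwards.

```python
from math import log1p


def log_normalize(counts, scale_factor=10_000):
    """log(1 + CP10K) per cell for a cells x genes matrix of raw counts."""
    normalized = []
    for cell in counts:
        total = sum(cell)
        if total == 0:
            normalized.append([0.0] * len(cell))
        else:
            normalized.append([log1p(c / total * scale_factor) for c in cell])
    return normalized


def regress_out(values, covariate):
    """Residuals of one gene's values after an OLS fit on a single covariate."""
    n = len(values)
    mx, my = sum(covariate) / n, sum(values) / n
    sxx = sum((x - mx) ** 2 for x in covariate)
    if sxx == 0:  # constant covariate: only the mean can be removed
        return [y - my for y in values]
    slope = sum((x - mx) * (y - my) for x, y in zip(covariate, values)) / sxx
    intercept = my - slope * mx
    return [y - (intercept + slope * x) for x, y in zip(covariate, values)]


norm = log_normalize([[1, 1], [3, 1]])
print([round(v, 3) for v in norm[1]])  # [8.923, 7.824]

# A gene whose expression simply tracks mitochondrial percentage.
expression = [1.0, 3.0, 5.0]
percent_mito = [0.0, 1.0, 2.0]
print(regress_out(expression, percent_mito))  # [0.0, 0.0, 0.0]
```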

Feature Selection:

- Identify Highly Variable Genes (HVGs), typically 2,000-5,000 genes that show the highest cell-to-cell variability relative to their mean expression. These genes form the feature set for dimensionality reduction and are the primary candidates for heatmap visualization [34].
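As a rough illustration of HVG selection, the sketch below ranks genes by dispersion (variance divided by mean) of their log-normalized values and keeps the top n. This is a deliberate simplification: the procedures behind Seurat's FindVariableFeatures() and Scanpy's sc.pp.highly_variable_genes additionally correct for the dependence of variance on mean expression, so their rankings will differ. The function name and the genes-as-rows input layout are assumptions.

```python
from statistics import mean, pvariance


def top_variable_genes(expr_by_gene, gene_names, n_top=2000):
    """Return the n_top gene names with the highest variance-to-mean ratio."""
    scored = []
    for name, values in zip(gene_names, expr_by_gene):
        mu = mean(values)
        dispersion = pvariance(values, mu) / mu if mu > 0 else 0.0
        scored.append((dispersion, name))
    # sort is stable, so tied genes keep their input order
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [name for _, name in scored[:n_top]]


expr = [
    [1.0, 1.0, 1.0, 1.0],  # G1: flat, dispersion 0
    [0.0, 4.0, 0.0, 4.0],  # G2: on/off pattern, dispersion 2.0
    [1.0, 2.0, 1.0, 2.0],  # G3: mild variation, dispersion about 0.17
]
print(top_variable_genes(expr, ["G1", "G2", "G3"], n_top=2))  # ['G2', 'G3']
```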

Batch Effect Correction (if needed):

Dimensionality Reduction & Clustering:

- Perform PCA on the scaled HVG matrix.

- Construct a nearest-neighbor graph and cluster cells using algorithms like Louvain or Leiden [35].

- Generate 2D embeddings (UMAP, t-SNE) for cluster visualization.

Differential Expression & Marker Gene Identification:

- For each cluster, identify marker genes statistically over-expressed compared to all other cells using tests like the Wilcoxon rank-sum test.

- The top N marker genes per cluster (e.g., top 10) become the canonical gene list for a diagnostic cluster heatmap.
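The marker-gene step can be sketched with the Wilcoxon rank-sum (Mann-Whitney U) statistic itself. For each cluster, the code below compares every gene's values in that cluster against all other cells and ranks genes by U / (n1 × n2), the probability that a random in-cluster cell exceeds a random out-of-cluster cell (ties count half). This is an illustrative simplification: tools such as Seurat's FindAllMarkers() and Scanpy's sc.tl.rank_genes_groups(method="wilcoxon") also compute p-values, multiple-testing corrections, and fold-change filters that are omitted here. Function names are hypothetical.

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def rank_sum_auc(in_group, out_group):
    """Mann-Whitney U of in_group, divided by n1 * n2."""
    ranks = average_ranks(in_group + out_group)
    n1, n2 = len(in_group), len(out_group)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    return u1 / (n1 * n2)


def top_markers(expr_by_gene, gene_names, clusters, n_top=10):
    """Top n_top genes per cluster, ranked by rank-sum AUC versus all other cells."""
    markers = {}
    for cl in sorted(set(clusters)):
        scored = []
        for name, values in zip(gene_names, expr_by_gene):
            inside = [v for v, c in zip(values, clusters) if c == cl]
            outside = [v for v, c in zip(values, clusters) if c != cl]
            scored.append((rank_sum_auc(inside, outside), name))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        markers[cl] = [name for _, name in scored[:n_top]]
    return markers


clusters = ["A", "A", "B", "B"]
expr = [
    [5.0, 4.0, 0.0, 1.0],  # G1: high in A
    [0.0, 1.0, 3.0, 5.0],  # G2: high in B
    [2.0, 2.0, 2.0, 2.0],  # G3: uninformative
]
print(top_markers(expr, ["G1", "G2", "G3"], clusters, n_top=1))
# {'A': ['G1'], 'B': ['G2']}
```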

Output: A normalized expression matrix, cluster labels, and a ranked list of marker genes, serving as direct input for heatmap generation.

4. The Visualization Engine: Encoding, Layout, and Color Optimization

The visual design of the heatmap is a critical analytical step. A standard workflow for creating an annotated heatmap from processed data is shown below, integrating clustering, annotation, and rendering.

Diagram: Standard Workflow for Generating an Annotated Single-Cell Heatmap. The process integrates biological data (genes, clusters) with visual encoding (ordering, color).

- Gene and Cell Ordering: The default layout orders cells by cluster membership and genes by their pattern of differential expression across these clusters. More advanced ordering can follow pseudotime inferred from trajectory analysis, creating a heatmap that visualizes dynamic gene expression along a biological process [32].

- Color Optimization: The color palette is a non-trivial parameter. A common issue in cluster visualization is assigning visually similar colors to spatially adjacent clusters, obscuring boundaries [38]. Tools like Palo algorithmically optimize palette assignment by calculating a spatial overlap score (Jaccard index) between clusters and maximizing color dissimilarity (RGB distance) for neighboring pairs [38]. For the heatmap body itself, a diverging color scheme (e.g., blue-white-red) is standard for normalized z-score expression, with the midpoint anchored at zero.
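The default layout described above can be sketched as a small ordering routine: cells are grouped by cluster (keeping their original order within each cluster), and each gene is placed in the block of the cluster where its mean expression peaks, strongest genes first, which produces the familiar block-diagonal pattern. The function heatmap_order is hypothetical and assumes genes as rows; pseudotime ordering would replace the cluster sort key with each cell's pseudotime value.

```python
def heatmap_order(expr_by_gene, gene_names, clusters):
    """Return (cell column order, gene row order) for a cluster-sorted heatmap."""
    levels = sorted(set(clusters))
    level_rank = {cl: k for k, cl in enumerate(levels)}
    # sorted() is stable, so cells keep their input order within a cluster.
    cell_order = sorted(range(len(clusters)), key=lambda i: level_rank[clusters[i]])

    def peak_key(values):
        means = []
        for cl in levels:
            in_cluster = [v for v, c in zip(values, clusters) if c == cl]
            means.append(sum(in_cluster) / len(in_cluster))
        best = max(range(len(levels)), key=lambda k: means[k])
        # Group by peak cluster, then put the strongest genes first in each block.
        return best, -means[best]

    gene_order = sorted(range(len(gene_names)), key=lambda g: peak_key(expr_by_gene[g]))
    return cell_order, [gene_names[g] for g in gene_order]


clusters = ["B", "A", "B", "A"]
expr = [
    [5, 0, 4, 1],  # G1: high in B
    [0, 3, 1, 5],  # G2: high in A
    [1, 6, 0, 6],  # G3: higher still in A
]
print(heatmap_order(expr, ["G1", "G2", "G3"], clusters))
# ([1, 3, 0, 2], ['G3', 'G2', 'G1'])
```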

Table 2: Critical Color Parameters for Single-Cell Heatmaps

| Parameter | Purpose | Best Practice / Tool | Impact on Interpretation |
|---|---|---|---|
| Cluster Annotation Palette | Distinguishes cell groups in side annotations. | Use Palo [38] to maximize contrast between adjacent clusters, or a discrete palette such as Seurat's DiscretePalette [39] with well-separated colors. | Prevents misreading of cluster identity and boundaries. |
| Expression Gradient Palette | Encodes gene expression level. | Use perceptually uniform sequential (viridis) or diverging (RdBu) scales. Avoid rainbow scales. | Ensures accurate perception of expression differences and patterns. |
| Data Scaling for Color | Maps expression values to colors. | Center and scale per gene (z-scores) to highlight relative up/down-regulation. | Reveals which genes are drivers of each cluster's identity. |
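The palette-optimization idea attributed to Palo (score how much clusters overlap, then give the most dissimilar colors to the most overlapping pairs) can be illustrated with the brute-force sketch below. It is not Palo's implementation: here overlap is the Jaccard index of the grid bins each cluster occupies in a 2-D embedding, color dissimilarity is plain Euclidean RGB distance, and every permutation of the palette is tried, which is only feasible for a handful of clusters. All names and the bin size are assumptions.

```python
from itertools import combinations, permutations
from math import dist


def occupied_bins(points, bin_size):
    """Grid cells of side bin_size touched by a cluster's 2-D coordinates."""
    return {(int(x // bin_size), int(y // bin_size)) for x, y in points}


def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def assign_palette(cluster_coords, palette, bin_size=1.0):
    """Map each cluster to an RGB color, maximizing overlap-weighted color distance."""
    names = sorted(cluster_coords)
    if len(palette) < len(names):
        raise ValueError("palette has fewer colors than clusters")
    bins = {n: occupied_bins(cluster_coords[n], bin_size) for n in names}
    overlap = {(a, b): jaccard(bins[a], bins[b]) for a, b in combinations(names, 2)}

    best, best_score = None, float("-inf")
    for colors in permutations(palette, len(names)):  # O(k!) candidates
        chosen = dict(zip(names, colors))
        score = sum(w * dist(chosen[a], chosen[b]) for (a, b), w in overlap.items())
        if score > best_score:
            best, best_score = chosen, score
    return best


coords = {
    "A": [(0.2, 0.2), (0.8, 0.5), (1.5, 0.5)],  # shares a bin with B
    "B": [(1.2, 0.3), (1.8, 0.9), (2.5, 0.5)],
    "C": [(10.0, 10.0), (10.5, 10.2)],          # far from both
}
palette = [(255, 0, 0), (200, 0, 0), (0, 0, 255)]  # red, dark red, blue
print(assign_palette(coords, palette))
# {'A': (255, 0, 0), 'B': (0, 0, 255), 'C': (200, 0, 0)}
```

The two similar reds end up on clusters that never touch, while the overlapping pair A and B receives red versus blue.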

5. Advanced Analytical Applications

Heatmaps evolve from descriptive summaries to analytical instruments when used to investigate specific biological questions.

- Differential Expression Analysis: The standard method for identifying marker genes involves a statistical test between groups, the results of which are often visualized as a heatmap. The associated workflow from statistical testing to visualization is shown below.

Diagram: Differential Expression to Heatmap Workflow. A standard pipeline involves testing, filtering results, and visualizing top genes. Advanced methods like NBGMM account for complex experimental designs.

- Temporal & Spatial Dynamics: For time-course or development data, cells ordered by pseudotime (rather than cluster) can be plotted in a heatmap to reveal gene modules with coherent temporal dynamics [32]. In spatial transcriptomics, heatmaps can display gene expression superimposed on tissue region annotations, directly linking heterogeneity to location.

- Overcoming Scalability Limits: For datasets exceeding 1 million cells, traditional cell-by-gene heatmaps become impractical. Aggregated heatmaps, which display average expression per cluster, are a standard solution. Innovative methods like scBubbletree offer an alternative by visualizing clusters as "bubbles" on a dendrogram, with bubble size and color encoding summary statistics (e.g., mean expression, cell count), effectively creating a scalable, quantitative summary "heatmap" [35].
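The aggregated alternative reduces a genes x cells matrix to genes x clusters. The sketch below computes, for each gene and cluster, the mean expression and the fraction of cells with non-zero expression, the two summaries that dot-plot displays (such as Seurat's DotPlot() or Scanpy's sc.pl.dotplot) encode as color and size. It holds the whole matrix in memory for clarity; at the million-cell scale a real pipeline would accumulate these sums in a single streaming pass over sparse storage. The function name is hypothetical.

```python
def aggregate_by_cluster(expr_by_gene, gene_names, clusters):
    """Return {gene: {cluster: (mean expression, fraction of cells expressing)}}."""
    levels = sorted(set(clusters))
    summary = {}
    for name, values in zip(gene_names, expr_by_gene):
        per_cluster = {}
        for cl in levels:
            vals = [v for v, c in zip(values, clusters) if c == cl]
            mean_expr = sum(vals) / len(vals)
            frac_expressing = sum(1 for v in vals if v > 0) / len(vals)
            per_cluster[cl] = (mean_expr, frac_expressing)
        summary[name] = per_cluster
    return summary


clusters = ["A", "A", "B", "B", "B"]
expr = [
    [2.0, 0.0, 3.0, 3.0, 0.0],  # G1
    [0.0, 0.0, 1.0, 2.0, 3.0],  # G2
]
for gene, row in aggregate_by_cluster(expr, ["G1", "G2"], clusters).items():
    print(gene, row)
# G1 {'A': (1.0, 0.5), 'B': (2.0, 0.6666666666666666)}
# G2 {'A': (0.0, 0.0), 'B': (2.0, 1.0)}
```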

6. Implementation: The Scientist's Toolkit

Translating theory into practice requires a coherent toolkit of software and reagents.

Table 3: Research Reagent & Computational Toolkit for scRNA-seq Heatmap Analysis

| Item / Tool Name | Category | Primary Function | Role in Heatmap Generation |
|---|---|---|---|
| Chromium Single Cell 3' Reagent Kits (10x Genomics) [36] | Wet-lab Reagent | Generate barcoded single-cell libraries for sequencing. | Produces the raw count matrix that forms the foundational data for all analysis, including heatmaps. |
| Cell Ranger [37] [36] | Primary Analysis Software | Processes raw FASTQ files to generate filtered gene-barcode matrices and perform initial clustering. | Creates the essential input file (filtered_feature_bc_matrix) for downstream tools like Seurat/Scanpy. |
| Seurat [40] [37] or Scanpy [37] | Core Analysis Platform | Comprehensive environment for QC, normalization, clustering, differential expression, and visualization in R/Python. | The primary workspace where data is processed, clustered, and from which the DoHeatmap() (Seurat) or pl.heatmap() (Scanpy) functions are called. |
| scViewer [40] | Interactive Visualization App | An R/Shiny tool for interactive exploration of differential expression and co-expression. | Allows dynamic filtering and heatmap generation based on statistical testing (NBGMM) without coding. |
| Palo [38] | Visualization Enhancement | R package for spatially-aware color palette optimization. | Ensures cluster annotations on heatmaps are colored for maximum visual discriminability. |
| Deep Visualization (DV) [32] | Advanced Dimensionality Reduction | A deep learning framework for structure-preserving, batch-corrected embeddings. | Generates improved low-dimensional coordinates that can lead to more biologically meaningful clustering and heatmap organization. |